Eddie and Kinect Co-Star in Robotics Projects

Written by Lucy Black

Monday, 04 June 2012

All three prize-winning projects in the Microsoft Robotics@Home competition used the Kinect sensor. The Grand Prize went to SmartTripod, an invention every videographer would love to own.

As we reported last year, the idea of the Robotics@Home competition was to stimulate robotics research and, specifically, to promote the use of Microsoft Robotics Developer Studio. After the first phase of the competition, in which contestants submitted their ideas for home robot usage, ten finalists were provided with EDDIE, a commercially available robot platform from Parallax, to work on their final submissions - videos of their robots in action.

The Grand Prize of $10,000 went to Arthur Wait for his SmartTripod robot, which he explains in this video. The opening part uses the SmartTripod itself to good effect for a very smooth and natural presentation before moving on to an explanation of the project:

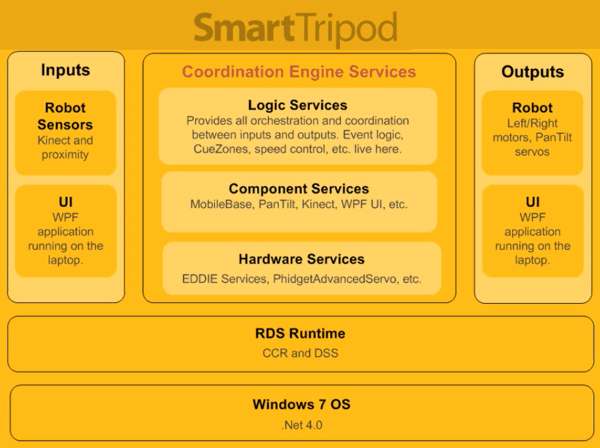

The mobile base is the "standard-issue" EDDIE plus a laptop and an extra battery to meet the power requirements of the pan and tilt gears. A lightweight tripod is attached to the platform, topped by a custom-built tripod head with two decks. The bottom deck supports the Kinect, which is used for tracking the subject of the video. Above it is the pan and tilt system, driven by a Phidgets advanced servo board and two servos - one for pan and the other for tilt - with two gearing mechanisms from ServoCity that provide a 5-to-1 gear ratio. This allows really fine-grained control of the camera's movements, which is what makes the footage so smooth. Arthur Wait then discusses the project's software, summarizing its architecture in a diagram.

The SmartTripod user interface is built in Windows Presentation Foundation and uses the WPF adapter for Robotics Developer Studio (RDS). It is explained in full, which other Kinect developers will find very interesting. Excerpts from a cookery demonstration video are then used to illustrate SmartTripod's three modes: Follow Mode, which uses the Power Factors to track the moving subject; Dolly Mode, for following the subject horizontally; and Static Mode, where the base remains fixed but pan and tilt are used to good effect.

The use of gesture to control the SmartTripod is a crucial element of the project and so the Cue Zones area of the interface is also discussed with examples. Altogether the video presentation, created with the robot itself, is a polished piece of work.
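To see why the 5-to-1 gearing on the pan and tilt head gives such fine control, it helps to work through the arithmetic. The sketch below is not taken from the SmartTripod code. It assumes the gearing is a reduction (the servo turns five degrees for every degree the camera turns), and the function names and the 1-degree servo step are hypothetical values chosen for illustration.

```python
GEAR_RATIO = 5.0  # servo degrees per camera degree, from the 5-to-1 figure


def servo_angle_for_camera(camera_deg, gear_ratio=GEAR_RATIO):
    """Servo rotation needed to turn the camera by camera_deg degrees."""
    return camera_deg * gear_ratio


def camera_resolution(servo_step_deg, gear_ratio=GEAR_RATIO):
    """Smallest camera movement for a given smallest servo step."""
    return servo_step_deg / gear_ratio


if __name__ == "__main__":
    # Panning the camera by 10 degrees takes 50 degrees of servo travel.
    print(servo_angle_for_camera(10.0))
    # A hypothetical 1-degree servo step moves the camera by only 0.2 degrees.
    print(camera_resolution(1.0))
```

Under these assumptions, any jitter or quantization in the servo position is divided by five before it reaches the camera, which is consistent with the smooth motion seen in the video.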

Two other prizes were awarded. The First Prize of $5,000 went to Todd Christell for a robot designed for use by elderly people living independently in their own homes. As shown in the video below, in this project EDDIE is programmed not only to monitor the senior concerned in a non-invasive way, but also to respond in an emergency, such as a fall, by detecting the word "Help" and locating the individual by voice - so in this project the Kinect's array of four directional microphones comes into play.

EDDIE's "rescue mode" is indicated by flashing red lights and, while searching, EDDIE also sets up a Skype video call with a human responder.
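Locating a speaker with a microphone array generally relies on the small differences in the time at which the sound reaches each microphone. The video does not describe how this project computes the direction, so the following is only a generic sketch of the idea for a single pair of microphones and a distant source. The function name, the 0.2 m spacing and the speed-of-sound constant are illustrative assumptions, not details of the Kinect or of the winning entry.

```python
import math

SPEED_OF_SOUND = 343.0  # metres per second, approximate value at room temperature


def bearing_from_delay(delay_s, mic_spacing_m, speed=SPEED_OF_SOUND):
    """Estimate the bearing of a distant sound source, in degrees.

    delay_s is the arrival-time difference between the two microphones.
    0 degrees means straight ahead; the sign shows which side the source is on.
    """
    ratio = speed * delay_s / mic_spacing_m
    # Noise can push the ratio slightly outside [-1, 1], so clamp it.
    ratio = max(-1.0, min(1.0, ratio))
    return math.degrees(math.asin(ratio))


if __name__ == "__main__":
    spacing = 0.2  # hypothetical distance between the two microphones, in metres
    delay = 0.5 * spacing / SPEED_OF_SOUND  # delay produced by a source at 30 degrees
    print(round(bearing_from_delay(delay, spacing), 3))
```

A robot could turn toward the estimated bearing and repeat the measurement as it moves, narrowing down where the call for help came from.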

The Second Prize-winning video also shows EDDIE responding to voice - but in this case voice is used in combination with gesture to issue instructions. The idea implemented by the three-man Plant Sitter Team is to have its EDDIE-based Autonomous Mobile Plant Sitter (AMPS) water plants according to a schedule while you are away from home. The additional components of this project are the AL5D robotic arm from Lynxmotion, which has five degrees of freedom and is used to direct the hose, and a watering mechanism with an L12 linear actuator from Firgelli. For the localization and mapping required by this project, the team modified the Kinect depth map so that it could be used as an input to the tinySLAM algorithm, which uses particle filtering to compute the position of the robot. In the video we mainly see AMPS being trained by a human. The footage is very shaky and would have benefited from SmartTripod, and it doesn't really do justice to the idea that this is an autonomous robot that performs a very worthwhile task.
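For readers unfamiliar with particle filtering, the following minimal sketch shows the predict, weight and resample cycle on a deliberately simple problem: a robot on a one-dimensional line measuring its distance to a single landmark. It is not tinySLAM and not the Plant Sitter Team's code. The function names, noise levels and landmark position are all assumptions made for illustration.

```python
import math
import random


def particle_filter_step(particles, control, measurement, landmark, rng,
                         motion_noise=0.1, sensor_noise=0.5):
    """Run one predict/weight/resample cycle and return the new particles."""
    # Predict: move each particle by the commanded distance plus some noise.
    moved = [p + control + rng.gauss(0.0, motion_noise) for p in particles]
    # Weight: score each particle by how well its expected range to the
    # landmark matches the measured range (Gaussian sensor model).
    weights = []
    for p in moved:
        error = measurement - abs(landmark - p)
        weights.append(math.exp(-0.5 * (error / sensor_noise) ** 2))
    if sum(weights) == 0.0:
        return moved  # measurement was uninformative; keep the prediction
    # Resample: draw particles in proportion to their weights.
    return rng.choices(moved, weights=weights, k=len(moved))


def estimate(particles):
    """Position estimate: the mean of the particle set."""
    return sum(particles) / len(particles)


def run_demo(seed=0, steps=5, count=500):
    rng = random.Random(seed)
    landmark = 10.0   # hypothetical landmark position
    true_pos = 0.0    # the robot's real position, unknown to the filter
    particles = [rng.uniform(-2.0, 2.0) for _ in range(count)]
    for _ in range(steps):
        true_pos += 1.0                  # robot drives forward one unit
        measured = landmark - true_pos   # noise-free range, for simplicity
        particles = particle_filter_step(particles, 1.0, measured, landmark, rng)
    return estimate(particles)


if __name__ == "__main__":
    print(run_demo())  # should be close to the true position of 5.0
```

A real system works with a two-dimensional pose and a full depth scan rather than a single range reading, but the cycle is the same: many candidate poses are moved, scored against the sensor data, and the best-supported ones survive.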

More Information

Announcing the Winners of the Microsoft Robotics @ Home Competition

Related Articles

Kinect SDK 1.5 - Now With Face Tracking


| Last Updated ( Monday, 04 June 2012 ) |