| LightSpace augmented reality the future UI |

| Tuesday, 05 October 2010 | |||

|

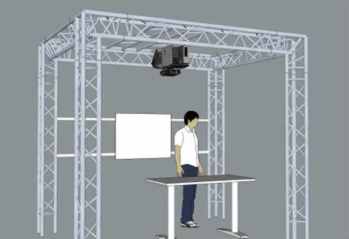

LightSpace uses 3D position-sensing technology and projection to create a volumetric user interface that borders on augmented reality. It's not so long since we were all entranced by the original GUI with its icons and its key innovation - the mouse. More recently we moved on to gestural interfaces, with touch as the key input method. Now Microsoft Research are showing off a system that gives us some idea of where this might all be going. LightSpace is a user interface that uses a whole room.

Virtual objects are projected onto fixed surfaces such as a tabletop or a wall. So far this is just surface-based computing and nothing new - but in this version there are multiple surfaces in the room and a user can "pick up" a virtual object and "carry" it from one surface to another as if it were a real object. This has much in common with a full Virtual Reality approach to the UI, although it's more like VR melded with the real world, so perhaps it's better described as an augmented reality UI. The hardware uses depth-measuring video cameras much like the system in the Kinect video game controller. This means that while the full system is confined to the lab for the moment, there is low-cost hardware already available that could do at least part of the job. To see it in action just play the video:

Now despite the brave attempts to find applications for this technology - school lessons where children carry their projects to the front of the class in virtual form don't seem convincing to me - it looks like enough fun that you would want to use it anyway. In a domestic environment you can see that it might be good to read an ebook on a coffee table and carry it to another table, but what about picking it up and reading it in your hand?

Perhaps this is another technology in search of a problem to solve? But then again I did say that touch screens would never catch on because of the smudges and that the mouse was far too dangerous to use on a desktop...

|

|||

| Last Updated ( Tuesday, 05 October 2010 ) |