The Digital Camera

Written by Harry Fairhead

Wednesday, 21 March 2012

Digital cameras are everywhere, and both users and apps can assume that one is available most of the time. We take an in-depth look at how a digital camera works and some of the difficulties of using one.

Digital cameras have changed the way we take photographs. You no longer have to wait for photos to be processed, and you can snap away without worrying about the cost of film. We now take more photos, for sillier reasons, than ever before. Photography is everywhere, and software and users alike can assume that a camera is usually available. Let's look at how a camera works and some of the difficulties.

The key parts of a digital camera are:

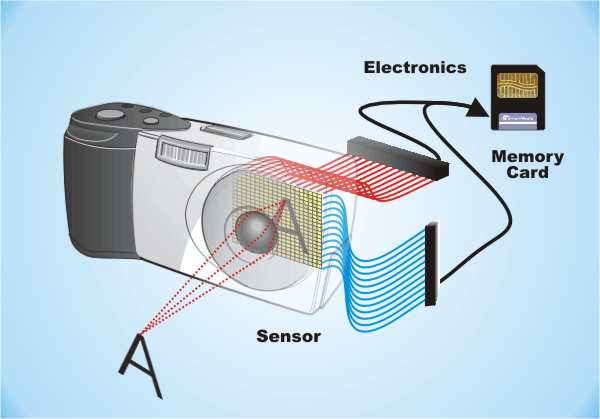

The resulting stream of numbers is stored in flash memory, ready to be downloaded. A microprocessor is needed to control all of the steps in taking and storing a photo. Its tasks include responding to the buttons that the user presses, adding intelligence to the exposure, focus and other settings, and performing any digital signal processing needed to improve and compress the image before it is stored in the flash memory.

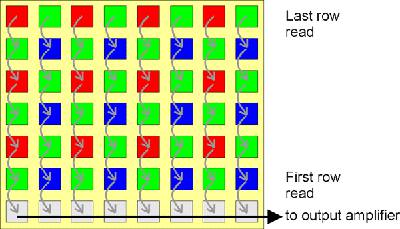

Sensor technology

The sensor is one of the most important parts of the digital camera: put simply, it determines how good the pictures are going to be. A sensor is composed of a large number of light-sensitive areas, or pixels, and the resolution of the final image is determined by how many pixels there are in total. The number of pixels has been increasing with each generation of digital cameras, but there is more to the quality of a sensor than this.

There are two major sensor technologies: CCD, or Charge Coupled Device, and CMOS, or Complementary Metal Oxide Semiconductor. The first to be invented was CCD and it is still considered to be the best. It works by creating an array of photosensitive cells. Each cell accumulates an electric charge as light falls on it. The cells are connected together so that each row can shift its accumulated charge to the row below. The final row shifts its charge into an output register and then on to an A-to-D converter.

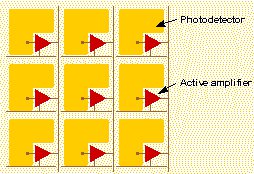

The CCD shifts one whole row at a time into the readout register. The readout register then shifts one pixel at a time to the A-to-D converter.

A CMOS sensor also uses photosensitive devices arranged in an array, but in this case each pixel is addressed by its row and read out directly. This is very similar to the way a standard RAM chip works, and in fact CMOS sensors are basically just RAM chips designed to store the amount of charge produced by light falling on each pixel. CMOS sensors can even be made using the same production lines that make RAM chips, and this is the reason they are much cheaper than CCDs.

However, there are drawbacks to the CMOS design. A CMOS sensor needs additional electronics at each pixel to process the data, and this results in an area that isn't sensitive to light. A CCD sensor, on the other hand, can cover nearly the entire area of each pixel with a photosensitive element. For this reason CMOS sensors are inherently less sensitive to light and provide a noisier image. On the plus side, they use a lot less power than CCDs.
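The two readout schemes described above can be sketched in a few lines of Python. This is only a toy model under stated assumptions: the sensor is a list of rows of integer charges, the function names `ccd_readout` and `cmos_read_row` are hypothetical, and the left-to-right order in which the register empties is an arbitrary choice rather than something the article specifies.

```python
def ccd_readout(charges):
    """Toy CCD readout: rows shift down into a readout register,
    which is then emptied one pixel at a time.

    `charges` is a list of equal-length rows; the last row is the one
    next to the readout register (an assumption of this sketch).
    """
    height = len(charges)
    width = len(charges[0]) if height else 0
    rows = [list(row) for row in charges]  # copy so the caller's data is untouched
    stream = []
    for _ in range(height):
        register = rows[-1]                   # bottom row moves into the register
        rows = [[0] * width] + rows[:-1]      # every other row shifts down one place
        for charge in register:               # register shifts out pixel by pixel
            stream.append(charge)             # each value would go to the A-to-D
    return stream


def cmos_read_row(charges, row):
    """Toy CMOS readout: a row is addressed directly, much like a RAM access."""
    return list(charges[row])


sensor = [[1, 2, 3],
          [4, 5, 6]]
print(ccd_readout(sensor))       # the row nearest the register comes out first
print(cmos_read_row(sensor, 0))  # any row can be read without shifting the others
```

The point of the contrast is that the CCD has to move every charge through the whole array to reach the output, while the CMOS-style access reads the addressed row in place.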

A CMOS sensor has additional electronics at each pixel and the light-sensitive area is smaller.

Over time the disadvantage of the CMOS sensor has been turned to its advantage. It is relatively easy to add electronics at each pixel and on the same chip, and this means that CMOS can use sophisticated techniques to improve its performance. Early sensors were “passive-pixel” sensors, and these not only lacked sensitivity but also showed regular patterns of noise due to the variation in sensitivity from pixel to pixel. The current crop of CMOS sensors makes use of “active-pixel” technology to cancel the noise and increase the sensitivity. In addition, CMOS sensors are often built with a layer of micro-lenses that focus the light onto the smaller sensitive area. As a result CMOS sensors are slowly taking over, even in the professional field. Samsung, for example, has a CMOS sensor that is at least 10 times more sensitive than a CCD sensor. CCD sensors aren't improving as fast as CMOS sensors, but FujiFilm has developed a better CCD by using larger octagonal pixels that make it more sensitive.

Of course an important factor in quality is the number of pixels a sensor has. According to Kodak, the minimum numbers of pixels you need for various print sizes to appear “photorealistic” are:

An 8x10 print is usually considered to be the typical size used for a professional photo, so given that there are phone cameras that offer around 10 megapixel resolution, it looks as if resolution is no longer an issue. The only flaw in this argument is that it assumes we are using the entire sensor area for the print. If you crop or zoom in on an area to produce the print, then the number of pixels available goes down. For reference, it is worth knowing that a 35mm slide can be considered to have anything from 5 to 80 megapixels.
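The effect of cropping on the pixel count is simple arithmetic, and a short sketch makes it concrete. The function name `pixels_after_crop` and its parameters are hypothetical, and the sketch assumes a rectangular crop expressed as fractions of the frame's width and height.

```python
def pixels_after_crop(sensor_megapixels, width_fraction, height_fraction):
    """Megapixels left after keeping a rectangular part of the frame.

    Both fractions are the share of the original width and height that
    the crop keeps, so each must be greater than 0 and at most 1.
    """
    if not (0 < width_fraction <= 1 and 0 < height_fraction <= 1):
        raise ValueError("crop fractions must be in the range (0, 1]")
    return sensor_megapixels * width_fraction * height_fraction


# Keeping the central half of the width and half of the height of a
# 10 megapixel image leaves only a quarter of the pixels.
print(pixels_after_crop(10, 0.5, 0.5))  # 2.5
```

Because the loss scales with the area of the crop rather than with its width, even a modest crop removes a large share of the pixels available for the print.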


Last Updated ( Wednesday, 21 March 2012 )