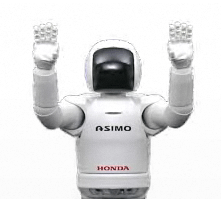

Asimo shows off dance moves

Written by Lucy Black

Sunday, 02 October 2011

Honda's humanoid robot, Asimo, demonstrated its latest abilities at the 2011 IEEE International Conference on Intelligent Robots and Systems, held recently in San Francisco. Scientists at the Honda Research Institute in Mountain View, California, are working on robotics technologies to assist humans, with the aim of improving robot mobility and communication. The surprise is that they are using the Kinect 3D sensor: it seems this low-cost sensor is good enough for large robots as well as small ones. Victor Ng-Thow-Hing, Behzad Dariush, and colleagues were at the conference, where IEEE Spectrum captured this video demonstrating two recent advances.

At the start of the video, Asimo mimics in real time the movements of the person in the background. To achieve this, the researchers use Microsoft's Kinect 3D sensor to track selected points on the person's upper body, and their software applies an inverse kinematics approach to turn those points into control commands that make Asimo move. The software prevents self-collisions and excessive joint motions that might damage the robot, and it is integrated with Asimo's whole-body controller so that the robot keeps its balance. The researchers say that the ability to mimic a person in real time could find applications in robot programming and interactive teleoperation, among other things. You may well have seen similar demonstrations using smaller robots such as the Nao, but this one shows that the idea scales to larger robots, and it looks even more impressive.

Later in the video, the scientists explain how they are using gestures to improve Asimo's communication skills. They are developing a gesture-generating system that takes any input text, analyzes its grammatical structure, timing, and choice of phrases, and automatically generates matching movements for the robot. To make the behavior more realistic, the scientists used a vision system to capture humans performing various gestures and then incorporated these natural movements into the gesture-generating system. In the video, the upper-body dance movements look very natural, and Asimo certainly seems to enjoy dancing.
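Honda has not published the code behind the mimicking demonstration, so the following is only a generic textbook illustration of the inverse kinematics idea: given a tracked hand position, solve for the joint angles that put a robot's hand there, then clamp them to safe limits. It assumes a hypothetical two-link planar arm; the link lengths, joint limits, and the names `forward` and `inverse` are invented for illustration. A real humanoid controller handles many more joints, self-collision checks, and balance, none of which is modeled here.

```python
import math

# Hypothetical link lengths (metres) for a two-link planar arm
UPPER_ARM = 0.30
FOREARM = 0.25
# Hypothetical joint limits (radians), standing in for a robot's safe range
SHOULDER_LIMITS = (-math.pi / 2, math.pi / 2)
ELBOW_LIMITS = (0.0, 2.6)


def clamp(value, low, high):
    """Restrict value to the closed interval [low, high]."""
    return max(low, min(high, value))


def forward(shoulder, elbow):
    """Forward kinematics: hand position (x, y) for the given joint angles."""
    x = UPPER_ARM * math.cos(shoulder) + FOREARM * math.cos(shoulder + elbow)
    y = UPPER_ARM * math.sin(shoulder) + FOREARM * math.sin(shoulder + elbow)
    return x, y


def inverse(x, y):
    """Analytic inverse kinematics for the hand target (x, y).

    Returns (shoulder, elbow) clamped to the joint limits, so an
    unreachable or unsafe target yields the nearest allowed pose
    rather than an excessive joint motion.
    """
    # Law of cosines gives the elbow bend; clamping the cosine handles
    # targets that are too far away or too close to reach exactly
    cos_elbow = (x * x + y * y - UPPER_ARM**2 - FOREARM**2) / (2 * UPPER_ARM * FOREARM)
    elbow = math.acos(clamp(cos_elbow, -1.0, 1.0))
    # Shoulder angle: direction to the target minus the offset caused by the bent elbow
    shoulder = math.atan2(y, x) - math.atan2(
        FOREARM * math.sin(elbow), UPPER_ARM + FOREARM * math.cos(elbow)
    )
    return clamp(shoulder, *SHOULDER_LIMITS), clamp(elbow, *ELBOW_LIMITS)


if __name__ == "__main__":
    # A hypothetical wrist position from a depth sensor, already scaled to robot size
    angles = inverse(0.35, 0.2)
    print(angles, forward(*angles))
```

In a mimicking loop, `inverse` would be called once per sensor frame with the latest tracked position. Clamping after solving is the simplest way to respect joint limits; more complete systems usually fold limits and collision constraints into the solver itself instead.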


Last Updated (Sunday, 02 October 2011)