| Network Coding Speeds Up Wireless by 1000% |

| Written by Mike James | |||

| Friday, 26 October 2012 | |||

|

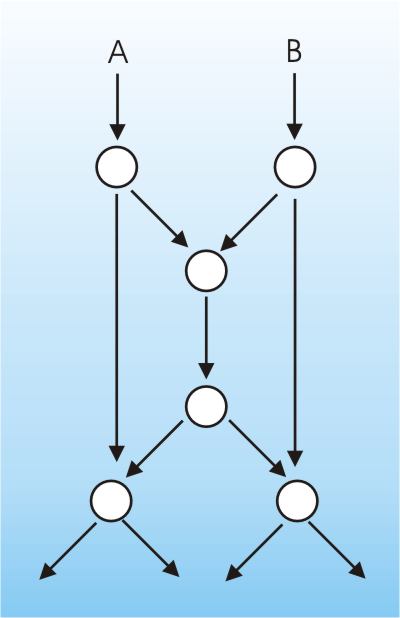

Adding some error correction to a standard TCP wireless connection can speed things up by 1000%. But isn't your ordinary error correcting code this is new. In a demonstration by a team led by MIT's Muriel Médard, the throughput of a phone connection went from 0.5Mbps to 13.5MbpS. Claude Shannon single-handedly invented information theory and since then nothing as revolutionary has happened. We can compute the capacity of any channel and then is just a matter of finding the right coding to deliver the capacity. You can't do better than the channel capacity, but by messing up the coding and protocol you can do much worse. It all looked like a solved problem but networks with nodes that can communicate with one other and pass messages have posed something of a problem in that it was difficult to make a clear statement about what the capacity of the entire network was. The breakthrough came in 2000 when the idea that a multinode network could transform the data by mixing it together as it flowed though the nodes was presented to the world by R. Ahlswede, N. Cai, S.-Y. R. Li, and R. W. Yeung, "Network Information Flow", (IEEE Transactions on Information Theory, IT-46, pp. 1204-1216, 2000). Using network coding it is possible to work out the maximum capacity of multicast networks. All of this sounds mysterious but you can see the sort of behavior that leads to network coding being interesting in the following canonical example. Suppose you have a network as shown in the diagram. Each link can pass a single package in unit time and your problem is to deliver packets A and B to the sink nodes in minimum time.

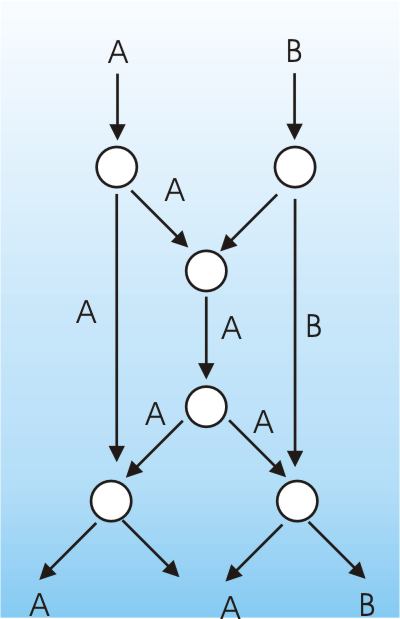

Using standard packet switching the best you can do do is to send A and B to the sink node and at the same time use the center channel to send either A or B.If we send A then the packet traffic looks like:

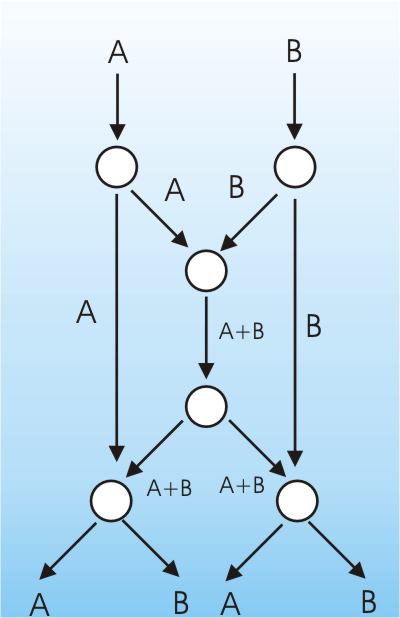

You can see that one of the sink nodes gets A B but the other just gets A. If you use the send B down the center channel then the left-hand node gets A B and the right-hand node only gets B. So can you arrange for both sink nodes to get both packets in one step? If you allow the nodes to form linear combinations of the packets and transmit these in place of the original packets then, yes, you can. If the first central node transmits not A or B but A+B then we have:

Now the sink nodes can obtain A and B at the same time by subtracting A from A+B and B from A+B. This is the essence of network coding. By transmitting linear combinations of packets you can improve the total information transmitted in a multicast system. The latest work uses random linear network coding, which is a little more advanced. All we do in this case is allow each node to form random linear combinations of the packets. To do this the node picks random coefficients and then use these to form linear combinations of the packet. Each node also adds the coefficients to the packet headers and then sends them on their way. Sink nodes no longer receive the original packets but the linear combinations. By extracting the coefficients, the sink nodes can construct a matrix which represents the transformation that the original packets have been subjected to. All the sink node has to do is invert the matrix and multiply to recover the original packets. (In practice the equations are solved by Gaussian elimination which is fast.) The reason that this works better than just sending the original packets is that sink nodes simply have to wait until they have a matrix of coefficients that is non-singular and then they can recover the data. As long as sufficient linear combinations are sent then this is likely to happen with a high probability even if some packets are lost. The overheads are simply having to send some extra packets and the tiny extra storage needed for the coefficients. This is more like packet erasure correction than error correction as missing lost packets don't stop the original packets from being recovered. At this point you might be thinking that incorporating some sort of error correction into the packets would be a good idea but this would require a bit change to the TCP protocol. TCP isn't a good protocol for an unreliable channel. It sends packets one at a time and relies on the receiver to acknowledge them. If any packets go missing then the receiver signals this and the transmitter sends the packet again. Over wired connections this works reasonably well because few packets are lost and the problem is usually network congestion for which slowing things down tends to help. However, over wireless networks, especially phone networks, packet loss is common. What happens in this case is that the network capacity is wasted in resending packets and in the handshaking needed to request a resend. As the packet loss increases so the actual useful throughput of the network falls of rapidly. Even quite small percentage packet loss can reduce the capacity of the connection to 10% of its value. The latest tests led by Muriel Médard, professor at MIT's Research Laboratory of Electronics, used an Amazon EC2 setup to transmit network coded packets to their mobile phones on the on a New York-to-Boston Acela train which is known for poor reception. The coding boosted the effective bandwidth from 0.5Mbps to 13.5Mbps. The improvement was enough to watch You Tube videos while other passengers struggled to get on line. The actual details of the coding methods used are secret and a company has been set up to arrange licensing deals. Presumably in the future the coding could be built into routers and phones, leaving the software still believing that it is using old-fashioned, inefficient, unmodified, TCP. Of course, how this improvement would scale if every user was connected using network coding is another matter. In principle though, network coding means that TCP can be greatly improved but with only minor tweaks.

More InformationMIT Technology Review

Comments

or email your comment to: comments@i-programmer.info

To be informed about new articles on I Programmer, install the I Programmer Toolbar, subscribe to the RSS feed, follow us on, Twitter, Facebook, Google+ or Linkedin, or sign up for our weekly newsletter.

|

|||

| Last Updated ( Friday, 08 July 2022 ) |