The Display That Does Away With Glasses

Written by David Conrad

Monday, 28 July 2014

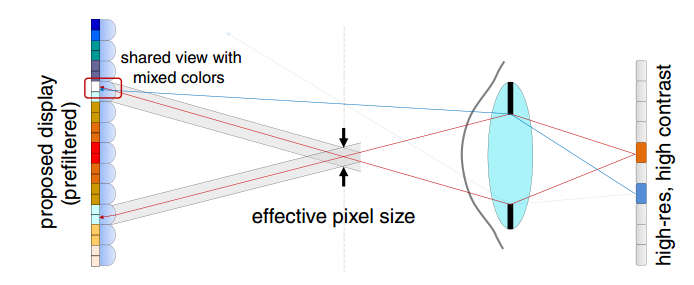

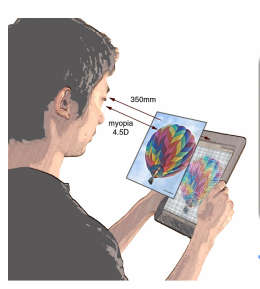

The light field approach to computational photography has more uses than capturing 2.5D images - it can create a display that you can view clearly without your glasses.

This is a fun piece of image processing, but it isn't clear what its killer application is, so read on and see if you can think of one.

The idea is fairly simple from a computational point of view. Each eye defect, or at least each of the common ones, is equivalent to convolving the sharp image with a mask. If you are short sighted then, without glasses, the focal length is wrong and a pinpoint of light is imaged on your retina as a small disk. So when you look at a full image you see it as if it had been convolved with a small disk, and the result is that each sharp point is smeared out - hence the entire image looks blurred. Other eye defects are equivalent to convolving the original image with a shape other than a disk, and hence the mechanism is very general.

To cure the aberration all you have to do is undo the convolution, that is deconvolve the image, and this is what prescription eye glasses do even if we don't think of it in this way - we generally think of it as adjusting the focal length back to what it should be.

Another approach is to modify the original image so that the convolution operation restores the image to its sharp original form. In other words, you change the image so that a normal sighted person thinks it looks odd, but when a short sighted person looks at the image it is sharp. This isn't an easy task and it has been tried before, but the new approach, described in a paper to be presented at this year's SIGGRAPH, presents a sharp full contrast image that can be viewed from different positions.

What is really interesting is that the mechanical implementation is very simple and depends on low cost off the shelf components.
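To make the blur model concrete, here is a minimal sketch in plain Python of a single point of light being convolved with a small disk. The function names `disk_kernel` and `convolve2d`, the 9x9 image and the radius of 2 are all assumptions chosen for illustration; none of this comes from the paper.

```python
def disk_kernel(radius):
    """Normalized disk-shaped mask: a toy stand-in for the defocus blur."""
    size = 2 * radius + 1
    kernel = [[1.0 if (x - radius) ** 2 + (y - radius) ** 2 <= radius ** 2 else 0.0
               for x in range(size)] for y in range(size)]
    total = sum(map(sum, kernel))
    return [[v / total for v in row] for row in kernel]


def convolve2d(image, kernel):
    """Same-size 2D convolution with zero padding outside the image."""
    h, w = len(image), len(image[0])
    kh, kw = len(kernel), len(kernel[0])
    oy, ox = kh // 2, kw // 2
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            acc = 0.0
            for j in range(kh):
                for i in range(kw):
                    sy, sx = y + oy - j, x + ox - i
                    if 0 <= sy < h and 0 <= sx < w:
                        acc += image[sy][sx] * kernel[j][i]
            out[y][x] = acc
    return out


# A sharp image: one bright point in the middle of a dark 9x9 field.
sharp = [[0.0] * 9 for _ in range(9)]
sharp[4][4] = 1.0

# What a defocused eye would receive under this toy model.
blurred = convolve2d(sharp, disk_kernel(2))

for row in blurred:
    print("".join("#" if v > 0 else "." for v in row))
```

The single point comes out as a disk of dimmer pixels, and a full image is simply the sum of many such smeared points.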
Although the prototype is simple, the technique is general: a range of methods of creating the light field could be used, and a specially designed component could increase the resolution and widen the viewing angle. In the prototype system all that was used to create the light field was a pinhole mask, but a grid of micro-lenses would do the same job. The display used was an iPod touch.

Roughly speaking, the light field grid is used so that, although the out of focus eye fails to bring a pair of rays from one point on the screen to a focus at a single point, it does bring light from two different points on the screen to a single focus - see the diagram below:

You can see that now two points on the display control what is imaged at a single point on the retina. The algorithm computes the transformation of the original image needed to produce a sharp image on the retina - this is called prefiltering. The only problem is that the prefiltering has to be performed per image, and for a given degree of short sightedness, and it takes about 20 seconds per image after an initial setup phase. The researchers hope to extend the method to video in the future, using a GPU for real-time performance. You can see more about this and previous work in the following video:

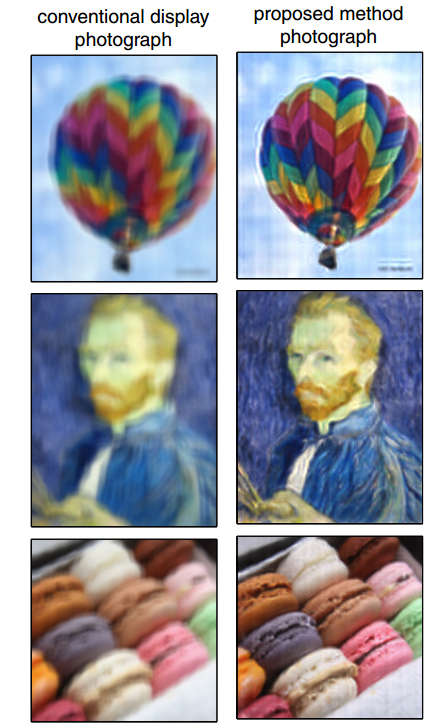

So does it work? To check that it does, an image was projected using the method and photographed using an out of focus camera.
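The same kind of check can be imitated in a toy simulation. The sketch below is not the researchers' light field algorithm; it is the simpler idea of prefiltering a single image with a regularized inverse filter, applied to a 1D signal, with a 3-sample box blur standing in for the out of focus camera. The names `dft`, `circular_blur` and `prefilter`, the test signal and the damping value `eps` are all assumptions made for illustration.

```python
import cmath


def dft(x, inverse=False):
    """Naive O(n^2) discrete Fourier transform, fine for a toy signal."""
    n = len(x)
    sign = 1 if inverse else -1
    out = [sum(x[t] * cmath.exp(sign * 2j * cmath.pi * k * t / n) for t in range(n))
           for k in range(n)]
    return [v / n for v in out] if inverse else out


def circular_blur(signal, kernel):
    """Circular convolution of the signal with a kernel centred on index 0."""
    n = len(signal)
    return [sum(kernel[j] * signal[(i - j) % n] for j in range(n)) for i in range(n)]


def prefilter(signal, kernel, eps=1e-4):
    """Regularized inverse filter: divide by the blur's spectrum, damped by eps."""
    S, H = dft(signal), dft(kernel)
    P = [s * h.conjugate() / (abs(h) ** 2 + eps) for s, h in zip(S, H)]
    return [v.real for v in dft(P, inverse=True)]


n = 16
sharp = [0, 0, 0, 0, 1, 1, 1, 1, 0, 0, 0, 0, 1, 1, 0, 0]
# Assumed blur: average of each sample and its two neighbours.
kernel = [1 / 3, 1 / 3] + [0.0] * (n - 3) + [1 / 3]

# What the "out of focus camera" sees with and without prefiltering.
seen_plain = circular_blur(sharp, kernel)
seen_prefiltered = circular_blur(prefilter(sharp, kernel), kernel)

err_plain = max(abs(a - b) for a, b in zip(seen_plain, sharp))
err_prefiltered = max(abs(a - b) for a, b in zip(seen_prefiltered, sharp))
print(f"max error without prefiltering: {err_plain:.3f}")
print(f"max error with prefiltering:    {err_prefiltered:.3f}")
```

The blurred view of the prefiltered signal lands much closer to the sharp original than the blurred view of the unmodified one. The prefiltered signal itself may stray outside the displayable range of 0 to 1, which is one reason simple single-image prefiltering tends to cost contrast.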

As the photographs show, it does seem to work. Remember the pictures on the left are what you see with an unmodified screen with the camera out of focus, and the pictures on the right are what you see with the correction method in use and the same out of focus camera.

The same technique can be modified to correct more complex eye defects - coma, spherical aberration and so on. Some of these defects are difficult to correct well using lenses.

However we come to the bottom line - why? If you work with a computer then you know that seeing a screen clearly without glasses might be a big advantage, but who is to say that the correction method doesn't introduce additional eye strain - like 3D viewing. It might be a good way to view a monitor and be kind to your eyes, but more research is needed. Also, do you really want to have the screen sharp and everything else blurred?

Perhaps the real killer app is correcting head-up displays and 3D virtual reality headsets so that they work without glasses, but a pair of compensation lenses as used in camera viewfinders would do the job as well. Any suggestions?

More Information

http://graphics.berkeley.edu/papers/Huang-EFD-2014-08/index.html

Related Articles

Computational Camouflage Hides Things In Plain Sight

Google Has Software To Make Cameras Worse

CSI Style Zoom Sees Faces Reflected In The Eye

Any Mobile Camera Can Be A 3D Scanner

Google - We Have Ways Of Making You Smile

Computational Photography On A Chip

Super Seeing Software Ready To Download

Blink If You Don't Want To Miss it

Better 3D Meshes Using The Nash Embedding Theorem

Light field camera - shoot first, focus later


Last Updated ( Monday, 28 July 2014 )