Fun Kaggle Challenge To Tell Dogs From Cats

Written by Alex Armstrong

Wednesday, 02 October 2013

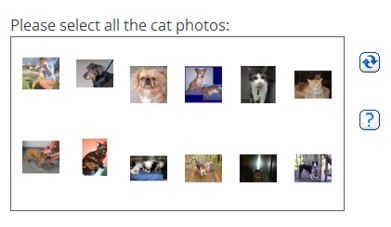

Dogs vs Cats is a recently launched Kaggle contest that is both practically and theoretically interesting - and while it has a serious side, it's also a chance to show that machine learning can be fun. Can you devise an algorithm to automatically distinguish dogs from cats?

Normally when we report news of a contest there are cash prizes to be won. In this case participants are competing mainly for the fun of it. This is the first of a new category of competition on Kaggle, the website that hosts predictive modelling competitions, that takes place in the Kaggle Playground.

Announcing the Playground, Will Cukierski, who is also the administrator of the competition to automatically distinguish photos of cats and dogs, wrote: "The Playground will be a place for romping around the machine learning landscape, carefree and full of algorithmic zest. The problems may be a bit quirky, but there’s nothing stopping them from being serious. Instead of being demand-driven (e.g., a company wants an algorithm to solve a problem), Playground competitions will be idea-driven."

The idea behind Dogs vs Cats, which runs until February 1, 2014, is that while humans (and dogs and cats themselves) find it easy to tell the difference between the two species, computers have found it difficult. So difficult, in fact, that photos of cats and dogs have been used as a HIP (Human Interactive Proof) challenge to ensure that visitors to websites are indeed human.

Meanwhile, machine learning classifiers have already been devised to tackle this recognition task, and a paper outlining the current state of research is provided as part of the competition details. The competition provides contestants with part of the Microsoft Research Asirra (Animal Species Image Recognition for Restricting Access) data set, a collection of over 3 million photos gathered in partnership with animal adoption charity Petfinder.com. You are also allowed to use external data, as long as you provide sufficient information for other contestants to access the same photos.

If you are tempted to join in, be warned - the competition is going to be fierce. Although there are still four months to go, there is already a score of over 0.96 at the top of the leaderboard. But can this near-perfect (or should that be purrfect) solution be improved upon? The leaderboard is calculated on around 30% of the test data, with the other 70% used to calculate the final results.
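To make the 30%/70% leaderboard split concrete, here is a minimal Python sketch. It assumes the score is plain classification accuracy (the article only quotes the number), and the labels, predictions, function names and the 4% error rate are all made up for illustration; this is not Kaggle's actual scoring code.

```python
import random


def split_leaderboard(n_test, public_fraction=0.3, seed=0):
    """Randomly partition test indices into a public and a private subset."""
    rng = random.Random(seed)
    indices = list(range(n_test))
    rng.shuffle(indices)
    n_public = round(n_test * public_fraction)
    return sorted(indices[:n_public]), sorted(indices[n_public:])


def accuracy(labels, predictions, subset):
    """Fraction of images in `subset` whose predicted label matches the truth."""
    correct = sum(1 for i in subset if labels[i] == predictions[i])
    return correct / len(subset)


# Toy ground truth: 1 = dog, 0 = cat (invented data, not the Asirra set).
rng = random.Random(1)
labels = [rng.randint(0, 1) for _ in range(1000)]

# Hypothetical classifier that gets roughly 4% of the images wrong.
predictions = [y if rng.random() > 0.04 else 1 - y for y in labels]

public_idx, private_idx = split_leaderboard(len(labels))
public_score = accuracy(labels, predictions, public_idx)
private_score = accuracy(labels, predictions, private_idx)

print(f"public leaderboard (30%): {public_score:.3f}")
print(f"final results (70%):      {private_score:.3f}")
```

The two scores will usually differ slightly, which is one reason a lead on the public leaderboard is not guaranteed to survive to the final results.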

The second Playground competition has already launched. Titled Partly Sunny with a Chance of Hashtags and run in collaboration with CrowdFlower, it provides a set of tweets related to the weather and asks teams to determine, for each tweet, whether it has a positive, negative, or neutral sentiment, whether the weather occurred in the past, present, or future, and what sort of weather it references. If this sounds like your sort of fun, it runs until December 1, 2013.

If you want to devise a Playground competition, the three ingredients required, according to Cukierski, are: (1) a non-public ground truth, (2) a fun idea and (3) someone who knows their way around a machine learning competition.


Last Updated ( Wednesday, 02 October 2013 )