New Facebook Computer Vision Tags

Written by Nikos Vaggalis

Monday, 09 January 2017


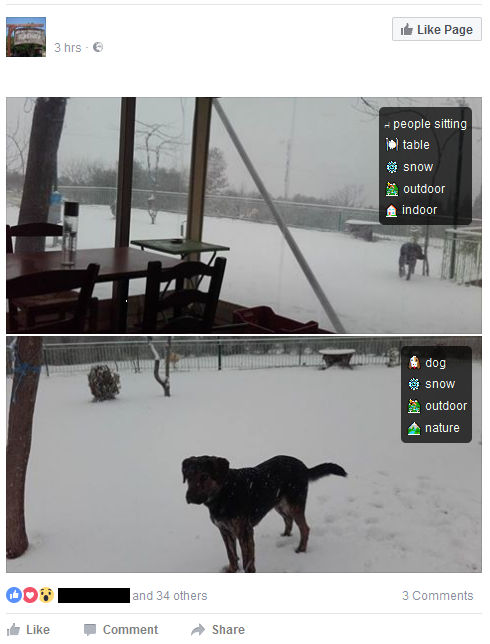

Show Facebook Computer Vision Tags is a new Chrome and Firefox extension that overlays every image appearing on your Facebook timeline with a neural-network-derived classification, revealing what Facebook's algorithms make of it. Should we be worried? Before any image reaches your timeline, Facebook's deep learning framework, a deep ConvNet (convolutional neural network), scans it to recognize the objects it contains and classifies the image based on those findings. These classifications then make their way into the image's HTML alt attribute, annotating it before it appears on the web and on your timeline. As an example of how this works, consider the following picture of a dog out in the snow, shot by people inside a café.

is tagged with:

These classification tokens end up as:

Spot on, I should say. The Show Facebook Computer Vision Tags browser extension then reads those tags and overlays them on the image shown on your timeline.

Although the feature was added for accessibility, to give visually impaired and blind people a text description of a photo, it once more highlights privacy concerns. The technology makes extra information available that can be leveraged for commercial or more nefarious purposes, depending on how you look at the matter. For example, while searching for a destination for your next weekend break, you look for and click on pictures of places that interest you. As innocent as that might be, it lets Facebook's algorithm derive relevant data from the actual content of the images. It can then feed your timeline with targeted ads for not just any hotel, but one that closely matches the ones you've already looked at, since the pictures have already revealed your requirements (snow, nature, outdoors, fireplace, logs and wine).

Of course, this is not the first time that images have come under scrutiny for leaking information. EXIF metadata embedded in a picture's file header can hold location information, and GPS geotagging has existed for years. But with a neural network in the loop, data harvesting reaches new levels of privacy invasion. You can now derive meaning from the pictures themselves and use it to build detailed profiles of who people are and what their preferences are, to the point of making fairly accurate predictions about how they will behave. A prime example is the story of the US retailer Target, which analyzed the huge amount of customer shopping data in its big data stores and worked out that a teenage girl was pregnant before her parents did! That said, what is 'seen' by an algorithm depends on its strength and use case.
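To make the mechanism concrete, here is a minimal Python sketch of the reading half of what an extension like this has to do. It collects the alt attribute of every image in a page's HTML and splits Facebook's description into individual concepts. This is not the extension's actual code, which runs in the browser. The `Image may contain:` prefix and the comma/"and" separated format are assumptions about how Facebook phrases its automatic alt text. The class and function names (`AltTagCollector`, `parse_concepts`, `extract_tags`) and the sample HTML are hypothetical.

```python
from html.parser import HTMLParser

# Assumption: Facebook's automatic alt text starts with this prefix.
ALT_PREFIX = "Image may contain:"


class AltTagCollector(HTMLParser):
    """Collects the non-empty alt attribute of every <img> element."""

    def __init__(self) -> None:
        super().__init__()
        self.alts: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "img":
            for name, value in attrs:
                if name == "alt" and value:
                    self.alts.append(value)


def parse_concepts(alt: str) -> list[str]:
    """Split e.g. 'Image may contain: dog, snow and outdoor' into labels.

    Returns an empty list when the assumed prefix is absent.
    """
    if not alt.startswith(ALT_PREFIX):
        return []
    body = alt[len(ALT_PREFIX):].strip()
    parts = [p.strip() for p in body.split(",") if p.strip()]
    # Assumption: the last two items are joined with "and".
    if parts and " and " in parts[-1]:
        last = parts.pop()
        parts.extend(p.strip() for p in last.split(" and ") if p.strip())
    return parts


def extract_tags(html: str) -> list[list[str]]:
    """Return the concept list of every image whose alt text carries tags."""
    collector = AltTagCollector()
    collector.feed(html)
    collector.close()
    return [c for c in (parse_concepts(a) for a in collector.alts) if c]


if __name__ == "__main__":
    # Hypothetical sample markup, not real Facebook output.
    sample = (
        '<div><img src="a.jpg" alt="Image may contain: dog, outdoor, snow and nature">'
        '<img src="b.jpg" alt="logo"></div>'
    )
    print(extract_tags(sample))  # [['dog', 'outdoor', 'snow', 'nature']]
```

Overlaying the labels on the timeline is the browser-side half, which this sketch leaves out. The standard library's `HTMLParser` keeps the example self-contained and also decodes HTML entities in attribute values.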
In Facebook's case, the deep network is currently deliberately limited by its engineers to recognizing a fixed set of about 100 concepts. These were chosen for their prominence in photos and for how accurately the visual recognition engine can detect them. The concepts have very specific meanings that are not open to interpretation. They cover people's appearance (e.g. baby, eyeglasses, beard, smiling, jewelry), nature (outdoor, mountain, snow, sky), transportation (car, boat, airplane, bicycle), sports (tennis, swimming, stadium, baseball) and food (ice cream, pizza, dessert, coffee). It is therefore easy to imagine that more advanced or tweaked algorithms in the hands of government agencies could 'see' and derive more 'shadowy' information. With neural network storytelling, for example, a picture could literally be worth a thousand words, as the network can string together a hypothesis purely from observing its contents. So, for the picture of the dog outdoors mentioned above, the network could come up with an estimate of what is really happening:

For the rest of us, this extension offers a glimpse of how Facebook's computer vision algorithm works and interprets our pictures. Bear in mind that it only works with English language settings, so make sure your Facebook interface language is set to English before using it. Also, although it places an icon on your browser's toolbar, clicking on it does absolutely nothing. Instead, you just scroll down your timeline as you always have and watch the images being overlaid with their classifications. Summing up, Facebook's new feature is immensely useful to those who need it, quite fun for those who don't strictly need it, and potentially privacy-invading for all, whether they need it or not.

More Information

Show Facebook Computer Vision Tags Chrome Extension

Related Articles

Neural Networks for Storytelling

OpenFace - Face Recognition For All

Last Updated (Monday, 09 January 2017)