TJBot - Using Raspberry Pi With Watson

Written by Lucy Black

Sunday, 13 November 2016


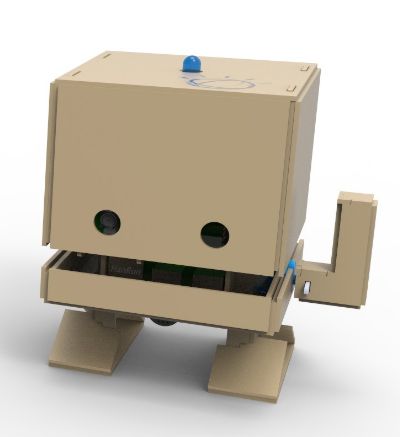

To get makers and tinkerers involved with its Watson services, IBM has come up with an open source project to create a cute cardboard or 3D-printed bot. It is named TJ after IBM's first ever chairman, Thomas J Watson, whose name is also used for IBM's AI-based services. TJBot is listed on the IBM Watson Maker Kits page on GitHub, where it is currently the only item, and is described as "an open source project designed to help you access Watson Services in a fun way". The project is essentially an enclosure for a Raspberry Pi. GitHub has downloadable files for a 3D-printed version and for a laser-cut template, and a third option, ordering one, is not yet available. TJ is the creation of Maryam Ashoori, IBM's "cool things" czar, and in a video we see her assembling a DIY kit consisting of a cardboard cutout, a Raspberry Pi and a variety of add-ons, including an RGB LED light, a microphone, a servo motor and cameras, to build TJBot.

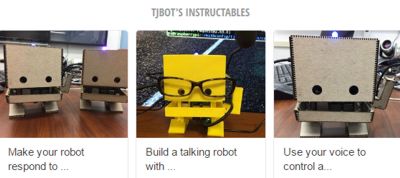

Once you have built TJ, what next? You use it to access Watson Services. To help you get started there are three supplied "recipes": the Node.js code is on GitHub and step-by-step instructions are on Instructables. These recipes only work on the Raspberry Pi, and you'll also need a Bluemix account. In the first, the idea is to make TJ respond to emotions, using the Watson Tone Analyzer and the Twitter API to control the color of the NeoPixel RGB LED based on public perception of a given keyword such as "happy". Next, try a listening and talking TJ. For this you need Watson Speech to Text to convert your voice to text, Watson Conversation to process the text and calculate a response, and Watson Text to Speech to speak the response back. The third recipe again uses Watson Speech to Text, this time to obey a spoken command to change the color of the LED.

So far TJ has attracted two community-contributed recipes from TJBot enthusiasts. SwiftyTJ controls the bot's LED from a Swift program, working around the fact that there is currently no support for the NeoPixel LED in Swift. TJWave uses Node.js to control TJ's arm via the embedded servo by parsing commands, and also states a response. Other suggestions for recipes are:
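At its core, the first recipe reduces to a small piece of logic: gather tone scores for tweets that mention the keyword, find the dominant emotion and pick an LED color for it. The sketch below illustrates only that aggregation step, in Python rather than the recipe's own Node.js. It assumes the Twitter and Tone Analyzer calls have already been made and each tweet's emotion scores extracted into a plain dictionary. The emotion names follow the five emotion tones the Tone Analyzer reported (anger, disgust, fear, joy, sadness), but the color table and function names are hypothetical and are not taken from the TJBot code.

```python
from collections import defaultdict

# Hypothetical color table: which color TJ's LED shows for each emotion.
# The colors are illustrative choices, not the values used by the TJBot recipe.
EMOTION_COLORS = {
    "anger": "#FF0000",    # red
    "disgust": "#00FF00",  # green
    "fear": "#FF00FF",     # magenta
    "joy": "#FFFF00",      # yellow
    "sadness": "#0000FF",  # blue
}


def dominant_emotion(tweet_tones: list[dict[str, float]]) -> str:
    """Return the emotion with the highest total score across all tweets.

    Each dict maps an emotion name to a score in [0, 1] for one tweet.
    Summing picks the same winner as averaging, because every tweet
    carries equal weight.
    """
    totals: dict[str, float] = defaultdict(float)
    for tones in tweet_tones:
        for emotion, score in tones.items():
            if emotion in EMOTION_COLORS:  # ignore tones we have no color for
                totals[emotion] += score
    if not totals:
        raise ValueError("no recognised emotion scores to aggregate")
    return max(totals, key=totals.get)


def led_color(tweet_tones: list[dict[str, float]]) -> str:
    """Map the dominant emotion to a hex color string for the LED."""
    return EMOTION_COLORS[dominant_emotion(tweet_tones)]


if __name__ == "__main__":
    sample = [
        {"anger": 0.1, "joy": 0.8, "sadness": 0.05},
        {"anger": 0.3, "joy": 0.6, "sadness": 0.2},
    ]
    print(led_color(sample))  # joy dominates, so this prints #FFFF00
```

Keeping the scoring separate from the API calls means the mapping can be tried out on a desktop with made-up scores before wiring it to the NeoPixel on the Pi.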

TJBot does sound like a lot of fun for exploring the new world of conversational and responsive bots (see What Makes A Bot?). And given that there are millions of Raspberry Pis in the wild looking for a new challenge, adding a cardboard or plastic body and learning about the Watson APIs certainly seems a cool project.

More Information

Related Articles

$200 Million Investment In IBM Watson

Thomas J Watson Sr, Father of IBM

Challenge To Create Conversational Apps


Last Updated (Sunday, 13 November 2016)