Google Glass API Full Details

Written by Mike James

Tuesday, 16 April 2013

With the shipping of the Google Glass Explorer kit to the lucky people who qualified, Google has released detailed specifications for the hardware and the full Mirror API.

One thing about Google Glass that is becoming tiresome is the range of Glass-related names being invented. What is a Glass app called? Glassware, of course. The Mirror API, as explained in our earlier news item Google Glass The Mirror API - How It Works, is a RESTful API that works within a fairly simple interaction framework. The user can navigate through a timeline of display cards by swiping and tapping on the side arm of the glasses. Using the API you can:

If you take a look at the API specification then you might be surprised at how simple it is, but you need to remember that Glass is just an I/O device. It is an innovative one, but its main actions are to display small chunks of information to the user and receive some very simple user gestures.

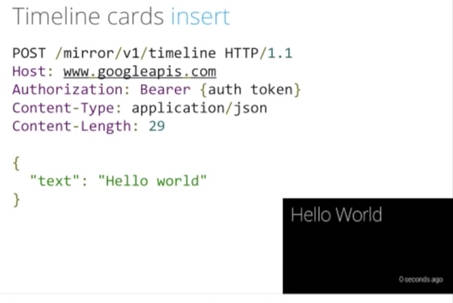

The layout of the Timeline cards doesn't use HTML directly. It uses a JSON encoding of a range of allowed items. For example, the text property, a string, sets the text to be displayed in the card. There are properties that allow you to take advantage of the advanced hardware. For example, speakableText adds a menu option that the user can tap to hear the string read aloud. For more complex layouts there is an html property that you can use to specify the HTML that determines the look of the card. What isn't clear at the moment is the range of HTML tags that you can use. The documentation suggests sticking with the supplied templates.

Also important are the technical details of the display:
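Returning to the card properties described above, here is a minimal Python sketch of what a timeline card looks like as JSON and how a request to insert it might be assembled. The property names text, speakableText and html come from the description above. The endpoint URL, the Bearer token header and the helper names `build_card` and `build_insert_request` are assumptions made for illustration, so check them against the Mirror API reference before relying on them. The request is only built, not sent.

```python
import json
import urllib.request

# Assumed endpoint for inserting timeline items; verify against the API reference.
TIMELINE_URL = "https://www.googleapis.com/mirror/v1/timeline"


def build_card(text, speakable_text=None, html=None):
    """Return a dict describing a timeline card, ready for JSON encoding."""
    card = {"text": text}
    if speakable_text is not None:
        # String that Glass can read aloud to the wearer.
        card["speakableText"] = speakable_text
    if html is not None:
        # Optional HTML for more complex layouts.
        card["html"] = html
    return card


def build_insert_request(card, access_token):
    """Build (but do not send) a POST request that would insert the card.

    The OAuth-style Bearer header is an assumption for this sketch.
    """
    body = json.dumps(card).encode("utf-8")
    return urllib.request.Request(
        TIMELINE_URL,
        data=body,
        headers={
            "Authorization": "Bearer " + access_token,
            "Content-Type": "application/json",
        },
        method="POST",
    )


card = build_card("Hello from Glassware", speakable_text="Hello from Glassware")
request = build_insert_request(card, access_token="PLACEHOLDER_TOKEN")
print(json.dumps(card, indent=2))
```

Passing `request` to `urllib.request.urlopen` with a real access token would be the step that actually talks to the service. Keeping the card as a plain dict until the last moment makes it easy to inspect or log the JSON before anything goes over the wire.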

You can also include attachments to the card in the form of photo, audio or video files. Cards can also be bundled into sets that the user can work with as a group.

There is also an online tutorial to get you started. It only covers using Java or Python at the moment, but REST isn't difficult in any language.

As you read through the reference material the main impression that you form is "simple". This is both good and bad. It is good that you can understand the API in a few minutes, but it does slightly dampen the enthusiasm: surely cutting-edge technology should have more going for it? The point is that Glass is just an I/O device and as such the interaction with it should be simple. What makes the system exciting is what you can build on the server side that delivers the information to the wearer and responds to requests in novel ways.

As well as the API we also have details of the hardware:

Display: High resolution display is the equivalent of a 25 inch high definition screen from eight feet away.

Camera

Audio

Connectivity

Storage

Battery: One full day of typical use. Some features, like Hangouts and video recording, are more battery intensive.

There is also an Android app that lets Glass use your phone as a communications and GPS device. It seems that Glass is better paired with an Android phone, and this should come as no surprise.

As well as the documentation, Google has also released some videos on creating Glassware. In this video Timothy provides guidance on developing for Glass:

Here Timothy explains how Contacts work in the Google Mirror API:

Next, Timothy explains how Menu Items work in the Google Mirror API:

And now he explains how Timeline Cards work in the Google Mirror API.

Finally, Timothy explains how Subscriptions work in the Google Mirror API.

Related Articles

Google Glass The Mirror API - How It Works
Google I/O 2013 Website Opens With Lots Of Fun
Opportunity To Become a Glass Explorer
Sight - A Short Movie About Future AR
Google Glass - The Microsoft Version
Google Glass - How it Could Be


Last Updated ( Tuesday, 16 April 2013 )