Code By Voice Faster Than Keyboard

Written by Ian Elliot

Sunday, 18 August 2013

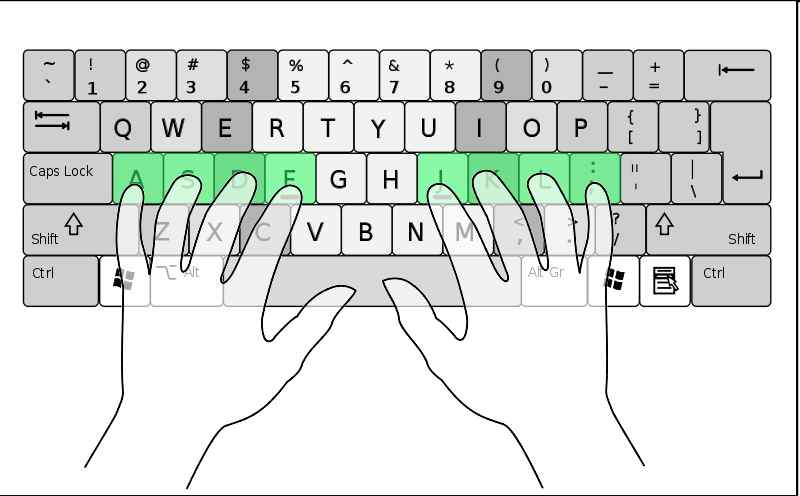

Is it possible that we have been wasting our time typing programs? Could voice recognition, with a little help from an invented spoken language, be the solution we didn't know we needed?

About two years ago Tavis Rudd developed a bad case of RSI caused by typing lots of code using Emacs. It was so severe that his hands went numb and he could no longer work. He tried all of the standard "conventional" solutions, such as different keyboards and generally paying attention to the ergonomics of his workstation, but nothing helped, and so he turned to voice recognition.

If you have tried voice recognition, especially if it was a few years ago, you probably think that the project is doomed to failure. Even if you tried voice recognition recently, and discovered it has got a lot better, you probably still think that talking your code isn't going to work.

This is where the creativity comes into the picture. The Dragon NaturallySpeaking system used by Rudd supported standard language quite well, but it wasn't adapted to program editing commands. The solution was to use a Python speech extension, Dragonfly, to program custom commands.

OK, so far so good, but ... the commands weren't quite what you might have expected. Instead of English words for commands he used short vocalizations - you have to hear it to believe it. Now programming sounds like a conversation with R2D2. The advantage is that it is faster and the recognition is easier - it also sounds very cool and very techie.

After a lot of practice (there are around 2000 commands) it is claimed that the system is faster than typing. So much so that it is still in use after the RSI cleared up.

It is now time to watch the video and see what you think. It has a fairly slow, but interesting, start, but if you want to cut to the action skip to about 9 minutes in.
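To make the idea of short vocalizations standing in for editing commands a little more concrete, here is a minimal sketch in plain Python. It is not Rudd's code and it does not use Dragonfly's API. The sounds in `COMMANDS` and the `expand` function are invented purely for illustration, and the sketch assumes the recognizer has already turned speech into a string of words.

```python
# Hypothetical sketch: plain Python, NOT Dragonfly and NOT Rudd's grammar.
# The vocalizations below are made up for illustration only.
COMMANDS = {
    "opa": "(",    # open parenthesis
    "clo": ")",    # close parenthesis
    "quo": '"',    # double quote
    "spay": " ",   # space
    "nel": "\n",   # new line
}


def expand(utterance: str) -> str:
    """Turn a string of recognized words into the text to be typed.

    Words found in COMMANDS are replaced by their symbol; any other
    word is passed through unchanged. Nothing is inserted between
    words, so spaces have to be spoken explicitly.
    """
    return "".join(COMMANDS.get(word, word) for word in utterance.split())


if __name__ == "__main__":
    print(expand("print opa quo hello quo clo"))
```

Speaking "print opa quo hello quo clo" would then produce `print("hello")`. A real system has to do much more, such as moving the cursor and driving the editor, but the core of the approach is a lookup table from short, easily recognized sounds to actions.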

To quote: "I hope to convince you that voice recognition is no longer a crutch for the disabled or limited to plain prose. It's now a highly effective tool that could benefit all programmers." I personally am not convinced but perhaps that is because I type faster than I talk. So many programmers never learn to type properly and even if you are a really good "hunt and peck" typist you are still only using two channels of a ten-channel output device. Of course, if RSI is an issue then the whole game changes and talking code looks like a big step in the right direction. Rudd says that he will release the code in a few months on GitHub.

More Information

Using Voice to Code Faster than Keyboard

Related Articles

Weak typing - the lost art of the keyboard


Last Updated ( Sunday, 18 August 2013 )