Predix IoT for Developers

Written by Nikos Vaggalis

Monday, 06 February 2017

We have seen businesses transform into software houses to withstand competition. To keep surviving in the near future they will also have to embrace IoT, as the industry responds to the ever-increasing presence of connected devices. In this quest to automate everything, smart and embedded devices will play an increasingly significant role in collecting data at its source and transmitting it to remote monitoring centers for further analysis and real-time automated decision making. A prime example is monitoring the state of a wind turbine and automatically shutting it down to avoid overheating.

In essence, then, IoT provides an ecosystem that consists of the hardware and the software running on the device, together with a remote management center, typically cloud based, that hosts the facilities needed to derive the crucial insights from the raw data received. Those insights form the basis of all further decision making.
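To make the wind turbine example concrete, here is a minimal sketch of the kind of automated decision a monitoring center might take. The `TurbineReading` type, the `decide_action` function and the 90 °C limit are all hypothetical names and values chosen for illustration; they are not part of Predix or of any real turbine specification.

```python
from dataclasses import dataclass

# Hypothetical limit for illustration; real limits are equipment-specific.
MAX_SAFE_TEMP_C = 90.0


@dataclass
class TurbineReading:
    """One temperature sample reported by a (hypothetical) turbine sensor."""
    turbine_id: str
    gearbox_temp_c: float


def decide_action(reading: TurbineReading, limit_c: float = MAX_SAFE_TEMP_C) -> str:
    """Return "shutdown" when the temperature reaches the limit, else "run"."""
    return "shutdown" if reading.gearbox_temp_c >= limit_c else "run"


if __name__ == "__main__":
    for sample in (TurbineReading("T-1", 72.5), TurbineReading("T-2", 95.0)):
        print(sample.turbine_id, decide_action(sample))
```

A production rule would normally look at trends and several sensors rather than a single threshold, but the shape is the same: raw data in, decision out.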

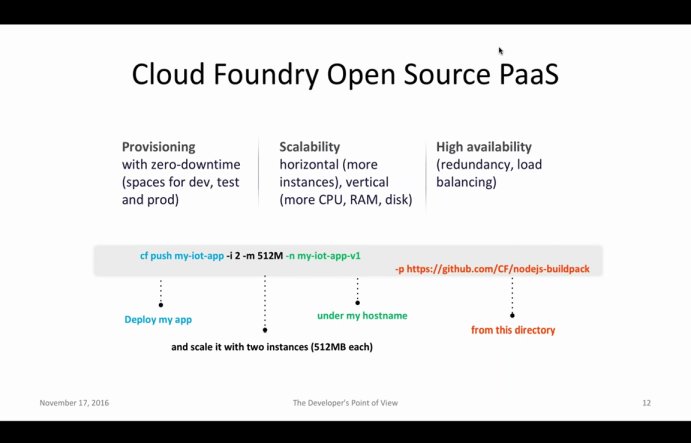

Another example is monitoring the condition of cargo in transit, for regulatory or quality assurance purposes. Sensors read the storage conditions, such as moisture, temperature, air quality, pressure and flow, and forward them to a remote service over 3G or WiFi internet connections for further analysis and business intelligence reporting. The system raises automatic alerts if monitoring detects that goods are deteriorating, something that is essential when pharmaceutical goods are involved.

Because IoT is a multidisciplinary science, drawing on fields including big data, AI, statistics, deep learning, embedded programming, web development and security, to name a few, the term "developer" is open to broad interpretation. In fact, it is an umbrella for all kinds of professions that find application in IoT, such as embedded systems programmers, electrical engineers, researchers, physicists, web developers and data scientists.

You can't expect everybody in this group to possess the know-how to create an application, especially when two thirds of the time is spent on things other than code. Setting up the hardware and software infrastructure such as web and application servers, maintaining, patching, allocating resources, scaling, and continuous delivery must all be in place before the code can be hosted and run. A cloud-based PaaS solution takes care of all those details so the developer doesn't have to, setting us free to focus on the stuff that really matters.

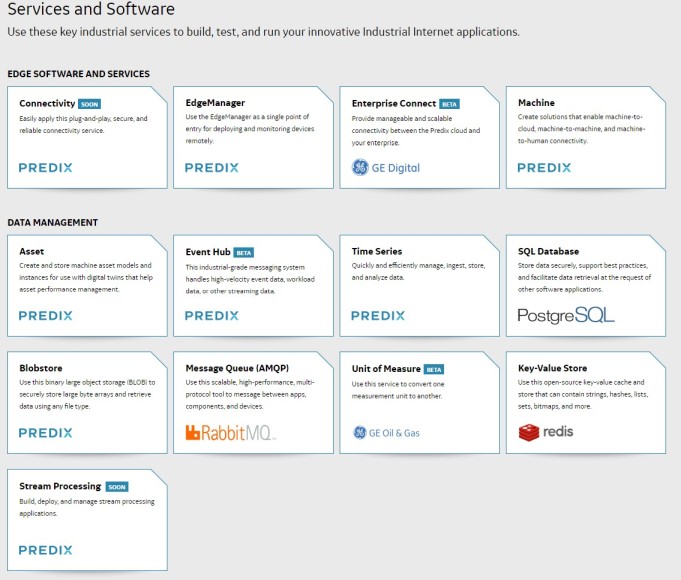

GE Predix is one such platform. It makes available a set of services that provide 'canned' and proven solutions to commonly encountered IoT problems, much as design patterns do for software engineering, and you can cherry-pick among them depending on the business rules in place. Data scientists, for example, don't need to fiddle with setting up monitoring nodes to collect the raw data, so they don't require the services for that part of the job. Instead they need the platform's analytics services, namely the Analytics Catalog, Analytics Runtime and Analytics User Interface, which take over from the data aggregation stage onwards. These services and components, although self-contained, can be strung together to build workflows, similar to pipelines, that shift and transform data from one place to the next in order to visualize it, analyze it further, or secure it with encryption and user authentication.
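The idea of stringing self-contained components into a pipeline can be sketched in a few lines. This is a generic illustration and not the Predix API: `build_pipeline` and the two stages are hypothetical names, and each stage simply takes the output of the previous one.

```python
from functools import reduce
from typing import Any, Callable, List, Optional


def drop_missing(readings: List[Optional[float]]) -> List[float]:
    """Stage 1: discard samples the sensor failed to report."""
    return [r for r in readings if r is not None]


def fahrenheit_to_celsius(readings: List[float]) -> List[float]:
    """Stage 2: transform the data into the unit the next consumer expects."""
    return [round((r - 32) * 5 / 9, 2) for r in readings]


def build_pipeline(*stages: Callable[[Any], Any]) -> Callable[[Any], Any]:
    """Chain stages so that each one receives the previous stage's output."""
    return lambda data: reduce(lambda acc, stage: stage(acc), stages, data)


if __name__ == "__main__":
    pipeline = build_pipeline(drop_missing, fahrenheit_to_celsius)
    print(pipeline([212.0, None, 32.0]))
```

Because each stage is independent, a data scientist could reuse only the later stages while someone else owns the collection side, which is the cherry-picking described above.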

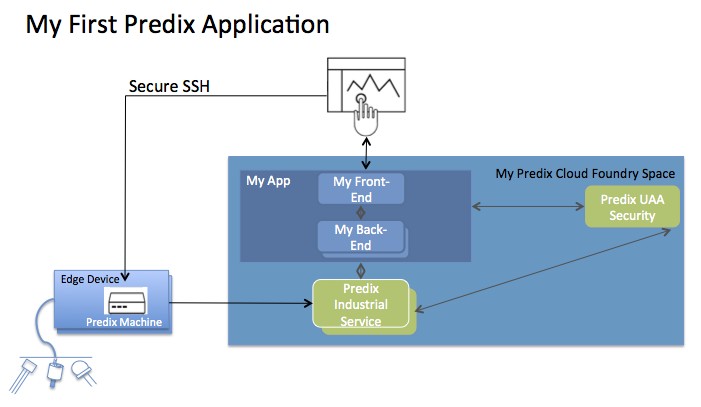

At the very basic level of developing a rudimentary application, you could start off with the Asset, Time Series, and User Account and Authentication services. The Asset service is the logical counterpart of a physical asset, i.e. a device or a collection of devices, and hence the heart of the system. Without it the user can't connect the various sensors to obtain information, run analytics to understand the health of the asset, or provide actionable recommendations. The Time Series service keeps track of the flow of data on the receiving end and can provide visual views such as histograms for any given period of time, which in turn can yield actionable insights. Finally, with the User Account and Authentication service you create a web front end for users that requires OAuth 2.0 token-based authentication.

On the actual hardware, you create an instance of a Predix Machine, in essence a Java container that is able to run on a variety of hardware and embedded systems. This acts as the interface that connects the device to the outside world: it collects the raw data from the device's sensors through common industrial protocols such as Modbus and OPC-UA and forwards it to the Time Series endpoint over a WebSocket connection. In Predix terminology, the data is received on a 'Data Node', from which an 'Adapter' collects it and sends it through further intermediate modules until it finally reaches the Predix Time Series endpoint securely and reliably.
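As a rough sketch of what the device side ends up sending, the following builds a JSON message for a batch of sensor samples, plus the headers that carry the OAuth 2.0 token. The field names (`messageId`, `body`, `name`, `datapoints`, `attributes`) and the `Predix-Zone-Id` header are assumptions about the ingestion format and should be checked against the Predix Time Series documentation. The function names and the tag name are hypothetical, and nothing is actually sent over the network here.

```python
import json
import uuid
from typing import Dict, List, Optional, Tuple


def build_ingestion_message(
    tag: str,
    samples: List[Tuple[int, float]],
    attributes: Optional[Dict[str, str]] = None,
    message_id: Optional[str] = None,
) -> str:
    """Serialize sensor samples as one JSON message.

    The field names used here are an assumption about the ingestion
    format, not something stated in the article.
    """
    message = {
        "messageId": message_id or uuid.uuid4().hex,
        "body": [
            {
                "name": tag,
                # Each datapoint is [epoch milliseconds, value].
                "datapoints": [[ts, value] for ts, value in samples],
                "attributes": attributes or {},
            }
        ],
    }
    return json.dumps(message)


def build_headers(access_token: str, zone_id: str) -> Dict[str, str]:
    """Headers for the WebSocket handshake; the header names are assumptions."""
    return {
        "Authorization": f"Bearer {access_token}",
        "Predix-Zone-Id": zone_id,
    }


if __name__ == "__main__":
    payload = build_ingestion_message(
        "turbine-1.gearbox-temp",  # hypothetical tag name
        [(1486339200000, 71.5), (1486339201000, 72.1)],
        attributes={"unit": "celsius"},
    )
    print(payload)
```

In a real deployment the Predix Machine modules handle this step; the sketch only shows the kind of data that travels from the Adapter to the Time Series endpoint.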

To get going as quickly as possible, Predix provides a template that you can adapt to your specific use case. With it you can set up a Predix Machine in a snap and start sending data from a Raspberry Pi or Intel Edison device to the cloud. Application templates are recommended when building enterprise-grade applications, and using them as starting points for your own industrial apps is as easy as cloning their repositories from GitHub. To sum up the steps it takes to set up a Predix Machine:

Predix is built on Cloud Foundry and its open source software infrastructure. The great thing about Cloud Foundry, as far as developers are concerned, is its Buildpacks, which make deployment a breeze. According to the Cloud Foundry documentation: "Buildpacks provide framework and runtime support for your applications. Buildpacks typically examine user-provided artifacts to determine what dependencies to download and how to configure applications to communicate with bound services." In other words, a buildpack takes your artifacts, detects their language environment and generates a container for deployment. The languages supported out of the box are Java, Node.js, .NET Core, PHP, Python, Ruby and Go, and there is also support for frameworks such as Grails, Java Main, Play Framework and Spring Boot.

To make it easier to start developing on the platform, Predix provides DevBox, a CentOS virtual machine with all the tools and the environment set up to start coding, including the Cloud Foundry CLI, the User Account and Authentication (UAA) CLI, Maven, Java, Ruby, Python, RabbitMQ and Predix Machine 16.x. There are many tutorials online to get you started but I would recommend commencing your exploration with:

Although IoT is still in its infancy, it already opens a window onto our connected future, partially or fully automated by smart devices, sensors or AI. To get started developing on Predix, you can register for a free account.

More Information: Predix Cloud


Last Updated ( Thursday, 23 February 2017 )