The Meaning of Life

Written by Mike James

Thursday, 20 February 2020


Implementing Life

What might surprise you, and would probably have surprised Conway, is the huge size of the constructions. If you take a look at the Turing machine example shown above, you will appreciate that we are talking about implementing simulations with thousands of cells on very large grids. This sort of large implementation is essential to push experimental life into new areas, but how do you make the algorithms run fast enough?

The simple and direct algorithm for implementing life seems to be the only one possible. For each generation you scan the entire grid, i.e. two nested loops, and for each cell you examine its eight neighbors and count how many are alive. From this number you can determine whether the center cell is alive or dead in the next generation. Notice that you can't just update the cell in place, because that would change its neighbors' neighbor counts. The simplest thing to do is to compute the neighbor counts for all the cells in another array and then perform a single update pass to get the next generation.

The direct algorithm can be implemented on parallel hardware and even on spreadsheets, but to simulate large grids we need to find something faster. So far the best approach has been Hashlife, which was also invented by Bill Gosper. This works by storing the life history of small clusters of cells over a number of generations. It is an example of memoization, i.e. trading computation for storage by keeping precomputed results in a table. The way that this works is that small regions of the grid are converted into a hash value, which is used to look up the pattern in a quadtree data structure. This stores the development of that pattern for a few time steps ahead. If the pattern isn't in the quadtree, it and its history are added using the standard algorithm.
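The direct two-pass algorithm can be sketched in a few lines of Python. This is a minimal sketch, assuming a finite grid stored as a list of lists of 0/1 values, with every cell beyond the edge treated as dead; the function names are hypothetical. The second function is not Hashlife, which uses Gosper's quadtree of macro cells and advances many generations at once. It only illustrates the memoization idea: the next state of the central 2x2 cells of each 4x4 block is cached with `functools.lru_cache`, keyed on the block's contents, so a block that has been seen before is looked up rather than recomputed.

```python
from functools import lru_cache


def count_neighbors(grid, r, c):
    """Count live cells among the eight neighbors of (r, c).

    Assumption: cells outside the finite grid are treated as dead.
    """
    rows, cols = len(grid), len(grid[0])
    total = 0
    for dr in (-1, 0, 1):
        for dc in (-1, 0, 1):
            if (dr or dc) and 0 <= r + dr < rows and 0 <= c + dc < cols:
                total += grid[r + dr][c + dc]
    return total


def step_direct(grid):
    """One generation of the direct algorithm: count everything first, then update."""
    rows, cols = len(grid), len(grid[0])
    # Pass 1: store every neighbor count in a separate array, so no cell
    # is overwritten before its neighbors have been counted.
    counts = [[count_neighbors(grid, r, c) for c in range(cols)] for r in range(rows)]
    # Pass 2: Conway's rule. A dead cell with 3 neighbors is born,
    # a live cell with 2 or 3 neighbors survives, everything else is dead.
    return [[1 if counts[r][c] == 3 or (grid[r][c] and counts[r][c] == 2) else 0
             for c in range(cols)] for r in range(rows)]


@lru_cache(maxsize=None)
def center_next(block):
    """Next state of the central 2x2 of a 4x4 block (16 cells, row-major tuple).

    Every neighbor of the four central cells lies inside the 4x4 block, so the
    result depends only on the block; lru_cache keeps each answer keyed on it.
    """
    rows = [block[i * 4:(i + 1) * 4] for i in range(4)]
    nxt = step_direct(rows)
    return (nxt[1][1], nxt[1][2], nxt[2][1], nxt[2][2])


def step_memo(grid):
    """The same generation, assembled from memoized 4x4 -> 2x2 lookups."""
    rows, cols = len(grid), len(grid[0])

    def cell(r, c):
        # Off-grid cells are dead, matching step_direct.
        return grid[r][c] if 0 <= r < rows and 0 <= c < cols else 0

    out = [[0] * cols for _ in range(rows)]
    for r0 in range(0, rows, 2):
        for c0 in range(0, cols, 2):
            # The 4x4 window whose central 2x2 is the output block at (r0, c0).
            block = tuple(cell(r, c) for r in range(r0 - 1, r0 + 3)
                          for c in range(c0 - 1, c0 + 3))
            for k, value in enumerate(center_next(block)):
                r, c = r0 + k // 2, c0 + k % 2
                if r < rows and c < cols:  # skip cells past an odd-sized edge
                    out[r][c] = value
    return out


# A horizontal blinker turns vertical after one generation.
blinker = [[0, 0, 0, 0, 0],
           [0, 0, 0, 0, 0],
           [0, 1, 1, 1, 0],
           [0, 0, 0, 0, 0],
           [0, 0, 0, 0, 0]]
print(step_direct(blinker) == step_memo(blinker))  # True
```

Both functions compute the same next generation; the saving in the memoized version comes only from repeated 4x4 blocks, which `center_next.cache_info()` reports as cache hits. On a grid full of distinct, high-entropy blocks the cache rarely hits, which is a small-scale version of the limitation of Hashlife mentioned below.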
The clever part is the way that the grid is divided up into "macro" cells such that the ...

There are lots of other optimizations that can be applied, but the program is much more complicated than the simple approach. The original Hashlife program advanced different areas of the grid to different generations and was really only suitable for computing an end configuration from a starting pattern. More modern programs such as Golly can show the development at each step. Hashlife isn't a complete cure when it comes to speeding things up, and for many high-entropy patterns it doesn't help at all. Modern life programs such as Golly let you switch on Hashlife or just use the standard algorithm.

Construction

If you look at many of the patterns that have been invented, you simply have to be amazed and wonder what sort of mind managed to put things together in this way. You might even conclude that it was random luck, as nothing so complex could be invented by the human mind. The truth is more interesting. What seems to be happening in life physics is that people build a kit of parts that do things which in themselves might not be useful. They then put these bigger components together to form working machines. For example, Gemini is a spaceship that moves in an oblique direction. It was invented by Andrew Wade in May 2010 by putting together three Chapman-Green construction arms set at the end of a number of active "tapes". This gives the pattern the appearance of a long diagonal line which, when examined more carefully, has two blob-like patterns at the end. The total pattern starts out with 846,278 live cells, so this is not a small configuration. As the pattern evolves through 33,699,586 generations, it slowly erodes the original blobs and builds up two new copies, and then the tapes slide across to complete the replication of the entire machine.

The starting configuration looks like a diagonal line ...

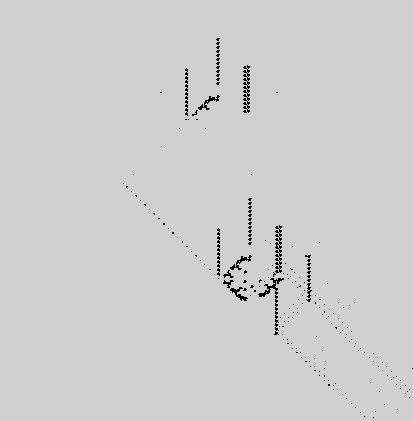

...but if you zoom in at the end you can see a new copy being made while the original is being erased,

Gemini is a complex self replicating machine and using it as a component no doubt you can move on and build even more complex "machines". It seems that life enthusiasts are climbing a ladder of complexity. Moving from the simple rules to ever more complex sub-units. These sub-units do particular jobs that seem well removed from the original form of the rule that makes life work. The whole thing mirrors what we have done in understanding the way that the world works with different types of rules applying at each level of the complexity ladder. Unfortunately we may have some sort of engineering based on larger units but we don't have anything like the physics to go with it. |

|||||

| Last Updated ( Thursday, 20 February 2020 ) |

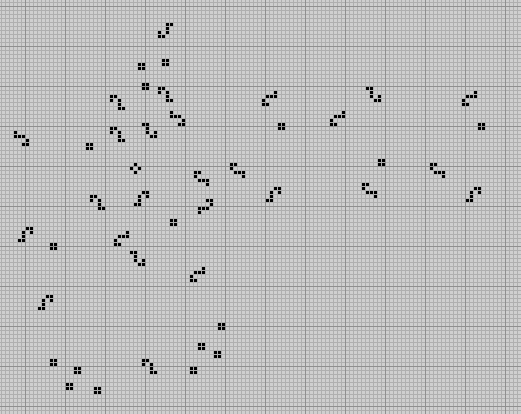

and if you zoom further in the incredible complexity of the pattern becomes clear - this is one of the "blobs" in the process of being erased.

and if you zoom further in the incredible complexity of the pattern becomes clear - this is one of the "blobs" in the process of being erased.