| Peer-to-Peer file sharing |

| Written by Administrator |

| Tuesday, 09 March 2010 |

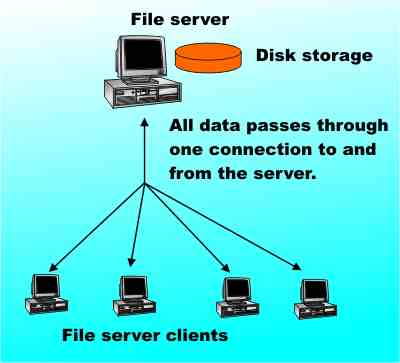

Peer-to-Peer (P2P) file sharing is both a technology and a legal, if not moral, battle. There are good technical reasons for wanting to build P2P systems, based on efficiency and on making the best use of networked resources. It is an important way of doing things, and IBM and many media companies offer P2P software that you can use. If you use a video "catchup" program over the net to see an episode of a series that you missed, the chances are that it uses P2P techniques to reduce the bandwidth needed, and as a result your machine is part of a P2P file sharing network. Similarly Skype, the Internet telephony system, uses a P2P approach, as do a number of instant messaging (chat) systems.

Apart from its technical advantages, P2P manages to slip through some loopholes in copyright law and, surprisingly, this has in turn influenced the technology. So what is it that makes P2P so special?

File sharing

The traditional method of sharing files, or any resource, via a network is to use a machine as a central server. That is, a single machine is dedicated to the task of storing files (a dedicated file server) and making them available to any valid clients. The server is responsible not only for looking after the files but also for checking that a client has permission to access them and then delivering them.

A typical example of a central file server is a web server, and it illustrates most of the advantages of the approach. Users know where to find the server and they can easily find out what is stored on it. The disadvantage is that each client that tries to download a file, or a web page in this case, adds an extra load on the server. In addition, all of the data has to flow to and from the server, and this creates a communications bottleneck. This might not be a problem when there are only a few clients, but as the number of simultaneous requests for web pages, or files in general, grows there comes a point where the server cannot cope.

Increasing the performance of a central server is a difficult task, and we say that the approach doesn't "scale" well. This is the potential situation for any net TV company. If it adopts the central server approach then it's only a matter of time before the connection between the server and the net becomes saturated. Such applications need a P2P architecture.
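The saturation argument can be put into rough numbers. The following is a minimal sketch, assuming an idealized model in which every client needs the same steady stream rate and, in the P2P case, every client can pass data on at a fixed upload rate. The function names and the figures in the usage example are assumptions made for illustration, not measurements from any real service.

```python
def max_clients_central(server_uplink_mbps: float, stream_mbps: float) -> int:
    """Number of clients one server can feed before its uplink saturates."""
    return int(server_uplink_mbps // stream_mbps)


def server_load_with_peers(clients: int, stream_mbps: float,
                           peer_upload_mbps: float) -> float:
    """Bandwidth (Mbps) the server must still supply when clients re-share data.

    Idealized assumption: all peer upload capacity is put to useful work.
    """
    total_demand = clients * stream_mbps
    supplied_by_peers = clients * peer_upload_mbps
    return max(0.0, total_demand - supplied_by_peers)


if __name__ == "__main__":
    # Hypothetical figures: a 1000 Mbps uplink and a 2 Mbps video stream.
    print(max_clients_central(1000, 2))
    # The same stream sent to 10,000 clients that each upload at 1.5 Mbps.
    print(server_load_with_peers(10_000, 2.0, 1.5))
```

In this model the central server's limit is fixed by its uplink however powerful the machine is, whereas the capacity contributed by peers grows with the number of clients, which is the sense in which the P2P approach scales.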

P2P

For a solution that scales well we have to look to P2P architectures that get the same job done. The basic idea of P2P is simple: do away with the central server and let all of the machines store files that the other machines want to access. In this way, when a client wants to download a file it doesn't always get it from the same machine, so different parts of the network can share the load of lots of clients trying to download files.

Of course, each client now has the task of finding the file and a suitable download location. This is the real problem that has to be solved by any P2P system and, strangely enough, it's the one that the law has something to say about.
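The two tasks a client faces, finding which machines hold a file and picking one to download from, can be sketched as a toy lookup. This is only an illustration: the names `PeerIndex`, `announce`, `locate` and `choose_source` are invented here, and the index is kept in a single in-memory object purely for simplicity. Where such an index should live in a real system is exactly the design question raised above.

```python
import random
from collections import defaultdict
from typing import Dict, List, Optional, Set


class PeerIndex:
    """Hypothetical table mapping file names to the peers that hold a copy."""

    def __init__(self) -> None:
        self._holders: Dict[str, Set[str]] = defaultdict(set)

    def announce(self, peer: str, filename: str) -> None:
        """Record that `peer` stores `filename` and is willing to share it."""
        self._holders[filename].add(peer)

    def locate(self, filename: str) -> List[str]:
        """Return every known holder of `filename`, in sorted order."""
        return sorted(self._holders.get(filename, ()))


def choose_source(index: PeerIndex, filename: str,
                  rng: random.Random) -> Optional[str]:
    """Pick one holder at random so that downloads spread across peers."""
    holders = index.locate(filename)
    if not holders:
        return None  # nobody is sharing this file
    return rng.choice(holders)


if __name__ == "__main__":
    index = PeerIndex()
    for peer in ("peer-a", "peer-b", "peer-c"):
        index.announce(peer, "episode.mp4")
    rng = random.Random()
    # Repeated requests are not all served by the same machine.
    print([choose_source(index, "episode.mp4", rng) for _ in range(5)])
```

Choosing a holder at random is the simplest way to spread load; a real client might instead prefer nearby or faster peers.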


| Last Updated ( Saturday, 30 October 2010 ) |