| The Best Sub-Array Problem |

| Written by Joe Celko | |||

Page 2 of 2

ANSWERS: The first assignment is done by realizing two bits of algebra:

where (b+1 <= n) and likewise:

where (a >= 1) Instead of re-computing sub-arrays over and over, save the results and re-use them. This reduces the complexity from O(n3) to O(n2).

But can we do better? This is a nice optimization but to go any further you really need to re-think your entire approach. There are a number of possible solutions based on the application of the divide and conquer technique, or even recursion, but the simplest and most direct approach to the problem is Kadane's algorithm. Let's see how this works and how you have to switch the way you think of the problem to invent it. The most obvious way to look for a faster solution is to see if you can find a way of decomposing the problem so that each step makes use of results from a previous step. This is an application of the dynamic programming approach. Suppose you already know the maximum sub-array that starts from each element of the array. For example, you know that the maximal sub-array starting at A[i] is A[i:m] and its sum is M for each element i of the array. Armed with this information you could find the global maximum sub-array by simply scanning the array once and finding the maximum of all the sub-arrays. That is, you would have an algorithm of order n, i.e. O(n). The problem is when you try to find the maximum sub-array starting at A[i] you have to scan all of the array elements to the right, i.e. i+1, i+2 and so on, to build up each sub-array starting at A[i]. This is clearly no better and there is no obvious way that you can use the result at A[i] to make the computation of A[i+1] any easier. So this approach doesn't work However, if you just shift your thinking a little, this approach can be rescued and the algorithm works. See if you can figure it out before reading on. Instead of finding the sub-arrays that start at A[i], focus instead on the sub-arrays that end at A[i]. It is obvious that the maximal sub-array ending at A[1] is A[1] itself, as there is only one candidate (assuming arrays start from 1). The next step is subtle and really clever. The maximal sub-array ending at A[2] has to be either the maximal sub-array ending at A[1] plus A[2] or it has to be just A[2]. You can see that this is true but can you prove it? In general the maximal sub-array ending at A[i] is:

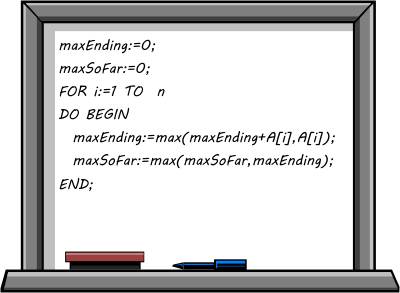

You can turn this into a recursion if you want to, but there is a very simple expression as a simple for loop. To simplify things let's just compute the size of the maximal sub-array and forget the indices that give where it is located:

At the end of the loop you have the value of the maximal sub-array in maxSoFar. It is fairly easy to add a couple of variables to keep track of the start and end of the maximal sub-array, but it makes the loop more difficult to follow. Notice that this computes the result you are looking for with one pass through the array, which makes the initial brute force algorithm look silly. Even so, who would have thought that a problem that seems to involve examining every sub-array could be reduced to an O(n) algorithm? If you still think that all this is easy and obvious, try the same task but in two dimensions - where the problem is known to have an O(n3) solution.

Joe Celko is best known as the database expert who writes books on SQL, data and databases. But before that, he was an honest developer obsessed with finding good algorithms and clean code. Other Articles In Sharpen Your Coding Skills If you've not already met experienced developer Melvin Frammis and his junior programmer sidekick, Bugsy Cottman you'll find they were initially introduced in the Elevator Puzzle, the first in Joe Celko's series of Sharpen Your Coding Skills and they have posed us further challenges ever since: Programmer Puzzle - Hermit Boxes Jigsaw Puzzles and The MacMahon Squares Vertex Coverings And The Cool Kids Problem Merkles and Social Engineering To be informed about new articles on I Programmer, sign up for our weekly newsletter, subscribe to the RSS feed and follow us on Twitter, Facebook or Linkedin.

Comments

or email your comment to: comments@i-programmer.info <ASIN:0465045669> <ASIN:0691143420> |