Getting started with Windows Phone 7

Written by Harry Fairhead

Friday, 20 August 2010

Page 2 of 3

How to test WP7?

WP 7 supports multi-touch, as do Windows 7 and Silverlight, but there is a small problem with trying it out. The WP 7 emulator also supports multi-touch, but only if you are running it on hardware that supports multi-touch. In practice this means a multi-touch monitor, and these are far from standard and comparatively expensive. In many ways a better option, if you want to test multi-touch applications, is to acquire a real WP 7 phone and test using the sort of device the application will run on.

As to how you actually support multi-touch, it helps to compare the situation with how you support drag-and-drop, say. Drag-and-drop, like a simple click, is something you have to support by creating the appropriate event handlers. For something simple like a click you implement a single event handler and respond to the click event by doing whatever the click is supposed to set in motion. For a more complex user interaction such as drag-and-drop you have to implement multiple event handlers - one for mouse button down, one perhaps for mouse move and one for mouse button up. These event handlers correspond to picking the object up, dragging it and dropping it.

Now think about multi-touch in the same terms. You clearly need to create event handlers that respond to events at two or more locations on the screen and manipulate the object concerned in an appropriate way. What this means is that multi-touch is inherently more complicated.

The good news is that multi-touch events that correspond to single-touch events are automatically converted to mouse events and can be handled in the usual way. Hence if the user taps on the screen a mouse click event is generated. This means that unless you really need to handle true multi-touch events you can more or less ignore the problem. If you do want to handle all multi-touch events yourself you can switch off the automatic conversion.

To handle multi-touch you need to look at the new Manipulation events: ManipulationStarted, ManipulationDelta and ManipulationCompleted.

You can think of these as being the multi-touch analogs of the MouseDown, MouseMove and MouseUp events. A multi-touch action always starts with a ManipulationStarted event, followed by a sequence of ManipulationDelta events and a final ManipulationCompleted event. The details of each event are passed via its own event argument object and include the object that was the source of the event and the location.

The key to understanding how this all works is to realise that even a multi-touch event generally only involves a single object. For example, if you touch a single object - an image, say - with a single finger then a single ManipulationStarted event is generated, and if you move the finger a set of ManipulationDelta events is generated until you remove the finger and a ManipulationCompleted event occurs. If you touch another object then the current manipulation ends and a new ManipulationStarted is generated. If you touch the same object with another finger then the manipulation isn't terminated, and ManipulationDelta events are generated as the fingers move, with the location being the average position of all of the fingers.

You might think that this isn't of much use, but there is another event argument that makes a difference. Let's suppose that an object is touched by two fingers that move position on the screen and move apart. The Translation property gives the average movement of the two fingers, but the Scale property indicates how far they have moved apart. The ManipulationDelta events give the incremental translation and scale values so that you can show the object being manipulated in intermediate states. The ManipulationCompleted event provides the cumulative translation and scale so that you can determine a final position and scale.

You should now be able to see how you could implement a two-finger drag-and-scale quite easily. You might also have noticed that there appears to be no rotation parameter.
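To make the event flow just described a little more concrete, here is an untested outline of how the handlers might fit together. It is a sketch under assumptions rather than a verified example: the element name image1 and the namespace are hypothetical, the event argument property names (DeltaManipulation, TotalManipulation) are those of the released Silverlight for Windows Phone libraries and may not match the beta tools exactly, and the guard on the scale values assumes that Scale is reported as a per-event multiplier that is zero when only one finger is down.

```csharp
using System.Diagnostics;
using System.Windows.Input;
using System.Windows.Media;
using Microsoft.Phone.Controls;

namespace ManipulationSketch   // hypothetical project name
{
    public partial class MainPage : PhoneApplicationPage
    {
        // Scale is applied first, then the translation, so that a drag
        // is not multiplied by the current zoom factor.
        private readonly ScaleTransform scale = new ScaleTransform();
        private readonly TranslateTransform move = new TranslateTransform();

        public MainPage()
        {
            InitializeComponent();

            var group = new TransformGroup();
            group.Children.Add(scale);
            group.Children.Add(move);

            // "image1" is a hypothetical Image declared in MainPage.xaml.
            image1.RenderTransform = group;
            image1.ManipulationDelta += OnManipulationDelta;
            image1.ManipulationCompleted += OnManipulationCompleted;
        }

        private void OnManipulationDelta(object sender, ManipulationDeltaEventArgs e)
        {
            // Incremental movement of the average finger position.
            move.X += e.DeltaManipulation.Translation.X;
            move.Y += e.DeltaManipulation.Translation.Y;

            // Assumption: Scale is a per-event multiplier and is zero
            // (not meaningful) while only one finger is touching.
            if (e.DeltaManipulation.Scale.X > 0 && e.DeltaManipulation.Scale.Y > 0)
            {
                scale.ScaleX *= e.DeltaManipulation.Scale.X;
                scale.ScaleY *= e.DeltaManipulation.Scale.Y;
            }

            e.Handled = true;
        }

        private void OnManipulationCompleted(object sender, ManipulationCompletedEventArgs e)
        {
            // TotalManipulation carries the cumulative translation and scale.
            Debug.WriteLine(string.Format("Moved by {0}, scaled by {1}",
                e.TotalManipulation.Translation, e.TotalManipulation.Scale));
        }
    }
}
```

Keeping the translation and the scale in two separate transforms means each ManipulationDelta event only has to add or multiply one small increment, which is exactly what the incremental values are intended for.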
At the moment you can't use Manipulation events to implement a translate, scale and rotate operation. If you want to do this then you have to work at the next level down, with events that give you the position of individual fingers - this is not as easy a task. Rotation will be supported in future releases of the system.

At this point you are probably waiting for an example of a two-finger drag-and-scale. Unfortunately I don't have access to a touch screen device that works with the emulator and so I can't provide a tested one. Even if I could, the chances are that you don't have the hardware to try it out at the moment - so it's something that we will have to return to when enough WP 7 devices are available to make it a useful exercise. In addition, the latest (July) release of the development tools still has some problems with its multi-touch implementation.

What to do in the interim? Unless multi-touch is in some way central to your application, your best course of action is to ignore it for the moment. Basic multi-touch support is provided by the operating system, and it is best to concentrate on building the standard UI and behaviours of your application and retrofit any multi-touch support you need later. After all, how important is drag-and-drop in most of your desktop applications?
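For completeness, the lower-level route mentioned above, and the switch that turns off the conversion of touches into mouse events, both go through Silverlight's Touch.FrameReported event. The following is again an untested sketch and not a recommendation: the namespace and the AngleInDegrees helper are hypothetical, and working out an angle between the first two touch points is just one simple way in which a rotation might be derived.

```csharp
using System;
using System.Diagnostics;
using System.Windows;
using System.Windows.Input;
using Microsoft.Phone.Controls;

namespace TouchSketch   // hypothetical project name
{
    public partial class MainPage : PhoneApplicationPage
    {
        public MainPage()
        {
            InitializeComponent();

            // Application-wide event that reports every active touch point.
            Touch.FrameReported += OnFrameReported;
        }

        private void OnFrameReported(object sender, TouchFrameEventArgs e)
        {
            // Stop this touch sequence from also being promoted to mouse
            // events. The call is only valid while the primary touch point
            // is going down.
            TouchPoint primary = e.GetPrimaryTouchPoint(this);
            if (primary != null && primary.Action == TouchAction.Down)
            {
                e.SuspendMousePromotionUntilTouchUp();
            }

            // Positions of all the fingers, relative to this page.
            TouchPointCollection points = e.GetTouchPoints(this);
            if (points.Count >= 2)
            {
                double angle = AngleInDegrees(points[0].Position, points[1].Position);
                Debug.WriteLine("Angle between fingers: " + angle);
            }
        }

        // Hypothetical helper: angle of the line from a to b, measured
        // from the positive x axis, in degrees.
        private static double AngleInDegrees(Point a, Point b)
        {
            return Math.Atan2(b.Y - a.Y, b.X - a.X) * 180.0 / Math.PI;
        }
    }
}
```

Comparing the angle from one frame to the next would give a rotation increment, but you also have to track which finger is which as touches come and go, which is why this is not as easy a task as using the Manipulation events.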

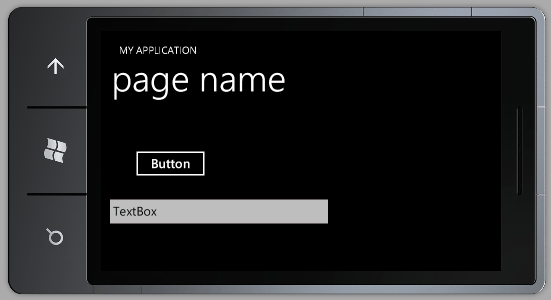

Screen Rotation

To return to something that we can try out: how do you support rotation? The answer is remarkably simple, as the framework automatically supports rotated layouts. All you have to do is state that it is OK for the system to rotate your application. You can do this by setting a property in the page constructor, public MainPage(). You can also set the property using XAML via the Properties window. With this small change we can run the application again, and if we rotate the emulator using its control menu the application is laid out again in the new orientation - what could be easier?
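As a minimal sketch of the constructor change described above, assuming the SupportedOrientations property and the SupportedPageOrientation enumeration as they appear in the released Windows Phone libraries (the member names may differ in the beta tools, and the namespace is hypothetical):

```csharp
using Microsoft.Phone.Controls;

namespace RotationSketch   // hypothetical project name
{
    public partial class MainPage : PhoneApplicationPage
    {
        public MainPage()
        {
            InitializeComponent();

            // Tell the system it may lay the page out in either orientation.
            // The XAML equivalent is the attribute
            //   SupportedOrientations="PortraitOrLandscape"
            // on the page's root element.
            SupportedOrientations = SupportedPageOrientation.PortraitOrLandscape;
        }
    }
}
```

Setting the value in XAML instead keeps the code-behind untouched, so it is probably the tidier choice when the setting never changes at run time.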


Last Updated (Monday, 20 September 2010)