| Universal Hashing |

| Written by Mike James | |||

| Friday, 27 January 2023 | |||

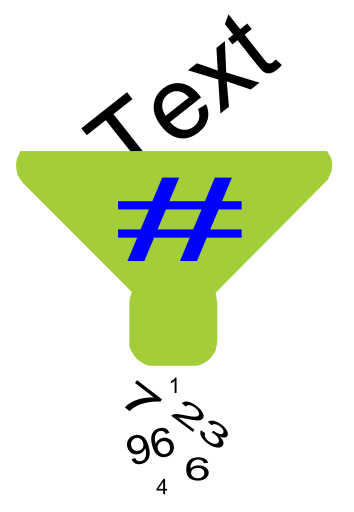

Page 1 of 2 Hashing is a fun idea that has lots of unexpected uses. Here we look at a novel type of hash function that makes it easy to create a family of universal hash functions. The method is based on a random binary matrix and is very simple to implement. Put simply you give a hash function an item of data x and it returns a number h(x).

You can use this number for all sorts of things but in general a hash function has a number of desirable properties.

Typically hash functions are used for storage, store x in Data[h(x)], or for security, if h(x) changes then x has been changed. If you are using a hash function for security then you need a higher grade of hash function - a cryptographic hash. The hash function described in the example can be extended to a cryptographic hash but in its current form it is suitable for use as a storage hash. Notice that as a hash function "condenses" down an item of data to a smaller number of possible hash values it is not unique. That is, for any hash function there will be usually quite a lot of other data values, y say, for which h(y)=h(x). This is often called a collision and it is perfectly natural for a hash function and all hashing algorithms have to deal with the situation in one way or another. In this article the focus is on hash functions used for data storage and retrieval. Families of hash functionsIn the early days of hashing you generally just needed a single good hash function. Today things are getting increasingly complex and you often need whole families of hash functions. Once such family is the Universal Hash. This is a set of hash functions with an interesting additional property. First we need to look at the problem that this additional property is designed to solve. Consider a hash storage scheme based on storing x in a location given by h(x) which ranges from 0 to N-1. What is the worse thing that can happen? The answer is that you are given N things to store that all map to the same hash value - i.e. you try and store everything in the same location and every access involves a collision. You can prove quite easily that for any hash function it is possible to find a dataset that provides this worst case. For example if the number of things you could ask to store is greater than N*N then if you attempt to store N*N data elements in the array with only N storage locations it is obvious that each location will store N data elements i.e. there are going to be N collisions. Just pick the data mapped to the same location and you have your worst case dataset. It is a simple consequence of mapping a big set to a small number of locations - there have to be collisions - and you can find any number just by filling the storage and keeping going. So can you protect your hashing scheme against this attack? Yes you can by having a family of hashing functions H that you randomly select from before starting the algorithm. In this case any worst case set of data that some one has selected has only a probability of being worst case for the hash function selected. If the family of hash functions is such that given x and y then the probability that h(x)=h(y) for a hash function drawn randomly from m possible hash functions is 1/m then the family is called a universal hash family. Universal hash families are particularly useful for algorithms that need multiple hash functions or which need the data structure to be rebuilt if too many collisions occur (look out for Cuckoo hashing coming soon). So we need an example of a universal hash family. There are standard examples of universal hash functions created using the usual "multiplication mod a prime" - i.e. similar to congruential generators. However, there is a little known method based on using a random matrix. It has lots of advantages - it's a universal family, it performs well, it's easy to understand and it's quick to compute. The idea is very simple. Suppose you have an input data item that you have input data with m bits and you want a hash function that produces n bits then first generate a random nxm binary matrix M. The hash function simply consists of working out

where you consider x to be a binary vector. For example, suppose you have a four bit input value and want to generate a three bit hash value. Then generating a random matrix gives say:

and if the data value was 1011 the hash value would be computed as:

or in other word h(1011)=M(1011)=010. If you find the math difficult to follow it might help to be reminded that in binary (or mod 2) arithmetic 1x1=1, 0x0=0 and 1x0=0, also 0+0=0, 0+1=1 and 1+1=0. There are a number of other ways to look at the way the arithmetic is done that suggest different ways of implementing the algorithm. The first is to notice that what you are doing is anding each row with the data column vector. That is taking the second row as an example:

and then you add up the bits in the result:

Adding up the bits in the result can also be interpreted as the parity function because the result is zero if there are an even number of ones and one if there are an odd number of ones. Notice also that this involves m Ands and m parity determinations. The second way of looking at the arithmetic is to notice that the multiplication picks out the columns of M corresponding to where the data has a one and then does a bitwise addition or exclusive or. For example:

picks out the first, third and fourth columns of M and adds or exclusive ors them together:

Notice that this might involve as many as m columns and probably m iterations to form the result. As m>n the first method is worth looking at more closely. There may be other ways to interpret the arithmetic as logical operations but these two are the most useful. Which is best depends on the hardware available. For example, if the machine you are working with has a fast way to determine the parity of a word then the first method should work well. An implementationTo demonstrate how the idea works let's create a small C# class that implements both approaches to the random binary matrix hash. The code is easy enough to convert into any other language. First we need to create the random matrix. Instead of working with a bit array it makes more sense to use an Int32 for each row of the array and assume that the input data is an int32. For simplicity we can use Int32 and just use the lower 31 bits to give a positive number range for the data. So start a new C# project and add a new class complete with random number generator and Int32 array ready to hold the rows of the bit matrix:

The constructor creates the random matrix with m rows and stores m for other methods to make use of:

Once the constructor is finished we can use it to create a hash object capable of taking a positive Int32 and returning an m bit hash. The next step is to create the method that does this job:

The method takes each row of the matrix and ands it with the data. The result is then converted by the parity function into a 0, for even parity or a 1, for odd parity. Don't worry how parity works for the moment. At the end of the routine we have an m bit hash to return. If you want to write the method a little more compactly you could write the body of the for loop as:

Now to try it out all you have to do is:

and you have an 8-bit hash for any positive Int32 you care to supply. |

|||

| Last Updated ( Friday, 27 January 2023 ) |