Deep Learning Finds Your Photos

Written by Mike James

Monday, 27 May 2013

It is amazing that some of the most advanced AI on the planet is being rolled out as yet another way to entice you to use Google+. Now you can search for untagged photos that have particular objects - like a car - in them.

If you took Geoffrey Hinton's Neural Network course last year, you will remember the example used to introduce convolutional neural networks for object recognition. The ImageNet Large Scale Visual Recognition Challenge 2012 provided an opportunity for different AI techniques to be used to recognize objects in a big library of photos. All the program had to do was correctly classify the photo into one of 1000 categories and put a bounding box around the object named in the category.

The big surprise at the time was that the best performing technique was a big deep neural network, called SuperVision, built by Alex Krizhevsky, Ilya Sutskever and Geoffrey Hinton, based on the sort of convolutional network pioneered by Yann LeCun.

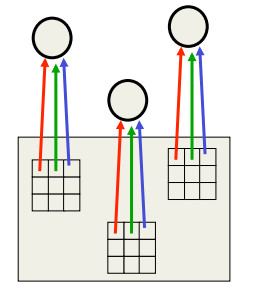

A convolutional network is the same network applied at different locations within the image

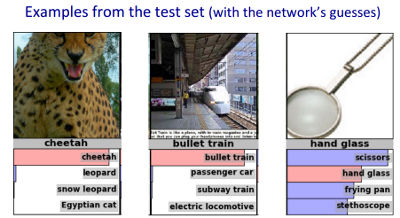

A convolutional network is like any neural net, but it is designed to take account of the fact that the object can be anywhere in the photo. The way that this works is that the same small network is replicated at every point in the image, so that it has the chance to react in the same way to the object no matter where it is in the photo.

SuperVision is described by its creators as:

"Our model is a large, deep convolutional neural network trained on raw RGB pixel values. The neural network, which has 60 million parameters and 650,000 neurons, consists of five convolutional layers, some of which are followed by max-pooling layers, and three globally-connected layers with a final 1000-way softmax. It was trained on two NVIDIA GPUs for about a week. To make training faster, we used non-saturating neurons and a very efficient GPU implementation of convolutional nets."

What was interesting is that even when the network got it wrong, you could see why it got it wrong, and its second guesses were also generally reasonable.
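To make the idea of "the same network replicated at every point" concrete, here is a minimal sketch in plain Python. It is not SuperVision's code: the 6x6 toy image, the 2x2 kernel and all the function names are assumptions made up for illustration, and the rectifier used here is just one common example of a non-saturating neuron. It shows one shared set of weights being slid over an image, so that moving the object simply moves the response, and a max-pooling step that tolerates a small shift.

```python
# Illustrative sketch only; the image, kernel and names are hypothetical.

def conv2d(image, kernel):
    """Apply one shared kernel at every valid position of the image."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(kernel[i][j] * image[r + i][c + j]
                for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]

def relu(fmap):
    """Rectifier: a simple non-saturating activation."""
    return [[max(0.0, v) for v in row] for row in fmap]

def max_pool(fmap, size=2):
    """Non-overlapping max pooling; leftover edge rows/columns are dropped."""
    return [
        [
            max(fmap[r + i][c + j] for i in range(size) for j in range(size))
            for c in range(0, len(fmap[0]) - size + 1, size)
        ]
        for r in range(0, len(fmap) - size + 1, size)
    ]

def place_object(top, left, size=6):
    """Toy image: a 2x2 bright 'object' on a dark background."""
    img = [[0.0] * size for _ in range(size)]
    for i in range(2):
        for j in range(2):
            img[top + i][left + j] = 1.0
    return img

def argmax2d(fmap):
    """Return (row, col) of the largest value in a 2D map."""
    best = max((v, r, c) for r, row in enumerate(fmap) for c, v in enumerate(row))
    return best[1], best[2]

KERNEL = [[1.0, 1.0], [1.0, 1.0]]  # hypothetical detector for the 2x2 object

# Same weights, object in two different places: the peak response moves with it.
response_a = relu(conv2d(place_object(0, 0), KERNEL))
response_b = relu(conv2d(place_object(3, 2), KERNEL))
peak_a = argmax2d(response_a)
peak_b = argmax2d(response_b)

# After pooling, a one-pixel shift of the object lands in the same pooled cell.
pooled_a = max_pool(response_a)
pooled_shifted = max_pool(relu(conv2d(place_object(1, 1), KERNEL)))

print(peak_a, peak_b)
print(argmax2d(pooled_a), argmax2d(pooled_shifted))
```

The detector's peak response follows the object from one corner to another without any retraining, which is the point of sharing the weights across locations. A real network stacks many such layers with learned kernels rather than a hand-written one.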

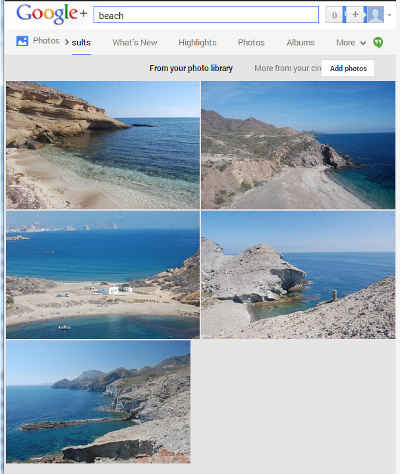
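The "second guesses" come from the final softmax layer, which turns the network's raw output scores into a probability for every category, so the categories can be ranked. The following is a small sketch of that last step only; the labels and scores are invented for illustration and are not taken from SuperVision's results.

```python
import math

def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def top_k(labels, scores, k=5):
    """Return the k most probable (label, probability) pairs, best first."""
    probs = softmax(scores)
    ranked = sorted(zip(labels, probs), key=lambda pair: pair[1], reverse=True)
    return ranked[:k]

# Hypothetical categories and made-up raw outputs for one photo.
LABELS = ["leopard", "jaguar", "cheetah", "car", "flowers"]
SCORES = [2.1, 2.0, 1.2, -1.0, -0.5]

guesses = top_k(LABELS, SCORES, k=3)
for label, prob in guesses:
    print(f"{label}: {prob:.2f}")
```

With scores like these, the runners-up are visually similar categories, which is why a wrong first answer can still look like a sensible mistake.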

Impressive, but just another AI experiment working towards something practical, maybe years down the road. However, if you go to your Google+ photos today and try a search for "car", "flowers", "sea" or "beach", you might be surprised that you get a reasonable set of results - despite not having tagged any of your photos in a way that could help the search. What is more, even the failures have a reasonableness that might bring a smile to your face.

Could this be a deep convolutional network? Well, we reported back in March that Hinton, Krizhevsky and Sutskever had been recruited by Google - so I'd be very surprised if it wasn't. But all Google wants to talk about is the fact that you need to be a Google+ user to try out this futuristic technology. What the Google Search Blog does say is:

"Starting today, you’ll be able to find your photos more easily and connect with the friends, places and events in your Google+ photos. For example, now you can search for your friend’s wedding photos or pictures from a concert you attended recently. To make computers do the hard work for you, we’ve also begun using computer vision and machine learning to help recognize more general concepts in your photos such as sunsets, food and flowers."

It works quite well! And when it fails you can forgive it, because you can see that what it has offered could be confused with what you were looking for - search for a river and you might be offered a lake or a dry river bed, and both seem reasonable.

This is the first time that a neural network has been used in a mass market application, and for AI to be recognizing objects in photos in a useful way is a big breakthrough - surely Google could make a bit more of a fuss about the technology than it is doing at the moment!

More Information

Finding your photos more easily with Google Search

Neural Networks For Machine Learning

Related Articles

Deep Learning Researchers To Work For Google

Google's Deep Learning - Speech Recognition

A Neural Network Learns What A Face Is

Speech Recognition Breakthrough


Last Updated: Tuesday, 08 December 2020