WebKit JavaScript Goes Faster Thanks To LLVM

Written by Ian Elliot

Friday, 16 May 2014

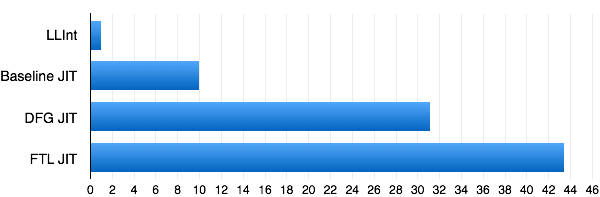

The efforts to make JavaScript faster have now spread to WebKit and, of course, to Apple's Safari browser. The way this has been achieved is very clever. WebKit uses a JIT compiler that applies three stages of optimization. The first stage is a standard interpreter that makes sure the JavaScript code starts running without delay. It may not run as fast as possible, but it has low latency, which is usually what users want. If code is called more than six times or loops more than 100 times, it moves on to the next stage and is JIT compiled without much optimization. If it is called more than 60 times or loops more than 1000 times, an optimizer is applied. The optimized code runs about 3 times faster than the unoptimized JIT output and about 30 times faster than the interpreter.
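To make the tier-up rule concrete, here is a minimal Python sketch of the decision as described above. It is only an illustration, not WebKit's code: the names `FunctionProfile` and `select_tier` are hypothetical, the thresholds are the ones quoted in this article, and the real engine's heuristics are more involved.

```python
from dataclasses import dataclass

# Thresholds as quoted in the article (assumed here to be strict "more than").
BASELINE_CALLS, BASELINE_LOOPS = 6, 100
DFG_CALLS, DFG_LOOPS = 60, 1000


@dataclass
class FunctionProfile:
    """Hypothetical per-function execution counters."""
    calls: int = 0
    loop_iterations: int = 0


def select_tier(profile: FunctionProfile) -> str:
    """Return the execution tier a function would run in under this simple rule."""
    # Check the hottest tier first so very hot code is not stopped at the baseline.
    if profile.calls > DFG_CALLS or profile.loop_iterations > DFG_LOOPS:
        return "DFG JIT"
    if profile.calls > BASELINE_CALLS or profile.loop_iterations > BASELINE_LOOPS:
        return "Baseline JIT"
    # Cold code stays in the interpreter, which starts quickly.
    return "LLInt"
```

For example, a function that has been called twice but has looped 150 times would be placed in the baseline tier under this rule, while a function called once with no loops stays in the interpreter.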

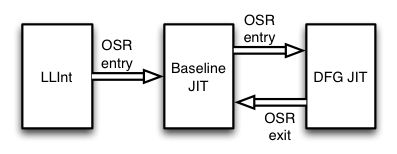

LLInt = Low Level Interpreter, Baseline JIT = unoptimized, DFG JIT = Data Flow Graph JIT, OSR = On Stack Replacement.

The optimizer was good, but it didn't do the sort of job that a C or C++ optimizer does in optimizing register layouts and so on. Instead of implementing its own fourth stage, the WebKit team decided to use the existing LLVM code optimization layer. The LLVM fourth stage is called FTL, which is clever because most people think "Faster Than Light" and not "Fourth Tier LLVM". The results are impressive: about 40 times faster than the interpreter and 3 times faster than the original optimized JIT.

You can read more about how the optimizer works, and how the time taken by the LLVM backend was reduced, in the original blog post (see More Information). FTL is currently in WebKit nightly builds and you can expect to see it in release versions soon.

Of course, Google and Mozilla aren't going to let their JavaScript engines be outdone. It is worth recalling that while Chrome used to be a WebKit-based browser, it recently split from the project and is building its own rendering engine, Blink. It also uses the V8 JavaScript engine rather than WebKit's. In many ways this is a case of WebKit catching up with Chrome and Firefox. Could Chrome and Firefox get similar improvements by using the state-of-the-art LLVM backend optimizer?

Firefox also has its own particular approach to making JavaScript fast: optimizing a subset of JavaScript, asm.js, which is annotated and restricted to make optimization easier and more effective. It looks as if it is still possible to squeeze more performance out of JavaScript.

More Information

Introducing the WebKit FTL JIT

Related Articles

The Astonishing Progress Of Blink

Chrome V8 JavaScript - Ever Faster

Webkit.js - Who Needs A Browser?

Mozilla Enhances Browser-Based Gaming



Last Updated ( Friday, 16 May 2014 )