Kinect SDK 1.7

Written by Harry Fairhead

Monday, 18 March 2013

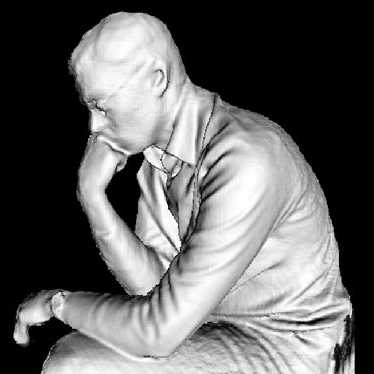

The latest revision of the Kinect SDK brings a number of important high-level features and a few surprising lower-level innovations. You might think that there isn't much scope for the Kinect SDK to develop after so many revisions, but version 1.7 brings with it some amazing possibilities.

The feature we have all been waiting for is Kinect Fusion, the 3D scanner and model creation software. As long as you have a machine with a supported GPU, you can now use the Kinect to scan large objects interactively. Lower-performance hardware can still be used, but not interactively. Basically, you can "paint" a scene by moving the Kinect as if it were a light illuminating the 3D scene area by area.

There are a number of sample programs provided, and these could be used as they are to create 3D models. The really exciting part is that you also get access to the underlying processing. Libraries are provided for both managed and C++ development, and you can use them to create new applications that make use of the Fusion algorithm to scan objects and work with the complete or partial wire frame models.

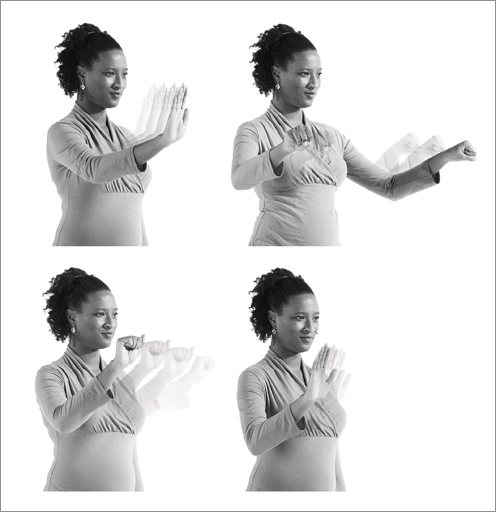

Although Kinect Fusion was the most anticipated feature of the SDK, the new Interactions framework might just be the one that pushes the Kinect into more application areas. It is a pre-packaged set of components that allows an extended range of gestures to be used without having to write the code that recognizes them. The standardized gestures include push-to-press buttons and grip-to-pan and move.

The idea is that the user stands 1.5 to 2 meters from the sensor, which is mounted above or below the screen. The user can then interact with the application using simple hand gestures. Up to two users can be tracked at the same time. A sample gives a good idea of the sorts of things this can be used for. There is a native code API that can be used from C++, but the WPF-based controls seem like the much easier option.

There are also several new samples. As well as demonstrating the use of Kinect Fusion and Interaction, they show how to use MATLAB and OpenCV via Kinect Bridge to create image processing applications. Being able to mix the Kinect sensor with the state-of-the-art AI and image processing algorithms found in MATLAB libraries and in OpenCV should produce another round of innovations for us to enjoy.

One notable experimental feature that hasn't made it into the SDK is the recent Kinect web browser integration. Perhaps we will see it in the next update. With SDK 1.7 the Kinect development environment has taken a significant step from low-level interface to higher-level framework.

Related Articles: Microsoft Not Open Sourcing Kinect Code; Kinect Can Detect Clenched Fist; PrimeSense Imagines A 3D Sensor World; Kinect Fusion Coming to the SDK; Kinected Browser - Kinect On The Web


Last Updated (Monday, 18 March 2013)