| LEAP Skeletal Tracking API |

| Written by David Conrad |

| Monday, 02 June 2014 |


The LEAP motion controller generated a lot of excitement until it actually came to market, at which point the enthusiasm seemed to dissipate with no real explanation other than that it didn't quite live up to its promise. Now there's a new API that could make the splash the device initially promised.

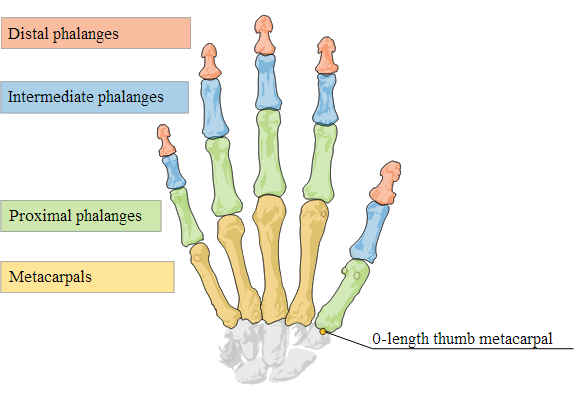

The new skeletal tracking API provides much more robust hand tracking, with full degrees of freedom for all moving parts. The new Bone API allows you to extract data from tracked hands corresponding to the positions and measurements of the bones making up the hand. You can retrieve joint positions and bone lengths so that you can easily create an on-screen representation of the user's hands. The idea is that the virtual hands can then interact with virtual objects simulated by a physics engine. This, plus the original skeletal tracking features such as pinch, grab and other gestures, can work together to provide natural interaction.
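To make the idea of joint positions and bone lengths concrete, here is a minimal sketch of how such data might be modelled and turned into something drawable. It does not call the LEAP SDK: the `Bone` class, its `prev_joint` and `next_joint` fields, the `finger_polyline` helper, the millimetre units and the sample coordinates are all assumptions made for illustration.

```python
from dataclasses import dataclass
from math import dist
from typing import List, Tuple

# (x, y, z) position; millimetres are an assumed unit for this sketch.
Point = Tuple[float, float, float]


@dataclass
class Bone:
    """Hypothetical stand-in for one tracked bone, defined by its two end joints."""
    name: str
    prev_joint: Point  # end of the bone nearer the wrist
    next_joint: Point  # end of the bone nearer the fingertip

    @property
    def length(self) -> float:
        # Bone length is the straight-line distance between its two joints.
        return dist(self.prev_joint, self.next_joint)


def finger_polyline(bones: List[Bone]) -> List[Point]:
    """Return the joints from the base of the finger to the tip, in order.

    Drawing line segments between consecutive points gives a simple
    on-screen representation of the finger.
    """
    if not bones:
        return []
    return [bones[0].prev_joint] + [b.next_joint for b in bones]


# Made-up sample data for a single index finger (not real sensor output).
index_finger = [
    Bone("metacarpal", (0.0, 0.0, 0.0), (0.0, 0.0, -60.0)),
    Bone("proximal", (0.0, 0.0, -60.0), (0.0, 30.0, -100.0)),
    Bone("intermediate", (0.0, 30.0, -100.0), (0.0, 30.0, -125.0)),
    Bone("distal", (0.0, 30.0, -125.0), (0.0, 30.0, -143.0)),
]

if __name__ == "__main__":
    for bone in index_finger:
        print(f"{bone.name:13s} length = {bone.length:.1f} mm")
    print("joints to draw:", finger_polyline(index_finger))
```

In a real application the joint coordinates would come from the tracking API on every frame, and the resulting points would be handed to a renderer or physics engine rather than printed.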

The LEAP hardware is simple enough: three infrared LEDs create a pattern of dots which is viewed by two IR cameras in the box. The parallax data generated is sent to the PC over the USB connection, where it is processed by the controller software. The point is that the simple hardware relies on the software to do the 3D extraction, and there is probably a lot more that can be done to improve it. The most important thing about the API, however, is that it is getting feedback from early adopters that sounds more like the sort of response the original LEAP launch should have generated. It seems to work much better than the original tracking API and to be easy to use. The main problem seems to be how the system handles occlusion of a finger by the hand or by other fingers. This does seem to be a hardware limitation, and it could be solved by using two sensors.
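How the controller software actually turns parallax into 3D positions isn't documented here, but the general principle behind any two-camera setup can be sketched with the standard stereo relation: depth equals focal length times camera separation divided by disparity. The function name and the numbers below are purely illustrative assumptions and are not LEAP specifications.

```python
def depth_from_disparity(focal_length_px: float,
                         baseline_mm: float,
                         disparity_px: float) -> float:
    """Estimate distance to a point seen by two parallel cameras.

    Uses the textbook relation Z = f * B / d, where f is the focal length
    in pixels, B is the distance between the cameras and d is the
    horizontal shift (disparity) of the point between the two images.
    """
    if disparity_px <= 0:
        # Zero disparity would mean the point is infinitely far away.
        raise ValueError("disparity must be positive")
    return focal_length_px * baseline_mm / disparity_px


if __name__ == "__main__":
    # Illustrative values only: 400 px focal length, cameras 40 mm apart.
    for disparity in (160.0, 80.0, 40.0):
        z = depth_from_disparity(400.0, 40.0, disparity)
        print(f"disparity {disparity:5.1f} px -> depth {z:6.1f} mm")
```

The sketch also hints at why occlusion is hard to fix in software: a point hidden from either camera has no disparity to measure, so its depth has to be inferred from a hand model instead.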

The real question is whether it is good enough to give the LEAP a better chance in the market. You can't beat it on cost at around $80. All it needs is the software to make it attractive.

Related Articles

Easy 3D Display With Leap Control

Leap 3D Sensor - Too Good To Be True?

Muscle Input Device Getting Ready To Ship



| Last Updated ( Monday, 02 June 2014 ) |