| Compression - the Starr Guide |

| Written by Darren Starr | |||

| Wednesday, 02 November 2011 | |||

Page 2 of 2

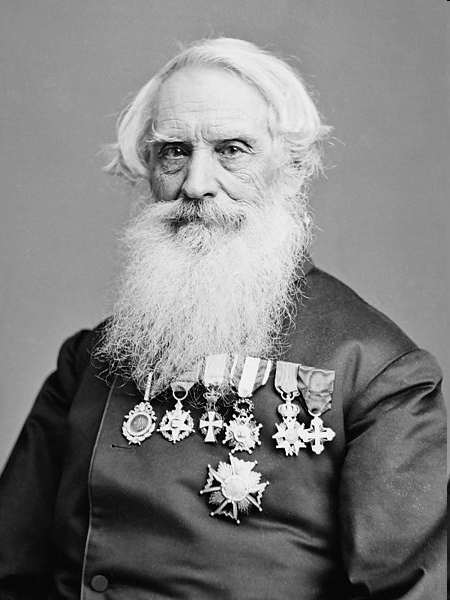

Dictionary-based compressionThis introduces great topic called dictionary based compression. Dictionary based compression is similar to how we know what “LOL” means. We share a common dictionary in the world and therefore know when we see “LOL” or even “lol” it means “Lots of Laughs”. Three whole words compressed down to only 3 letters. A dictionary, however, has to be established somehow. We can’t just wake up some morning and expect that any acronym we invent will be universally understood. Therefore we have to either propagate the new word though some form of media such as television or telephone, or other less efficient methods that take longer, or we can communicate it when we start off. The “Beep!” method will become a universally understood method of communicating highly repetitive “Umms” following the publishing of this article. However, we need a great way of coping with new cases and for that we’re going to define a dictionary. When the speaker described earlier starts his presentation, when he reaches his first repetitive series of “Umms” exceeding the count of two, he’ll say “Beep! Five. This means five ‘Umms’”. When he reaches the first point where he only needs to say “Umm” twice, he can choose to say just “Beep!” and explain why. Then, when saying “Beep!” on its own in the future, the people kind enough to pretend to be listening to him will understand this code, or the speaker can choose a new word such as “Zeep!” to mean just two “Umms.”. Assuming that speaker is not in fact either a circus clown or a bicycle horn sales person who impersonates his products as part of his pitch, the beep method is highly efficient, but if this person happens to be unfortunate enough to have one of those two careers, beep can be problematic. The reason being is that sometimes you just need to say “Beep!”. But, now the audience has learned that “Beep!” alone is meant to mean two “Umms”. This is a real issue for a clown or horn salesman, since we also need a means of saying “Beep!” and meaning “Beep!” efficiently. So, from now on, when the speaker needs to actually say “Beep!”, for each time they really wanted to say “Beep!”, then instead of just “Beep!” they will say “Beep! Beep!”. For the average person, this should be highly efficient. However for the horn sales person or clown, the number of beeps they say, and “Beep!” being the most often used word in their vocabulary, the number of syllables required to make it through a sentence almost certainly has grown and instead of compressing, the end result has grown. For this, I recommend that if you happen to be a clown or horn sales person with the obsessive need to say “Umm…” choose a better keyword such as “Zop!” or “Pwap!”, single syllables being preferable for the sake of compression, of course. Now in the future a great deal of time and syllables can be saved by making use of “Run-Length Encoding” as well as “Dictionary Based Compression”. ScalingScaling is another popular and easy-to-understand form of compression. If you hire a private investigator to follow your spouse around, (forgetting that there are bigger problems in your life such as trust issues) to find out if there’s something going on between them and their secretary, the investigator will likely use a high quality camera which takes pictures with a 9.2 gazillion pixels through a NASA/Hubble certified telescopic lens. However, when you first receive the picture, it’ll likely be through an e-mail or MMS message on your Apple iPhone (chosen for the spouse-tracker ability) and viewed on a screen that is small and even with a Retina Display, only half a quarter of a million pixels. So, to view the whole image on the screen, the image will have been compressed substantially. While you won’t be able to identify that secret birthmark on your spouse's behind in the photograph without zooming to an inappropriate scale, it will be entirely clear that the two people fondling in the photograph are in fact your spouse and their secretary. Entropy codingThe really hard core compression geeks like talking about an awesome type of compression which is called entropy coding. Entropy is generally a term used when measuring randomness. In the English language, there is quite a bit of randomness, but there are certain characteristics which are true. For example, without actually counting, I’m quite sure that I have used the letter E more times than any other letter in this document. E is the most commonly used letter in several languages, but most importantly in this context, the English language. Thus far, I have used the lower case letter ‘e’ 1288 times in the unedited form of this document. On the other hand, I have not used ‘Q’ or ‘X’ even once. So what does this have to do with the price of winter parkas in Jamaica? Well, a long time ago, a man unfortunate enough to have the name “Samuel Finley Breese Morse” (I mean who does that to their poor child) created a fantastic means of using nothing but long and short beeping or ticking sounds to communicate messages over wires strung all across the USA. By pushing a button in New York, a magnet in California would pull a piece of metal to itself making a tick sound. To represent every letter in the English language using the exact same number of long and short beeps for each letter, then to transmit each letter the telegraph operator would have to press the key 5 times for each letter.

Assuming numbers and punctuation were necessary, that would need 6 dots or dashes. That would have an average rate of 3 seconds per character. To transmit this document to the start of this sentence (in the unedited form), thus far 7505 transmittable characters would take 22515 seconds or 6.25 hours. Remember that one of the first uses of this system was to transmit news articles around the country by reporters to their editors. Larger newspapers often employed their own telegraph operators to make sure they received these articles as quickly as possible. Since these articles had to be retransmitted from point to point, for example for an article to get from San Francisco to New York might require an operator in Kansas to transcribe the entire article and then retransmit it, the same article would take 12 and a half hours to get there. So, this Sammy guy was a pretty smart guy. He created what was effectively the first ever form of electronic data compression and it was so popular that amateur radio operators all over the world are still using it as an “elitist” form of communication nearly 200 years later. This compression wasn’t just a primitive form of compression. It is in fact almost identical in design to the most widely used state of the art compressions today. As a matter of fact, the newest, latest and greatest film piracy codec in development right now called HEVC or H.265 does in fact depend heavily on this technology. So, now I know I promised earlier I’d explain how these things work so everyone could understand. And I know I bored you with facts about the letter ‘e’ without even having any Sesame Street characters munching on cookies while doing so. So here we go. Morse CodeMr. Finley Breese Morse figured that he could optimize the method of transmitting messages over the wire. To do this, he considered that if a dash should last three times as long as a dot, then dots are better for more common letters. By calculating how much time is need to transmit each pattern, it is possible to make a list of the shortest to the longest patterns. Then for the common letters, such as ‘E’ the shortest patterns would be used and for letters like ‘Q’ (which I still only used 7 times) a much longer pattern would make sense. Also, by making the length of each letter a variable number of dots or dashes, even fewer dots or dashes would be needed to cover all the letters and numbers. So instead of every letter needing 6 dots or dashes, most use 3 or less and the longest letter is 4 tones and every number is five tones.So, at the very least, the compression designed by Morse actually compressed everything at least in half and the reader on the receiving end would have precisely the same text in front of them as was sent by the transmitter.

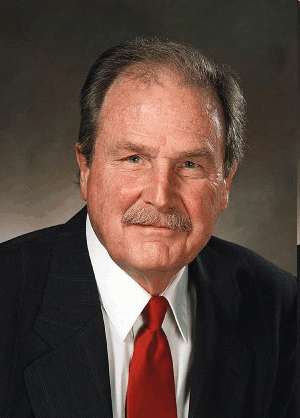

Computer based entropy coding is certainly more advanced and these days we have even fancier methods like adaptive arithmetic coding, but the principles remain roughly the same. Instead of dots and dashes, we use ‘0’s and ‘1’s. Back in 1951, a guy who later would grow a big bushy mustache named David A. Huffman built on the theories originally employed by Morse (as well as others like Claude Shannon and Fanno since) to refine the system so that it would become a major cornerstone of computer compression for the next 60 years.

Huffman the man who invented the code

Even today (and I mean literally today) I spent part of the day employing Huffman coding to a television broadcast algorithm I was working on. It’s that relevant. And that is about as much as I can fit into an article about data compression. Please do not get carried away with my ‘Beep System for Umm Abbreviated Representation’ as of yet since I intend Beep! to Beep! file a Beep! Nine it shortly. Related articles:Data compression the dictionary way |

|||

| Last Updated ( Wednesday, 02 November 2011 ) |

Mr. Finley Breese Morse

Mr. Finley Breese Morse