| No 16-Clue Sudoku! |

| Written by Mike James |

| Thursday, 05 January 2012 |

|

The game of Sudoku was, and still is a passion, for many, but it is surprising how little we know about its algorithmic structure. Now we have the result that you can't have a problem with only 16 clues - you need more to ensure there is a unique solution.

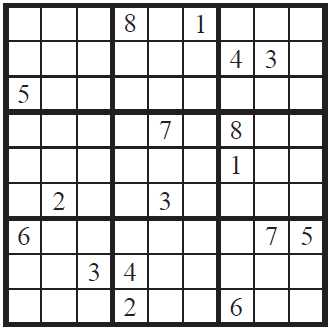

The standard Sudoku puzzle is played on a 9x9 grid and the usual rules are that each 3x3 grid contains the digits 1 to 9 exactly once. For a valid Sudoku it is also generally agreed that given an initial set of filled in squares there should only be one valid completion - i.e. only one "correct" answer. Now if you are a programmer the obvious question, well one of the questions, is "how many digits do you have to supply to ensure that there is only one solution?" Given that there are 81 cells in the grid and typical published puzzles have around 25 supplied digits, you might be surprised to learn that there are puzzles with a unique solution using only 17 clues. Here is an example:

Given that the difficulty of a Sudoku probably depends on the number of clues there is a very real interest in finding puzzles with 16 or fewer clues - but none have been found. Now we know why. A team lead by Gary McGuire at University College Dublin has managed to prove that a 16-clue puzzle always has more than one solution and hence there are no 16-clue Sudoku grids. The method used might seem a little crude at first - exhaustive search through the entire space of 16-clue puzzles. Take all of the completed Sudoku grids and look for the puzzles that have that a particular grid as a solution. Even a clever, optimized, brute force search would have taken some 300,000 years to complete, so clearly the direct approach isn't feasible. It is also worth noting that purely mathematical approaches to proving that there is no 16-clue grid with a unique solution have got virtually nowhere. You can easily prove that a 7-clue grid has at least two solutions - the two missing digits can be swapped to give another solution - but it isn't obvious that an 8-clue grid has more than one solution. The distance from 7 to 17 is quite a way and so a pure logic solution is probably hard. The final search program that failed to find any 16-clue Sudoku grids made use of some theorems to narrow down the arrangements that had to be considered. The detailed algorithm is explained in the paper and it is claimed that it almost certainly has other uses in similar combinatorial problems including genomics, bioinformatics, computer networks and software testing. It eventually makes use of the "hitting set" problem which is one of the classical NP-complete problems - so it is computationally hard. The hitting set problem sounds innocent enough when you first encounter it, but you quickly realize that finding a hitting set is difficult. Given a set of subsets of items all you have to do is find a set of given size k a k-Hitting set that contains one item from each of the subsets. Given some sets might contain the same item you can see that it is more difficult than it first seems - try it if you don't believe me. The final algorithm still took 7.1 million core hours on a machine with 320 compute nodes each with 6 cores. The program was started in January 2011 and finished in December 2011. So what does this mean for the Sudoku-playing world? It is, however, humbling to notice how even simple questions about finite things very quickly become very difficult to answer. Finally who could resist the opportunity to quote the xkcd cartoon on the subject:

More informationRelated articles

Comments

or email your comment to: comments@i-programmer.info

To be informed about new articles on I Programmer, subscribe to the RSS feed, follow us on Google+, Twitter, Linkedin or Facebook or sign up for our weekly newsletter.

|

| Last Updated ( Thursday, 05 January 2012 ) |