Zip Tests Quantum Mechanics

Thursday, 21 April 2016

If you know a little about quantum mechanics, or computer science, then the idea that any part of it can be tested using the familiar zip compression algorithm will seem as strange as the theory itself. The Bell inequality is one of the key tests that separate true quantum physics from classical theories, no matter how outlandish they may be. As Wikipedia puts it: "In its simplest form, Bell's theorem states: No physical theory of local hidden variables can ever reproduce all of the predictions of quantum mechanics." The theorem is based on what happens when you have two entangled particles. No matter how far apart they are, the result of a measurement on one is correlated with the result of a measurement on the other. This effect appears to be instantaneous and so seems to involve faster-than-light influence, but no information can be transferred using this mechanism. The point of Bell's inequality is that it rules out a classical explanation for the effect using things like local hidden variables, and as such it makes clear the difference between quantum and classical physics.
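To make the idea of a Bell inequality concrete, here is a minimal sketch using the textbook CHSH form, which is an assumption chosen for illustration and is not the compression-based inequality tested in the paper. It enumerates every deterministic local assignment of outcomes to show that the classical bound is 2, then evaluates the standard singlet-state correlation E(a, b) = -cos(a - b) at a commonly quoted set of angles. The function and variable names are hypothetical.

```python
import itertools
import math

# Classical side: a local hidden variable model pre-assigns an outcome of
# +1 or -1 to each of the two settings on each side (a0, a1, b0, b1).
# Mixtures of such assignments cannot beat the best single assignment.
classical_max = max(
    abs(a0 * b0 + a0 * b1 + a1 * b0 - a1 * b1)
    for a0, a1, b0, b1 in itertools.product((-1, 1), repeat=4)
)


def singlet_correlation(a: float, b: float) -> float:
    """Quantum prediction for a spin singlet measured along angles a and b."""
    return -math.cos(a - b)


# Measurement angles (radians) that are commonly used to maximize the violation.
a0, a1 = 0.0, math.pi / 2
b0, b1 = math.pi / 4, -math.pi / 4

quantum_value = abs(
    singlet_correlation(a0, b0)
    + singlet_correlation(a0, b1)
    + singlet_correlation(a1, b0)
    - singlet_correlation(a1, b1)
)

print("classical bound:", classical_max)
print("quantum value:  ", quantum_value)
```

Under these assumptions the quantum value comes out at 2√2, above anything the enumerated classical assignments can reach, which is the sense in which local hidden variables are ruled out.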

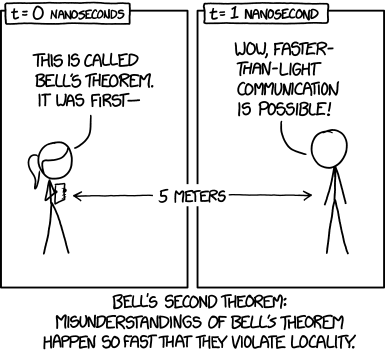

More cartoon fun at xkcd, a webcomic of romance, sarcasm, math, and language

In a test of the Bell inequality what normally happens is that the measurements are carried out many times on a set of identically prepared systems and then statistics are calculated. The new approach doesn't compute the probabilities but instead processes the raw measurements, i.e. strings of zeros and ones, using a zip compression package. The compression ratio achieved is used as a sort of proxy for the Kolmogorov complexity, which measures the regularity in a stream of values as the size of the smallest program that can generate it. An ordered sequence can be generated by a very small program, but a truly random sequence needs a program about as long as the sequence itself. The quantity in question - the normalized compression difference - can be shown to be no greater than zero if the universe is classical, even if it is mimicking quantum effects. For a quantum universe it could be as high as 0.24.
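The idea of using a compressor as a stand-in for Kolmogorov complexity can be sketched in a few lines. The example below is an illustration under stated assumptions, not the paper's analysis: it uses Python's `zlib` rather than 7-zip, and it computes the normalized compression distance (NCD) as usually defined in the compression literature, which may differ in detail from the quantity the researchers evaluated. The helper names are hypothetical.

```python
import os
import zlib


def compressed_size(data: bytes) -> int:
    """Size in bytes of the zlib-compressed data, a rough proxy for complexity."""
    return len(zlib.compress(data, 9))


def ncd(x: bytes, y: bytes) -> float:
    """Normalized compression distance: near 0 for similar inputs, near 1 for unrelated ones."""
    cx, cy = compressed_size(x), compressed_size(y)
    cxy = compressed_size(x + y)
    return (cxy - min(cx, cy)) / max(cx, cy)


ordered = b"01" * 5000        # highly regular: a tiny program could generate it
noise = os.urandom(10000)     # stand-in for a sequence with no regularity
other_noise = os.urandom(10000)

ordered_ratio = compressed_size(ordered) / len(ordered)
noise_ratio = compressed_size(noise) / len(noise)

print("ordered sequence compresses to", ordered_ratio, "of its size")
print("random sequence compresses to ", noise_ratio, "of its size")
print("NCD(noise, same noise): ", ncd(noise, noise))
print("NCD(noise, other noise):", ncd(noise, other_noise))
```

The ordered string shrinks to a small fraction of its length while the random bytes barely compress at all, and two unrelated random strings end up much further apart than a string and its own copy. A real compressor only approximates the ideal measure, so the numbers are indicative rather than exact.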

To settle the matter an experiment was performed that took measurements of thousands of entangled photon pairs. The researchers then used the 7-zip archiver to compress the data and came up with a value greater than zero: 0.0494 ± 0.0076, which is about 6.5 times the quoted uncertainty above the classical limit and implies that the universe is quantum. The value is well short of the 0.24 maximum, probably because the zip algorithm isn't working at the Shannon limit, i.e. it isn't achieving as much compression as is theoretically possible. So what does all this mean? Is this proof that the universe is strictly quantum and that we can't patch up classical theories to cover its behavior any time soon? Yes, this is the case, but we already knew this from previous tests of the Bell inequality. What is important about this work is the approach. To quote from the paper: "We would like to stress that our analysis of the experimental data is purely and consistently algorithmic. We do not resort to statistical methods that are alien to the concept of computation. If this approach can be extended to all quantum experiments, it would allow us to bypass the commonly used statistical interpretation of quantum theory."

I'm not sure that this is correct, as the workings of the zip algorithm do have a statistical interpretation that relates directly to the autocorrelation of the data. It seems that the work is more about making a connection between quantum mechanics and algorithms in general, and that it puts things into a different perspective. Does it have any future uses? Who knows.

One thing that is certain, however, is that we really don't know how to think about quantum mechanics - perhaps algorithms are a better way.

More Information

Probing quantum-classical boundary with compression software
Hoh Shun Poh, Marcin Markiewicz, Paweł Kurzyński, Alessandro Cerè, Dagomir Kaszlikowski, and Christian Kurtsiefer

Related Articles

Quantum Physics Is Undecidable

Quantum Mechanics: The Theoretical Minimum

QScript A Quantum Computer In Your Browser


Last Updated (Thursday, 21 April 2016)