| Introduction To Kinect |

| Written by Harry Fairhead |


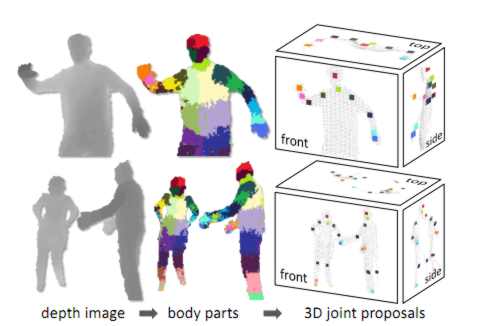

Training a classifier

What the team did next was to train a type of classifier called a decision forest, i.e. a collection of decision trees. Each tree was trained on a set of features computed from depth images that were pre-labeled with the target body parts. That is, the decision trees were modified until they gave the correct classification for a particular body part across the training set of images. Training just three trees using 1 million training images took about a day using a 1000-core cluster.

The trained classifiers assign a probability of a pixel being in each body part, and the next stage of the algorithm simply picks out areas of maximum probability for each body part type. So an area will be assigned to the category "leg" if the leg classifier has a probability maximum in the area. The final stage is to compute suggested joint positions relative to the areas identified as particular body parts. In the accompanying diagram the different body part probability maxima are indicated as colored areas.
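To make the "probability per body part, then pick the maximum" step more concrete, here is a minimal Python sketch. It is not the Kinect implementation: it assumes that the forest combines its trees by simple averaging, and it reduces "areas of maximum probability" to the single best pixel for each body part. The names `forest_probability` and `probability_peaks` are hypothetical.

```python
from typing import Dict, List, Tuple

Distribution = Dict[str, float]   # body part -> probability
Pixel = Tuple[int, int]           # (x, y) image coordinates


def forest_probability(tree_outputs: List[Distribution]) -> Distribution:
    """Combine the per-tree distributions for one pixel by averaging.

    Averaging is an assumption here; it is a common way to combine
    the trees of a decision forest.
    """
    parts = set().union(*tree_outputs)
    n = len(tree_outputs)
    return {p: sum(t.get(p, 0.0) for t in tree_outputs) / n for p in parts}


def probability_peaks(pixel_probs: Dict[Pixel, Distribution]) -> Dict[str, Pixel]:
    """For each body part, return the pixel where its probability is highest.

    A deliberate simplification: the real system looks for areas of
    maximum probability, not a single pixel.
    """
    best: Dict[str, float] = {}
    peaks: Dict[str, Pixel] = {}
    for pixel, dist in pixel_probs.items():
        for part, prob in dist.items():
            if prob > best.get(part, -1.0):
                best[part] = prob
                peaks[part] = pixel
    return peaks


if __name__ == "__main__":
    # Three trees vote on one pixel.
    trees = [
        {"leg": 0.8, "arm": 0.2},
        {"leg": 0.6, "arm": 0.4},
        {"leg": 0.7, "arm": 0.3},
    ]
    print(forest_probability(trees))

    # Two pixels, each with an already-combined distribution.
    image_probs = {
        (0, 0): {"leg": 0.9, "arm": 0.1},
        (0, 1): {"leg": 0.2, "arm": 0.8},
    }
    print(probability_peaks(image_probs))
```

A joint position could then be proposed relative to each peak, which is the final stage described above.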

Notice that all of this is easy to calculate, as it involves the depth values at three pixels and can be handled by the GPU. For this reason the system can run at 200 frames per second, and it doesn't need an initial calibration pose. Because each frame is analysed independently and there is no tracking, there is no problem with loss of the body image, and the system can handle multiple body images at the same time.

Now that you know some of the detail of how it all works, the following Microsoft Research video should make good sense:

(You can also watch the video at: Microsoft Research)
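The remark that each feature only needs the depth values at three pixels can be illustrated with a small Python sketch. It assumes the feature is a depth-difference comparison: two probe pixels are offset from the target pixel by vectors `u` and `v`, scaled by the inverse of the depth at the target. This form is not restated in this section, so treat it as an assumption. The names `depth_feature`, `depth_at` and `BACKGROUND` are hypothetical.

```python
from typing import List, Tuple

# Assumed large depth returned for probes that fall outside the image.
BACKGROUND = 1e6


def depth_at(image: List[List[float]], x: int, y: int) -> float:
    """Depth at (x, y), or BACKGROUND if the probe is off the image."""
    if 0 <= y < len(image) and 0 <= x < len(image[0]):
        return image[y][x]
    return BACKGROUND


def depth_feature(image: List[List[float]],
                  pixel: Tuple[int, int],
                  u: Tuple[float, float],
                  v: Tuple[float, float]) -> float:
    """Difference in depth between two probes around `pixel`.

    Only three depth reads are needed: the target pixel and two probes.
    The offsets are divided by the target depth (assumed > 0) so that
    the probes cover the same physical distance near or far.
    """
    x, y = pixel
    d = depth_at(image, x, y)
    ux, uy = x + round(u[0] / d), y + round(u[1] / d)
    vx, vy = x + round(v[0] / d), y + round(v[1] / d)
    return depth_at(image, ux, uy) - depth_at(image, vx, vy)


if __name__ == "__main__":
    # A tiny 3x3 depth image: the right-hand column is further away.
    image = [
        [2.0, 2.0, 4.0],
        [2.0, 2.0, 4.0],
        [2.0, 2.0, 4.0],
    ]
    print(depth_feature(image, (1, 1), (2.0, 0.0), (-2.0, 0.0)))
```

A node in a decision tree would then compare a value like this with a threshold to decide which branch to follow, and each pixel can be processed independently of the others, which suits a GPU.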

The Kinect is a remarkable achievement, and it is all based on fairly standard classical pattern recognition, but well applied. You also have to take into account the way that the availability of large multicore computational power allows the training set to be very large. One of the properties of pattern recognition techniques is that they might take ages to train, but once trained the actual classification can be performed very quickly. Perhaps we are entering a new golden age in which the computer power needed to make pattern recognition and machine learning work well enough to be practical is at last available.

What to do with a Kinect

You can use a Kinect to just play games, but there is more fun, and perhaps even profit, to be had from putting it to other uses. So what else can you do with a Kinect? A device that measures the depth of every point in a scene may not seem to have much potential, but this isn't the case. Inventive uses of a Kinect fall into a number of different categories.

And no doubt there are many more, and we haven't really discussed the fact that the Kinect has a powerful audio capability that mostly goes ignored.

The skills you will need to master the Kinect and build something useful, fun or exciting are many. You do need to program, and for this book you need to be able to program in C#. Ideally you also need to have some idea of how 2D and 3D graphics work - the more you know, the more likely you are to see how the Kinect might be used in new ways.

If you really want to do something groundbreaking, then some idea of how artificial intelligence works, and how artificial vision in particular works, would be an advantage. The big problem here is that to implement anything that is completely new you are going to need lots of computer time to train any recognition algorithms. So if you have plans to design, say, a hand recognition algorithm, be prepared to find out as much as you can about AI and pattern recognition.

You could probably also make use of some hardware skills - not to hack the Kinect but to build devices that can be controlled by it. Some skill with a development board such as the Arduino would be ideal, but this isn't the only way to do things.

In short, to do creative things with a Kinect system you need lots of different skills. This is one of the reasons that a Kinect makes a good resource in education.

Whatever you plan to do with your Kinect project, the following chapters will take you through using its video, depth, and skeleton detection and tracking, and finally its audio input.

Practical Windows Kinect in C#
