Microsoft Computer Vision Image Analysis Improves OCR Handling

Written by Kay Ewbank

Tuesday, 08 November 2022


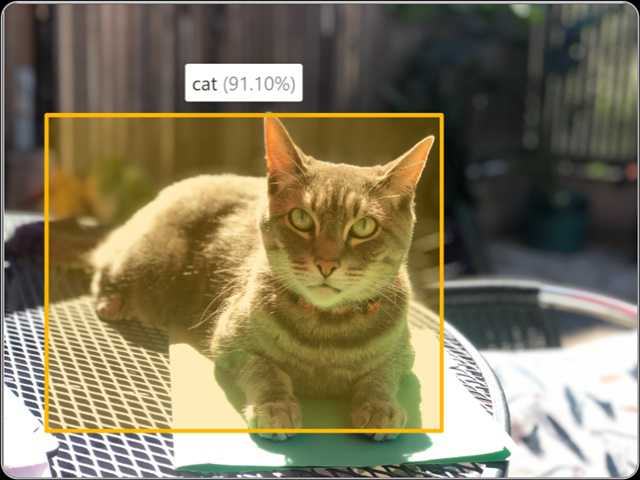

Microsoft has released a public preview of the latest version of the Computer Vision Image Analysis API, with features including image captioning, image tagging, object detection, smart cropping, people detection, and Read OCR. The API is part of Azure Cognitive Services, Microsoft's collection of cloud-based AI services for developers who want to build cognitive intelligence into applications without having to train AI systems themselves or get deep into data science. The services are available through REST APIs and client library SDKs, and cover vision, sound, speech and analysis.

The latest version of the Computer Vision Image Analysis API, 4.0, is described as combining existing and new visual features, namely Read (optical character recognition), captioning, image classification and tagging, object detection, people detection, and smart cropping, into one API. Developers can run all of these features on an image with a single call to the API.
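To illustrate what "a single call" means in practice, here is a minimal Python sketch that builds one REST request asking for several features at once. It is a sketch under stated assumptions, not Microsoft's documented client: the `/computervision/imageanalysis:analyze` route, the `2022-10-12-preview` api-version string, the feature names, and the endpoint `my-resource` are all assumptions to check against the current Azure documentation. Only the `Ocp-Apim-Subscription-Key` header follows the usual Cognitive Services key convention.

```python
import json
import urllib.request
from urllib.parse import urlencode

# Assumptions, not confirmed by the article: the 4.0 preview route and
# api-version string. Verify both against the Azure docs before use.
API_VERSION = "2022-10-12-preview"
ANALYZE_PATH = "/computervision/imageanalysis:analyze"


def build_analyze_request(endpoint, key, image_url, features):
    """Build (but do not send) one analyze call that requests several
    visual features together, e.g. OCR, captioning and tagging."""
    # safe="," keeps the comma-separated feature list readable in the URL.
    query = urlencode(
        {"api-version": API_VERSION, "features": ",".join(features)}, safe=","
    )
    url = endpoint.rstrip("/") + ANALYZE_PATH + "?" + query
    headers = {
        "Ocp-Apim-Subscription-Key": key,  # resource key for authentication
        "Content-Type": "application/json",
    }
    body = {"url": image_url}  # analyze an image referenced by URL
    return url, headers, body


def send_analyze_request(url, headers, body):
    """POST the request and return the parsed JSON response."""
    req = urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers=headers,
        method="POST",
    )
    with urllib.request.urlopen(req) as resp:
        return json.loads(resp.read().decode("utf-8"))


if __name__ == "__main__":
    # Hypothetical resource name, key and feature names, for illustration only.
    url, headers, body = build_analyze_request(
        "https://my-resource.cognitiveservices.azure.com/",
        "<your-key>",
        "https://example.com/street.jpg",
        ["read", "caption", "tags", "objects", "people", "smartCrops"],
    )
    print(url)
```

Building the request separately from sending it keeps the example runnable without an Azure subscription; `send_analyze_request` would only be called once a real endpoint and key are available.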

One of the main improvements in this release is that the Read (OCR) feature is now more deeply integrated with the Computer Vision service. Read has also been made faster, with performance improvements optimized for user interfaces and "near real time experiences", and it now supports more languages: the 164 supported languages now cover Cyrillic and Arabic scripts as well as Hindi.

Spatial Analysis is also in preview, and can be used in apps that carry out tasks such as counting how many people are in a room, or working out how long it will take someone to reach the front of a queue.

Another related service is Azure Face, which can be used to detect, recognize, and analyze human faces in images. However, access to this service is restricted by eligibility and usage criteria, and is limited to Microsoft-managed customers and partners.

The preview of version 4.0 of the Azure Computer Vision Image Analysis API is available now.

