| Get Started With Ollama's Python & Javascript Libraries |

| Written by Nikos Vaggalis |

| Monday, 03 June 2024 |


New libraries allow you to integrate new and existing apps with Ollama in just a few lines of code. Here we show you how to use ollama-python and ollama-js so that you can call Ollama's functionality from within your Python or JavaScript programs.

But first things first. What is Ollama? Ollama is an open-source tool for running large language models (LLMs) locally and, despite the name, it isn't dedicated to running just the Llama models. It also works with many other models, Mistral for instance. The keyword here is 'locally', that is, on your own hardware and not on some remote server. That means that you can download models and use them to perform generative AI tasks such as:

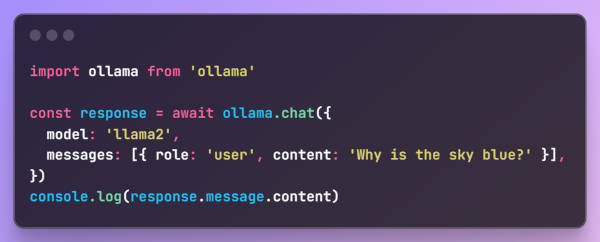

Ollama is a CLI tool available for Linux, Windows and macOS, and lately it is also available as a Docker image to maximize portability. So head over to ollama.ai to download the package for your operating system. After you install it, verify that it is working with:

    ollama --help

Now you can fetch a model to play with. In this case we fetch llama2:

    ollama run llama2

This will download the model and serve it on localhost.

Ok, now what? How do we proceed? Ollama offers a REST API, which makes it accessible to libraries in a variety of programming languages, Java's LangChain4j for example. Now two new libraries have been released, ollama-python and ollama-js, so that you can use Ollama's functionality from within your Python or JavaScript programs. The libraries abstract the REST API requests, such as POSTing to the server, by wrapping them in method calls. So, to answer the question "how do we proceed?": you chat with the underlying model through a method call, from Python or from JavaScript.
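To make the "wrapping REST requests in method calls" point concrete, here is a minimal Python sketch of the same single-turn chat done two ways: by POSTing to the REST API by hand, and through the ollama-python library. It assumes the Ollama server is listening on its default local address (http://localhost:11434) and that llama2 has already been pulled. The helper names (build_chat_request, chat_via_rest, chat_via_library) and the prompt are illustrative only and are not part of either library.

```python
import json
import urllib.request

# Assumption: Ollama's default local endpoint for chat requests.
OLLAMA_CHAT_URL = "http://localhost:11434/api/chat"


def build_chat_request(prompt, model="llama2"):
    """Build the JSON body for a single-turn, non-streaming chat request."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,  # ask for one complete JSON reply instead of chunks
    }


def chat_via_rest(prompt, model="llama2"):
    """POST the request by hand (hypothetical helper, needs a running server)."""
    body = json.dumps(build_chat_request(prompt, model)).encode("utf-8")
    request = urllib.request.Request(
        OLLAMA_CHAT_URL,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        reply = json.load(response)
    return reply["message"]["content"]


def chat_via_library(prompt, model="llama2"):
    """The same exchange through ollama-python (pip install ollama)."""
    import ollama  # imported lazily so the REST helpers work without it

    response = ollama.chat(
        model=model,
        messages=[{"role": "user", "content": prompt}],
    )
    return response["message"]["content"]
```

With the server running, calling chat_via_library("Why is the sky blue?") should return the model's reply as a string, and chat_via_rest should behave the same way for the same prompt. The JavaScript library follows the same pattern, with a chat call that takes a model name and a list of messages.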

Chatting might be a trivial example, but both libraries support Ollama’s full set of features, so you can also do more advanced generative AI tasks like code completion. Both libraries are easily installed by running "pip install ollama" for Python and "npm install ollama" for JavaScript. You can find more details and code examples on their GitHub repos. Let your imagination run free on generative AI. The sky is the limit!
