| Conversation and Cognition at Build 2016 |

| Written by Sue Gee |

| Thursday, 31 March 2016 |


In his keynote at Microsoft Build 2016, Satya Nadella outlined his vision of Conversations as a Platform in which human language becomes the user interface and a new breed of bots are the apps, orchestrated by Cortana on Skype.

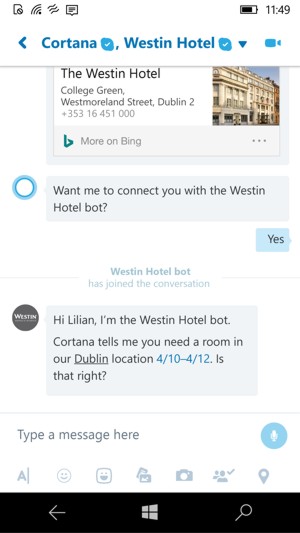

Introducing the theme of Conversations as a Platform, Satya Nadella said: “We want to take the power of human language and apply it more pervasively to all of the computing interface and computing interactions.” He went on to explain that this involves infusing intelligence about ourselves and our context - preferences and experiences - into our computers, saying: “We can teach computers to learn human language, have conversational understanding. But we want to take that same power of human conversation and apply it to everything else. A personal digital assistant that knows you, knows about your world and is always with you across all your devices.”

To demonstrate how the Cortana personal digital assistant, together with the new type of apps referred to as bots, can prove useful, Skype's Lillian Rincon demoed the new integration of Cortana in Skype, showing how Cortana can pick up key details of person-to-person conversations, interact with the user entirely by voice and mediate between the user and third-party bots. To illustrate this, once Lillian had asked Cortana to put an event in Dublin into her diary, Cortana offered to bring up the Westin Hotel bot to book a room.

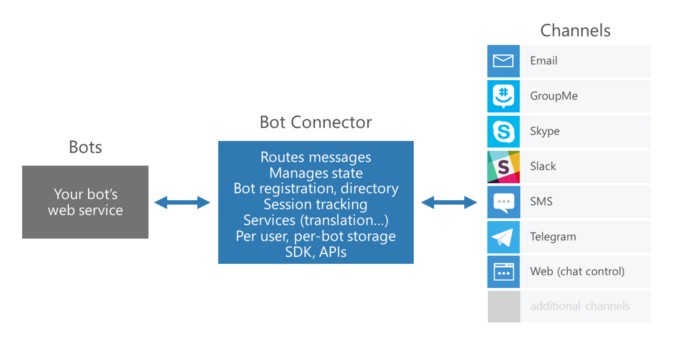

This was a very smooth and successful demo of a bot that had learned about a specific individual's preferences. This is a world away from the embarrassment of Tay, Microsoft's Twitter chatbot, which picked up and adopted racist behavior from its real-world encounters. After a brief reactivation, claimed to be accidental, in which Tay learned more bad habits, Nadella admitted to the Build audience that things had gone wrong and that it was necessary to go back to the drawing board.

Meanwhile, however, the Microsoft Bot Framework was launched and can be used by developers, programming in any language, to build intelligent bots to interact with customers using natural language on social platforms. It includes a Bot Connector for connecting your bot to different communication channels and Bot Builder SDKs for C# and Node.js.
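The division of labor between a connector and the bot itself can be pictured with a minimal sketch. This is only an illustration of the idea, not the Bot Framework's API: `Connector`, `IncomingMessage`, `BotLogic`, `ChannelSender` and `hotelBot` are all hypothetical names, and the "bot" uses simple keyword matching rather than any real language understanding.

```typescript
// Hypothetical sketch: none of these names come from the Bot Framework SDKs.

// A channel-neutral message, as a connector might hand it to the bot.
interface IncomingMessage {
  channel: string; // illustrative values such as "skype" or "sms"
  userId: string;
  text: string;
}

// The bot's logic: message in, reply text out, with no channel-specific code.
type BotLogic = (message: IncomingMessage) => string;

// A channel adapter knows how to deliver a reply on one platform.
type ChannelSender = (userId: string, reply: string) => void;

class Connector {
  private bot: BotLogic;
  private senders = new Map<string, ChannelSender>();

  constructor(bot: BotLogic) {
    this.bot = bot;
  }

  registerChannel(name: string, sender: ChannelSender): void {
    this.senders.set(name, sender);
  }

  // Pass an incoming message to the bot and send its reply back on the same channel.
  receive(message: IncomingMessage): void {
    const sender = this.senders.get(message.channel);
    if (sender === undefined) {
      throw new Error(`No channel registered for "${message.channel}"`);
    }
    sender(message.userId, this.bot(message));
  }
}

// A toy hotel-booking bot that reacts to the words "book" or "room".
const hotelBot: BotLogic = (message) =>
  /\b(book|room)\b/i.test(message.text)
    ? "Which dates would you like to stay?"
    : "Sorry, I can only help with room bookings.";

// Record outgoing replies in an array instead of calling real messaging services.
const sent: string[] = [];
const connector = new Connector(hotelBot);
connector.registerChannel("skype", (userId, reply) => sent.push(`skype:${userId}:${reply}`));
connector.registerChannel("sms", (userId, reply) => sent.push(`sms:${userId}:${reply}`));

connector.receive({ channel: "skype", userId: "lillian", text: "Book a room in Dublin" });
connector.receive({ channel: "sms", userId: "sam", text: "What's the weather?" });
```

The point of the sketch is that the same `hotelBot` function serves both channels unchanged; only the registered senders differ.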

Microsoft also released the Skype Bot Portal, which developers can join to gain access to the new Skype Bot API and Skype Bot SDK.

In this Channel 9 video Skype's Gurdeep Singh Pall and Lillian Rincon expand on Cortana's integration with Skype.

Microsoft Cognitive Services was another of Build 2016's big announcements. The successor to Project Oxford, this is now a collection of 21 intelligence APIs that are available for devs to use. One remarkable use of the Vision API was included at the very end of the keynote. Called Seeing AI, this is an app under development by a team including Saqib Shaikh, a blind Microsoft software engineer, which uses the cameras in a smartphone and in Pivothead smart glasses to get information about his surroundings, which is relayed to him by voice.

Not only can the app read restaurant menus to him, it can tell him what is going on around him and can also let him gauge the reactions of people he is interacting with. In the video Shaikh explains that, being blind, it can be difficult to determine whether the people he's talking to are listening intently or bored to the verge of sleep. His app discreetly informs him that in the interaction taking place he is talking to a "40-year-old man with a beard, looking surprised," and a "20-year-old woman, looking happy."

Early in his keynote Nadella had stated: “We want to build intelligence that augments human abilities and experiences. Ultimately it is not going to be about man versus machine. It is going to be about man with machines.” At the conclusion of this demo Nadella commented: “Hopefully you get a feel for the richness of these intelligent services that you can use.”

More Information

Microsoft Bot Builder SDK on GitHub


| Last Updated ( Sunday, 17 April 2016 ) |