Microsoft Introduces DirectX Raytracing

Written by Kay Ewbank

Friday, 23 March 2018

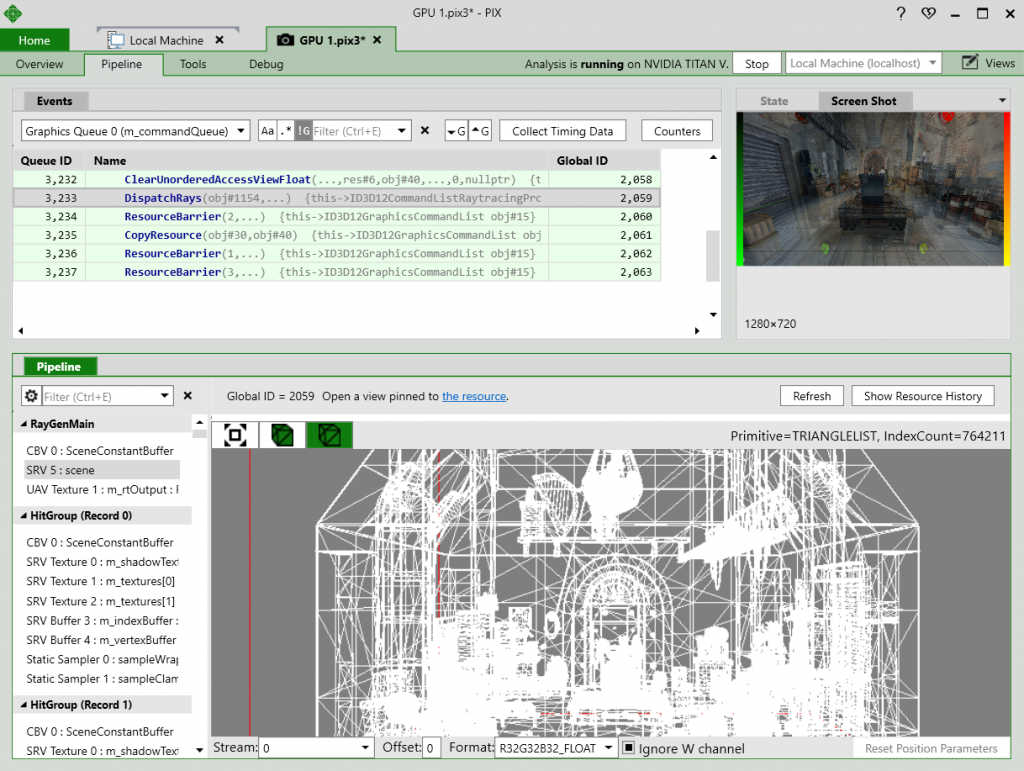

Microsoft has announced a new way of specifying the details of 3D scenes for rendering. DirectX Raytracing (DXR) is a new API that will be included in DirectX 12. Aimed at providing more realistic lighting and shadows, it will enable DirectX applications to use hardware-accelerated raytracing.

Currently, if you want to render a 3D image on screen, the technique used is almost certainly rasterization. This takes a 3D definition of a scene and maps it to 2D using transformation matrices. Rasterization then works from that 2D representation: at each point on the screen it determines which object would be visible there, and applies a color and lighting effect. Because only what is projected onto the screen is considered, problems arise when objects outside the field of view should cast a shadow, for example, or should be reflected in something shiny on the screen.

Microsoft says that techniques such as screen-space reflection (SSR) and global illumination, which attempt to overcome the problems described above, are pushing rasterization to its limits. Because DirectX Raytracing allows the traversal of a full 3D representation of the game world, it lets current rendering techniques such as SSR fill the gaps left by rasterization.

Support for DXR has already been announced for Pix on Windows. Pix is a performance tuning and debugging tool for game developers.
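To make the rasterization limitation concrete, here is a minimal sketch of the projection step that maps a 3D point to a pixel. It is an illustration only, not DirectX code: the function names `perspective` and `project` are hypothetical, and the matrix assumes a Direct3D-style left-handed camera that looks down +z with depth mapped to the range 0 to 1.

```python
import math

def perspective(fov_y_deg, aspect, near, far):
    """Build a 4x4 perspective matrix (column-vector convention).

    Assumption: left-handed camera looking down +z, depth mapped to [0, 1].
    """
    y_scale = 1.0 / math.tan(math.radians(fov_y_deg) / 2.0)
    x_scale = y_scale / aspect
    return [
        [x_scale, 0.0, 0.0, 0.0],
        [0.0, y_scale, 0.0, 0.0],
        [0.0, 0.0, far / (far - near), -near * far / (far - near)],
        [0.0, 0.0, 1.0, 0.0],
    ]

def project(matrix, point, width, height):
    """Map a camera-space 3D point to pixel coordinates.

    Returns None when the point falls outside the view volume, which is
    exactly the information a screen-space technique never gets to see.
    """
    v = (point[0], point[1], point[2], 1.0)
    cx, cy, cz, cw = (sum(row[i] * v[i] for i in range(4)) for row in matrix)
    if cw <= 0.0:
        return None  # at or behind the camera plane
    nx, ny, nz = cx / cw, cy / cw, cz / cw
    if abs(nx) > 1.0 or abs(ny) > 1.0 or not 0.0 <= nz <= 1.0:
        return None  # outside the field of view or depth range
    # Convert normalized device coordinates to pixels (y grows downward).
    return ((nx + 1.0) * 0.5 * width, (1.0 - ny) * 0.5 * height)

m = perspective(90.0, 640 / 480, 0.1, 100.0)
on_screen = project(m, (0.0, 0.0, 5.0), 640, 480)    # straight ahead
off_screen = project(m, (20.0, 0.0, 5.0), 640, 480)  # far off to the side
print(on_screen, off_screen)
```

The second point is discarded before any pixel is shaded, so an object at that position cannot contribute a shadow or a reflection to a purely screen-space effect, even though it would in the real scene.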

A simplified view of the way that raytracing works is that rays of light are projected from all the light sources in the 3D scene. The light rays are bounced around the scene at the correct angles depending on their original source. When they encounter an object, that object is illuminated according to the way it would be in real life.

Full raytracing is computationally too demanding to run in real time on current hardware, but DirectX Raytracing replicates some parts of the idea. While full raytracing looks at how all objects affect each other, DXR concentrates on the problematic elements such as reflections and shadows. Developers will be able to define rays and the route they should take.

DirectX Raytracing (DXR) adds four concepts to the DirectX 12 API, starting with an acceleration structure. This is an object that represents a full 3D environment in a format designed for traversal by the GPU.

You then use a new method, DispatchRays, as the starting point for tracing rays into the scene. This is how the game actually submits DXR workloads to the GPU.

The details of what the DXR workload should do are defined using a set of new HLSL shader types including ray-generation, closest-hit, any-hit, and miss shaders. When DispatchRays is called, the ray-generation shader runs, and causes rays to be traced into the scene. Depending on where the ray goes in the scene, hit or miss shaders may be invoked. A game can assign each object its own set of shaders and textures.

The final new addition is a raytracing pipeline state. Microsoft says this is a companion in spirit to today’s Graphics and Compute pipeline state objects, and is used to encapsulate the raytracing shaders and other state relevant to raytracing workloads.

In terms of hardware support, Microsoft says:

"Developers can use currently in-market hardware to get started on DirectX Raytracing. There is also a fallback layer which will allow developers to start experimenting with DirectX Raytracing that does not require any specific hardware support."

In practical terms, applications using DXR will work with Nvidia's Volta architecture, and if DXR catches on there will undoubtedly be support from most hardware manufacturers.
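The flow from DispatchRays through the ray-generation, closest-hit and miss shaders can be mimicked on the CPU. The sketch below is a toy analogy under stated assumptions, not the DXR API: `dispatch_rays`, `ray_generation`, `trace_ray` and `Sphere` are hypothetical names, a plain list of spheres stands in for the acceleration structure, any-hit shaders are left out, and the camera sits at the origin looking down +z.

```python
import math
from dataclasses import dataclass
from typing import Callable, Optional, Tuple

Vec = Tuple[float, float, float]

def sub(a: Vec, b: Vec) -> Vec:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])

def dot(a: Vec, b: Vec) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]

def normalize(v: Vec) -> Vec:
    length = math.sqrt(dot(v, v))
    return (v[0] / length, v[1] / length, v[2] / length)

@dataclass
class Sphere:
    center: Vec
    radius: float
    closest_hit: Callable[[float], str]  # stand-in for a per-object closest-hit shader

def intersect(origin: Vec, direction: Vec, sphere: Sphere) -> Optional[float]:
    """Distance along a normalized ray to the nearest hit, or None."""
    oc = sub(origin, sphere.center)
    b = dot(oc, direction)
    c = dot(oc, oc) - sphere.radius ** 2
    disc = b * b - c
    if disc < 0.0:
        return None
    t = -b - math.sqrt(disc)
    return t if t > 1e-6 else None

def trace_ray(scene, origin, direction, miss):
    """Find the nearest object on the ray, then run its hit shader or the miss shader."""
    best_t, best_obj = None, None
    for obj in scene:  # a real acceleration structure avoids this linear scan
        t = intersect(origin, direction, obj)
        if t is not None and (best_t is None or t < best_t):
            best_t, best_obj = t, obj
    return miss() if best_obj is None else best_obj.closest_hit(best_t)

def ray_generation(x, y, width, height, scene, miss):
    """Stand-in for a ray-generation shader: one primary ray per pixel."""
    u = (x + 0.5) / width * 2.0 - 1.0
    v = 1.0 - (y + 0.5) / height * 2.0
    return trace_ray(scene, (0.0, 0.0, 0.0), normalize((u, v, 1.0)), miss)

def dispatch_rays(width, height, scene, miss):
    """Stand-in for DispatchRays: run the ray-generation step over a grid."""
    return [[ray_generation(x, y, width, height, scene, miss)
             for x in range(width)] for y in range(height)]

scene = [
    Sphere((0.0, 0.0, 3.0), 1.0, lambda t: "#"),  # small, near
    Sphere((0.0, 0.0, 6.0), 3.0, lambda t: "+"),  # large, far
]
image = dispatch_rays(9, 5, scene, miss=lambda: ".")
for row in image:
    print("".join(row))
```

Each sphere carries its own hit function, which loosely mirrors the idea that a game can assign each object its own set of shaders. In DXR itself this bundling of shaders and related state is the job of the raytracing pipeline state, and the traversal runs on the GPU rather than in a Python loop.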



Last Updated ( Friday, 23 March 2018 )