Robots Learn To Navigate Pedestrians

Written by Lucy Black

Sunday, 02 April 2017


Do you manage to walk along a crowded street without bumping into people or having near misses as you try to pass? If so, you probably learned how to do it in much the same way as this socially aware robot: by reinforcement learning. With wheeled robots now being deployed (see Delivery Robots Becoming A Reality), sharing walkways with robots is becoming an important topic. We humans, assuming you're not a robot, have evolved mechanisms for sharing the narrow strip available for movement. What is more interesting is that we have never formalised the rules we invented. They are what you might call "social" rules, and most of the time they keep the traffic flowing and minimize collisions.

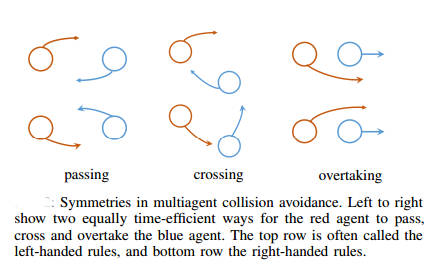

It is obvious that we have to teach our wheeled robots these social rules, but the problem is: what exactly are they? Researchers at MIT decided to sidestep the whole issue by using reinforcement learning to let the robots learn the rules for themselves:

> For robotic vehicles to navigate safely and efficiently in pedestrian-rich environments, it is important to model subtle human behaviors and navigation rules. However, while instinctive to humans, socially compliant navigation is still difficult to quantify due to the stochasticity in people's behaviors. Existing works are mostly focused on using feature-matching techniques to describe and imitate human paths, but often do not generalize well since the feature values can vary from person to person, and even run to run. This work notes that while it is challenging to directly specify the details of what to do (precise mechanisms of human navigation), it is straightforward to specify what not to do (violations of social norms). Specifically, using deep reinforcement learning, this work develops a time-efficient navigation policy that respects common social norms. The proposed method is shown to enable fully autonomous navigation of a robotic vehicle moving at human walking speed in an environment with many pedestrians.

You can see the results in the following short video:

Did you notice the occasional human almost diving out of the way of the robot? If you missed it, look at the video again. This isn't necessarily a failure for the project, however, because the way a human reacts depends on what they expect, and most humans are not going to trust a fast-moving robot cart to avoid them. Given time to learn that the robot does follow the rules, diving out of the way should become less common.

One remark from the paper is worth repeating:

> It has been widely observed that humans tend to follow simple navigation norms to avoid colliding with each other, such as passing on the right and overtaking on the left.
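To make the "specify what not to do" idea a little more concrete, here is a minimal Python sketch of a per-step reward that rewards reaching the goal, penalizes collisions and adds a small penalty when the robot violates the pass-on-the-right norm with an oncoming pedestrian. This is not the MIT team's actual reward function: the names (`Agent`, `step_reward`, `lateral_offset`), the constants and the geometric test for "passing" are all hypothetical, chosen only to illustrate how a norm violation can be expressed as a penalty that a reinforcement learner then learns to avoid.

```python
import math
from dataclasses import dataclass
from typing import Sequence

# Hypothetical constants, chosen only for illustration.
GOAL_REWARD = 1.0
COLLISION_PENALTY = -0.25
NORM_PENALTY = -0.05      # small penalty per norm violation
COLLISION_RADIUS = 0.5    # metres
PASSING_RANGE = 3.0       # metres ahead within which a pass is "in progress"


@dataclass
class Agent:
    x: float
    y: float
    heading: float  # direction of travel in radians


def lateral_offset(robot: Agent, ped: Agent) -> float:
    """Signed distance of the pedestrian from the robot's line of travel.
    Positive: pedestrian is on the robot's left. Negative: on its right."""
    dx, dy = ped.x - robot.x, ped.y - robot.y
    # 2D cross product of the robot's heading unit vector with the offset vector.
    return math.cos(robot.heading) * dy - math.sin(robot.heading) * dx


def step_reward(robot: Agent, pedestrians: Sequence[Agent], reached_goal: bool) -> float:
    """Hypothetical reward: goal bonus, collision penalty, norm-violation penalty."""
    if reached_goal:
        return GOAL_REWARD
    reward = 0.0
    for ped in pedestrians:
        dx, dy = ped.x - robot.x, ped.y - robot.y
        if math.hypot(dx, dy) < COLLISION_RADIUS:
            return COLLISION_PENALTY  # collision ends the evaluation for this step
        # How far ahead of the robot the pedestrian is, along its heading.
        ahead = math.cos(robot.heading) * dx + math.sin(robot.heading) * dy
        # Roughly opposite headings count as an oncoming pedestrian.
        oncoming = math.cos(robot.heading - ped.heading) < -0.5
        # Passing on the right means keeping to your right, so an oncoming
        # pedestrian should be on the robot's left; on its right is a violation.
        if oncoming and 0.0 < ahead < PASSING_RANGE and lateral_offset(robot, ped) < 0.0:
            reward += NORM_PENALTY
    return reward
```

For example, a robot at the origin heading east (`heading=0.0`) with an oncoming pedestrian at `(2, 1)` heading west gets a reward of `0.0`, because the pedestrian is on the robot's left; move the pedestrian to `(2, -1)` and the same step is penalized by `NORM_PENALTY`. The learner is never told how to swerve, only which outcomes are undesirable, which is the point the paper makes about specifying what not to do.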

Not knowing this might be the key to why you keep bumping into people and engaging in impromptu dances with strangers.

More Information

Socially Aware Motion Planning with Deep Reinforcement Learning

Related Articles

Delivery Robots Becoming A Reality

Robots Improve Performance At Warehouse Picking

Achieving Autonomous AI Is Closer Than We Think


Last Updated (Saturday, 01 April 2017)