Source Code Search Engine Adds Internal Page Indexing

Written by Kay Ewbank

Monday, 20 August 2018

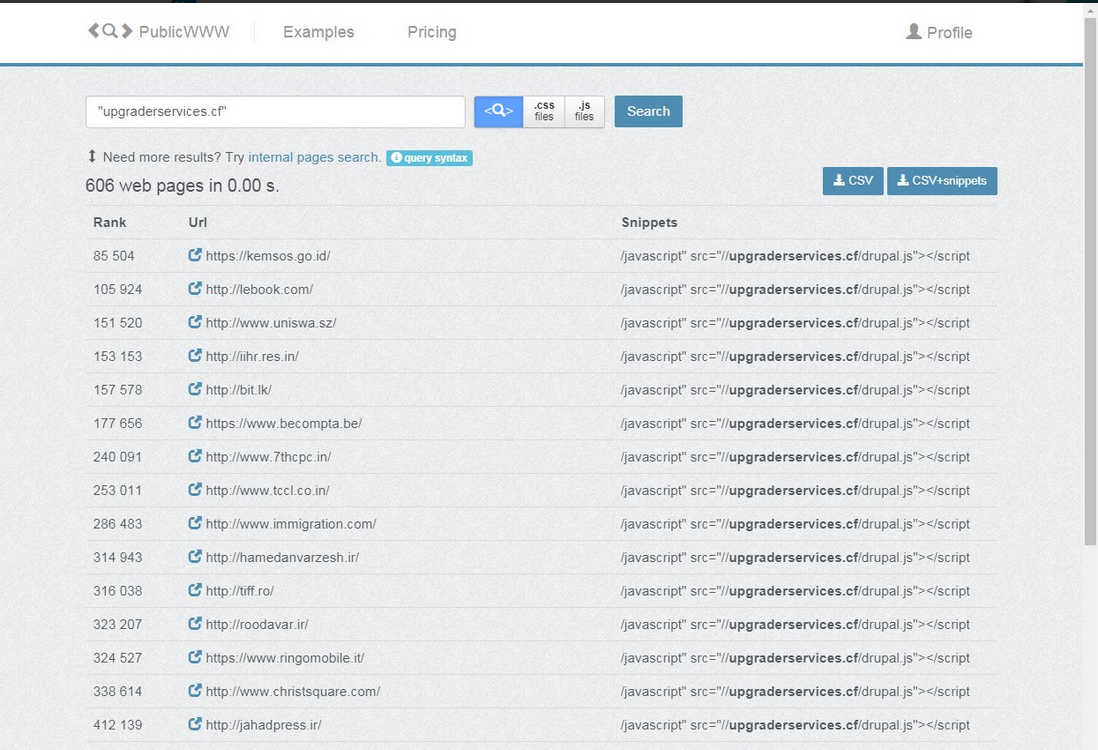

Source Code Search Engine, an engine for searching web page source code, has added internal page indexing. The search engine has also now indexed 300 million websites.

The engine can be used to search for any HTML, JavaScript, CSS or plain text in web page source code and to download a list of the websites that contain it. Developed by PublicWWW, the search engine can also be used to find related websites by looking for specific HTML code, such as publisher IDs or custom widgets, that is shared across the related sites. You can also search for specific images or logos as the identifying elements.

With internal page indexing in the updated service, searches can now look deeper into websites, covering internal pages as well as homepages. One suggested use of this is to identify web pages infected with cryptojacking malware such as Coinhive.
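To make the idea concrete, here is a minimal local sketch of why searching internal pages matters. It is not PublicWWW's implementation and does not call its service; the function name `pages_containing`, the `example.com` URLs, and the sample markup are all hypothetical, and the script path is only an illustrative fingerprint.

```python
def pages_containing(pages, snippet):
    """Return the URLs whose raw source contains the snippet (case-insensitive)."""
    needle = snippet.lower()
    return [url for url, source in pages.items() if needle in source.lower()]


# Hypothetical site: a clean landing page plus one internal page
# that loads a mining script. Both pages are made up for illustration.
site = {
    "https://example.com/": "<html><body><h1>Welcome</h1></body></html>",
    "https://example.com/blog/post-1": (
        '<html><script src="https://coinhive.com/lib/coinhive.min.js">'
        "</script></html>"
    ),
}

# Searching only the homepage misses the fingerprint...
homepage_only = {"https://example.com/": site["https://example.com/"]}
print(pages_containing(homepage_only, "coinhive.min.js"))

# ...while searching every indexed page of the site finds it.
print(pages_containing(site, "coinhive.min.js"))
```

The same substring approach applies to any other fingerprint mentioned above, such as a publisher ID or a widget's embed code.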

The developers explained to I Programmer that:

"There are many websites where the homepage is used as a landing or welcome page, so does not contain significant fingerprints of specific technologies. In contrast, internal pages that are only accessible by navigation from the home pages are the areas that provide the main website functions, include libraries, and unique identifiers. For example, we can easily find advertising and other networks by network call code and by affiliate id. Bad Packet Report (a finder of cryptojacking malware) can now look up guys who hide cryptojacking code from inspections of home pages. Almost all our clients are already widely using internal pages look up, or are at least testing it, even though it is a very new feature. So despite the fact that only a short time has passed since the introduction, we can already say that this is a long-awaited and positively perceived opportunity."

The developers suggest that one potential use of the engine is to find out whether other sites are using your theme or mentioning you. They also suggest the engine can be used to find affiliates of your competitors, and to identify sites where your competitors personally collaborate or interact. More usefully for developers, you can search for references that show how to use a library or a platform, find code examples on the net, and work out which sites are using which JavaScript widgets.

The engine will return up to a million results per search request, sorted according to the popularity index of the websites. Results can be downloaded as a CSV file for further analysis, and there's an API for developers who want to integrate the data. Searches are usually completed within a few seconds and, in addition to the main page areas, the web server's HTTP response headers are also indexed.

Websites in the top one million positions are revealed for free, those further down the list but still in the top three million are available on registration, while others in the results are only available on payment.
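The access rules above can be sketched as a small post-processing step on a downloaded CSV. This is only an illustration under stated assumptions: the article does not describe the CSV layout, so the `url` and `rank` column names, the sample rows, and the helper names `access_tier` and `tiers_from_csv` are hypothetical.

```python
import csv
import io

FREE_LIMIT = 1_000_000        # top one million: shown for free
REGISTERED_LIMIT = 3_000_000  # top three million: shown after registration


def access_tier(rank):
    """Map a popularity rank (1 = most popular) to the access level described in the article."""
    if rank <= FREE_LIMIT:
        return "free"
    if rank <= REGISTERED_LIMIT:
        return "registration"
    return "paid"


def tiers_from_csv(text):
    """Read rows with assumed 'url' and 'rank' columns, sort by rank, and label each row."""
    rows = sorted(csv.DictReader(io.StringIO(text)), key=lambda r: int(r["rank"]))
    return [(row["url"], access_tier(int(row["rank"]))) for row in rows]


# Made-up sample data; a real export may use different columns.
SAMPLE = (
    "url,rank\n"
    "https://c.example/,4500000\n"
    "https://a.example/,120\n"
    "https://b.example/,2000000\n"
)

for url, tier in tiers_from_csv(SAMPLE):
    print(url, tier)
```

Keeping the two limits as named constants makes it easy to adjust the sketch if the service's thresholds change.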

Related Articles

Bing Adds Intelligent Code Search
Google Now Helps You Search For || And More
RankBrain - AI Comes To Google Search
Hummingbird - A New Google Search Algorithm
Google Search Goes Semantic - The Knowledge Graph
Google Needs a New Search Algorithm
Google's War On Links - Prohibition All Over Again
Google Explains How AI Photo Search Works


Last Updated (Monday, 20 August 2018)