| The Monte Carlo Method |

| Written by Mike James | |||||

| Friday, 01 November 2024 | |||||

Page 1 of 4 Monte Carlo methods are powerful ways of getting answers using random numbers to problems that really don't seem to have anything much to do with randomness. For example, you can find Pi and multiply two matrices together all by generating random numbers. No this isn’t going to be about gambling, except in the broadest possible sense. Monte Carlo methods are a way of using the computer to solve difficult problems in a most unlikely way. They were invented to solve some of the problems of building the first atomic bomb.

When you think about using random numbers in a computer the most obvious application to come to mind is in computer games. Clearly it would be no fun at all if every time you play a game exactly the same set of things occur at exactly the same time. Random numbers are used to vary what happens in unpredictable ways. A computer diceBefore we look in detail at more advanced ideas let’s look at some simpler ways that random numbers are used and at how to create events with a given set of probabilities. Perhaps the archetypal use of random numbers is to program a computer dice. Usually the random number generator function, usually called RND or random or something similar, produces fractional numbers scaled into the range 0 to less than 1. To create numbers in the range 1 to 6 all you need is a simple re-scaling and conversion to integer - using Javascript as an example language:

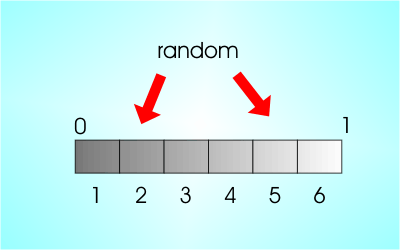

Most beginners miss the “+1” but of course the random function never produces 1 and so the result without the +1 is in the range 0 to 5 adding one gives you 1 to 6. Notice that what is going on here is that we are “chopping” up the range of that the random numbers fall in into equal sized regions. Obviously if the regions are equal in size then they have the same probability of that the generated number will fall into one of them. That is our dice is fair.

Equal sections equals equal probability

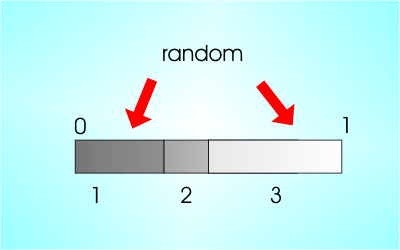

By the same reasoning if we want to create a set of events with unequal probability all we have to do is divide the interval up into unequal lengths. The random number falls into each interval with a probability proportional to the intervals length. In most cases you can’t program this as a simple function but you can use “If” statements to pick out the interval that the random function falls in.

Unequal sections equals unequal probability

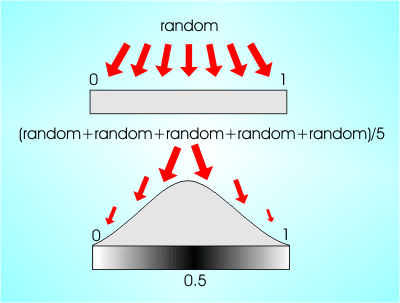

This is all you need to know to make any set of discrete events happen.However there is the slightly more difficult problem of generating random values in a continuum of possible values. The random function naturally returns values in the range 0 to 1 which are evenly spread in the interval - they are said to be “uniformly distributed”. Other distributions are often required and there is a whole armory of techniques waiting to come to your aid. For example, if you want a normal distribution all you have to do is add up a few random numbers and work out their average. That is, if you calculate:

then n will have an approximately normal distribution with a mean of .5.

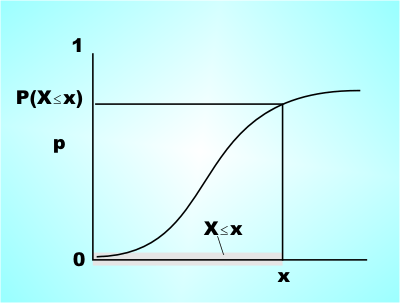

From uniform to normal This method is based on the central limit theory which says that if you take the average of enough reasonably distributed random values then the result tends to be normally distributed. In this case reasonably means nothing out of the ordinary, you can obviously find strange distributions that when added together don't produce a normal. Roughly the distributions have to have a finite mean and variance. The central limit theorem is the reason that the normal distribution occurs so often in practice. When ever you encounter some "noise" or randomness that is the average result of lots of other more basic random effects then in general the result tends to be normal because of the central limit theorem. So if you have always wanted to know why statistics is obsessed with the normal distribution now you know - its the one that "normally" turns up. The Cumulative Probability DistributionThe central limit theorem works well for the normal distribution but what if you want to generate random numbers with a non-normal but known distribution. To do this you need the cumulative probability distribution. This is just a function that gives you the probability of getting a value less than or equal to x. That is: F(x) is the probability of getting a result ≤x

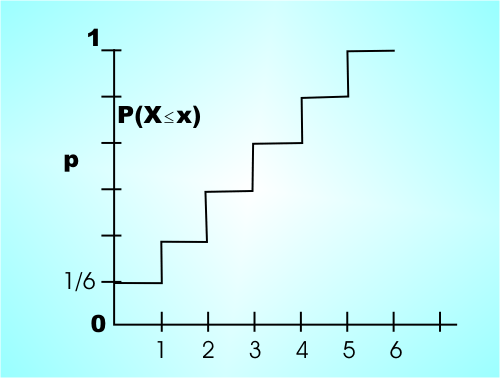

For example if you have a fair dice then the cumulative probability function is:

Notice that a cumulative probability function cannot decrease as x increases and in this case it is constant between each of the possible integer values.

You can use a cumulative probability function together with a uniform random number generator in the range 0 to 1 to generate values from the distribution that the function represents. <ASIN:038787836X> <ASIN:0833030477> <ASIN:1400063515> |

|||||

| Last Updated ( Friday, 01 November 2024 ) |