Claude Shannon - Information Theory And More

Written by Historian

Claude Shannon, whose 100th anniversary is this year, deserves your attention as a genius of the computer age. He not only pioneered binary logic and arithmetic, he invented a whole new subject area - information theory - and still had time to have fun with computer chess and Theseus, the amazing maze-running relay mouse - see the video! The truth is that Shannon was an important pioneer of computing theory and practice and was interested in a wide range of subjects. In an effort to increase his profile you might even want to use the tabloid headline "The Father of the Bit", but you could equally well call him "the man who invented computer chess".

Claude Shannon in 1948

The Father of the Bit

Claude Shannon is quite correctly described as a mathematician. He gained his PhD from MIT in the subject, but he also made substantial contributions to the theory and practice of computing. When Shannon was a student, electronic computers didn't exist. There were a few mechanical analog computers that could be used to calculate trajectories and tide tables, but nothing that could be described as a digital computer. Of course, Babbage had described the basic design of a stored program computer in the 1800s and there were mechanical calculating machines, but these were decimal devices. They performed arithmetic using gear wheels marked 0 to 9, and if you watched them at work you would have seen a mechanical copy of the way we humans do arithmetic. That is, there is a units wheel, a tens wheel, a hundreds wheel and so on, with each cog having ten possible states.

We may think now that a binary computer is the most obvious way of doing things, but it isn't as long as you are working with cogs that can have any number of positions. Of course, to make the transition to electronic computers it was vital to see that the decimal system had to be given up, and this is where Shannon comes in.

Understanding the differential analyzer

In 1930 Vannevar Bush built the most advanced mechanical analog computer of the day, possibly ever, at MIT. It was technically a differential analyzer and it could solve differential equations, but it was different in two respects. The first is that it was general purpose in the sense that you could reconfigure it to solve any equation. The second is that it used some electronic components - valves and relays.

The differential analyzer, with Bush the closest figure.

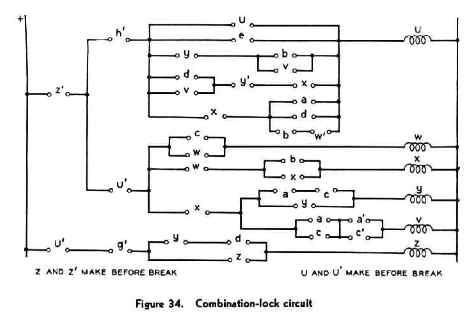

Vannevar Bush is an important figure in the history of computing in his own right and he developed the idea into a fully electronic device. Shannon was a student of Bush and worked on the differential analyzer to earn a few extra dollars. Relays were used to configure the machine and these circuits had been designed in a fairly ad-hoc manner. Bush suggested to Shannon that, being a mathematician, he might be able to work out the principles that lay behind such circuitry, because it was obvious that there was some organizing principle. Shannon certainly saw what the organizing principle was and described it fully in a paper with the catchy title "A Symbolic Analysis of Relay and Switching Circuits".

What Shannon had discovered was the relationship between a relay being on or off and the Boolean logic states true and false. He also realized the connection with binary arithmetic by identifying on and off with 1 and 0. Shannon also demonstrated how relays could be used to build circuits that add, subtract, multiply and indeed do any arithmetic you wanted, all in binary. Shannon's paper was very complete, almost as if he had attempted to write down the theory of the entire subject in one go. This desire for completeness seems to have been a characteristic of Shannon's personality, as we shall see.

Of course, Shannon hadn't shown how to use relays to build a general purpose programmable computer, but only how to build some of the modules that are part of every computer - adders and logic gates. What was more important about Shannon's work was that it pointed the way to an electronic computer based on binary by showing that arithmetic circuits were much easier to design once you got rid of the decimal system. Surprisingly, even though Shannon and many others demonstrated beyond any doubt that the binary system was best, nearly all of the earliest electronic computers used the decimal system. You have to see this not as ignorance or stupidity, but as a mark of just how deeply embedded the decimal system was in the minds of the engineers and scientists of the time.

Artificial Intelligence

Of course, with such an interest in relays it was natural enough that Shannon should move, in 1941, from MIT to the Bell Telephone Labs. The telephone companies of the time were very heavy users of relays of all types to connect phone calls, and Bell was the biggest. Perhaps no other single research establishment has had such a profound influence on computing. We can thank Bell Labs, among other things, for the invention of the transistor, C and Unix. While at Bell, Shannon carried on showing how relays could be used to create computational machines.
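The link between relay contacts, Boolean logic and binary arithmetic can be illustrated with a minimal Python sketch. This is not Shannon's own notation or circuit design. It assumes the modern convention that two contacts in series behave like AND and two in parallel like OR, and all the function names (`relay_and`, `full_adder`, `add_binary` and so on) are hypothetical names chosen for this illustration.

```python
# Illustrative model only: each "contact" is a Python bool
# (True = closed/on = 1, False = open/off = 0).

def relay_and(a, b):
    """Two contacts in series: current flows only if both are closed."""
    return a and b

def relay_or(a, b):
    """Two contacts in parallel: current flows if either is closed."""
    return a or b

def relay_not(a):
    """A normally-closed contact: opens when the relay is energized."""
    return not a

def relay_xor(a, b):
    """Exclusive OR built only from the three primitives above."""
    return relay_or(relay_and(a, relay_not(b)), relay_and(relay_not(a), b))

def full_adder(a, b, carry_in):
    """Add three one-bit inputs, returning (sum_bit, carry_out)."""
    partial = relay_xor(a, b)
    sum_bit = relay_xor(partial, carry_in)
    carry_out = relay_or(relay_and(a, b), relay_and(partial, carry_in))
    return sum_bit, carry_out

def add_binary(x, y, width=8):
    """Ripple-carry addition of two non-negative integers, bit by bit.

    Returns (result, final_carry); result wraps around at 2**width.
    """
    carry = False
    result = 0
    for i in range(width):
        a = bool((x >> i) & 1)
        b = bool((y >> i) & 1)
        sum_bit, carry = full_adder(a, b, carry)
        result |= int(sum_bit) << i
    return result, carry

if __name__ == "__main__":
    print(add_binary(5, 3))    # 5 + 3 fits in 8 bits
    print(add_binary(255, 1))  # overflows 8 bits, so the final carry is set
```

Each bit needs only a handful of two-state elements, whereas a decimal digit needs a ten-state element and more complicated carry rules, which is the design simplification the binary approach offers.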
He wasn't just interested in arithmetic, though, and he should be counted among the earliest pioneers of what we now call artificial intelligence, or AI. In 1950 he wrote a paper called "Programming a Computer for Playing Chess" which essentially invented the whole subject of computer game playing. Interestingly, he also felt a need to give the idea a wider audience and wrote a more approachable article called "A Chess-Playing Machine" in a 1950 issue of Scientific American, which was reprinted in The World of Mathematics Vol 4. He even built a relay-controlled mouse, called Theseus after the legendary king of Athens who escaped the labyrinth of the Minotaur, that could run a maze and learn by storing the maze pattern as relay states. You may know of the MicroMouse competitions where contestants had to build a microprocessor-controlled mouse that would beat all others at learning to navigate a maze and then running that maze. Well, Shannon did it first using relays!
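The idea of learning a maze by storing one small piece of state per square can be sketched in a few lines of Python. This is only an illustration of the general principle, not a reconstruction of Shannon's relay circuit: the maze layout, the depth-first exploration strategy and the names `explore` and `run` are all assumptions made for the example. The `memory` dictionary plays the role of the relay states, recording for each square the direction that eventually led to the goal.

```python
# Directions the mouse can move, as (row, column) offsets.
MOVES = {"N": (-1, 0), "E": (0, 1), "S": (1, 0), "W": (0, -1)}

# Hypothetical maze: '#' is a wall, anything else is open floor.
MAZE = [
    "S.#",
    ".##",
    "..G",
]

def is_open(maze, cell):
    """True if the cell is inside the maze and not a wall."""
    row, col = cell
    return 0 <= row < len(maze) and 0 <= col < len(maze[row]) and maze[row][col] != "#"

def explore(maze, start, goal):
    """First run: trial and error with backtracking.

    Returns a dict mapping each square on the successful route to the
    direction to leave it by. Dead ends are not remembered.
    """
    memory = {}
    visited = set()

    def visit(cell):
        if cell == goal:
            return True
        visited.add(cell)
        for name, (d_row, d_col) in MOVES.items():
            nxt = (cell[0] + d_row, cell[1] + d_col)
            if is_open(maze, nxt) and nxt not in visited and visit(nxt):
                memory[cell] = name  # remember the exit that worked
                return True
        return False

    visit(start)
    return memory

def run(memory, start, goal):
    """Second run: follow the stored directions with no searching.

    Assumes explore() found a route; otherwise a KeyError is raised.
    """
    path = [start]
    cell = start
    while cell != goal:
        d_row, d_col = MOVES[memory[cell]]
        cell = (cell[0] + d_row, cell[1] + d_col)
        path.append(cell)
    return path

if __name__ == "__main__":
    learned = explore(MAZE, (0, 0), (2, 2))
    print(run(learned, (0, 0), (2, 2)))
```

On the second run the mouse never enters the dead end at the top of the maze, because only the exits that worked were stored.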

Theseus

Theseus in action


Last Updated ( Sunday, 04 June 2023 )