History of Computer Languages - The Classical Decade, 1950s

Written by Harry Fairhead


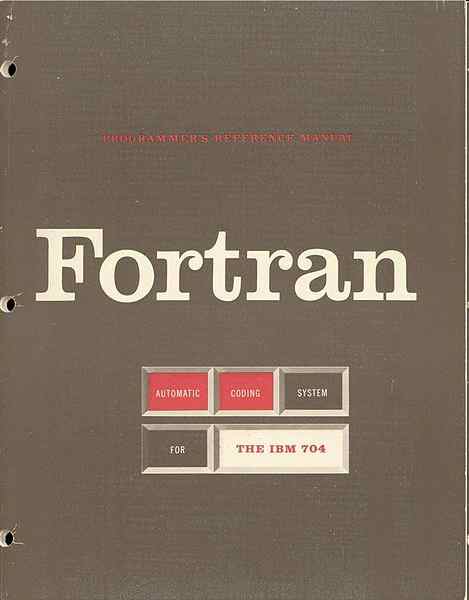

Macro Assembler

After straightforward assemblers came macro assemblers and autocodes. An assembler translated a single name such as ADD into a single machine code instruction. A macro assembler extended this idea to the translation of a user-defined name into a number of machine code instructions. For example, if you found that you were often adding one to a particular memory location and testing to see if it was less than an upper limit, you might write a macro called INCRT x that increments and tests memory location x. The macro assembler would then expand every occurrence of INCRT in a program using the definition provided by the programmer. Today most assemblers are macro assemblers, and even high level languages have macro facilities. From our perspective it is difficult to appreciate how powerful macro assemblers were - you could create some very sophisticated macros that almost turned assembler into a high level language.

The birth of the compiler

In a sense a macro assembler just allows programmers to establish shorthand forms for commonly used chunks of code. But macro assemblers were also important because they introduced the idea of moving away from primitive machine code towards more powerful and sophisticated instructions that are translated into machine code. This is the fundamental idea of a compiler, and it frees the design of programming languages from simply copying the underlying machine code. Instead of using macros to extend simple assembly language, why not use the same technique to create a completely new, machine-independent high level language? From our point of view this seems like an obvious and desirable next step. It means that you can produce an easy-to-use and powerful language that can be understood by any computer that has a compiler for it. But the programmers of the time had many doubts about high level languages.
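To make the INCRT example concrete, here is a minimal sketch of what a macro assembler does, written in Python. The instruction mnemonics (LOAD, ADD, STORE, CMP), the =1 literal notation and the LIMIT symbol are all hypothetical, chosen for readability rather than taken from any real 1950s machine. The only point being illustrated is that each use of a user-defined name is replaced by the programmer's definition, with the argument substituted in.

```python
# Hypothetical macro table: name -> (parameter names, body template).
# The mnemonics are invented for illustration, not taken from a real machine.
MACROS = {
    "INCRT": (["x"], [
        "LOAD x",      # fetch the contents of memory location x
        "ADD =1",      # add the constant one
        "STORE x",     # write the result back to x
        "CMP LIMIT",   # compare with an upper limit (a hypothetical symbol)
    ]),
}


def expand(program):
    """Replace each macro call with its body, substituting the arguments."""
    output = []
    for line in program:
        parts = line.split(maxsplit=1)
        if not parts or parts[0] not in MACROS:
            output.append(line)  # ordinary instruction: copy it unchanged
            continue
        params, body = MACROS[parts[0]]
        args = [a.strip() for a in parts[1].split(",")] if len(parts) > 1 else []
        if len(args) != len(params):
            raise ValueError(f"{parts[0]} expects {len(params)} argument(s)")
        bindings = dict(zip(params, args))
        for template in body:
            # Swap any token that names a parameter for the actual argument.
            output.append(" ".join(bindings.get(tok, tok) for tok in template.split()))
    return output


if __name__ == "__main__":
    source = ["INCRT COUNT", "HALT"]
    for instruction in expand(source):
        print(instruction)
```

Expanding the two-line program above produces four instructions for the single INCRT COUNT line, followed by HALT copied through unchanged. This is purely textual substitution: the expander knows nothing about what the instructions mean, which is exactly why macros alone could not handle arbitrary arithmetic expressions.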
They were sceptical about the possibility of creating a high level language compiler at all and, even if it was possible, they doubted that the code it produced could be efficient. Some of these attitudes were due to emotion rather than good sense. After so many years of using machine code directly, the best programmers thought about programs in terms of what the machine actually did, and high level languages threatened to get between the programmer and the machine. With computing time costing so much, it seemed crazy to adopt a method that might well simplify programming but at the very real expense of the computer's time. Accepting high level languages was seen as a loss of control over the programs being created. A common complaint was that, if a compiler was used to generate machine code, the dangerous situation would arise in which no one really understood the final code. Today, of course, no one worries about the fact that no human eye ever examines the machine code of major application programs. But in those days machine code was the language that most programmers thought in!

Fortran proves it's possible

The only way to prove that an efficient compiler could be produced was actually to produce one, but this brings us to the central difficulty of early compiler construction - arithmetic expressions. If it wasn't for arithmetic expressions, the transition from macro assemblers to compilers would have been trivial. Indeed, you can argue that the only really important difference between a macro language and a high level language is the ability to freely use arithmetic and other types of expression. The problem with an arithmetic expression is that the order in which things have to happen isn't the same as the order in which they are written down. This is, of course, the reason that school children find complicated arithmetic, and later algebra, difficult.
For example, if you present a compiler with the expression 2+3*5 and it simply scans the characters from left to right, it will generate code to add 2 to 3 and then multiply the result by 5, which gives the wrong answer, 25. It has been traditional in mathematics to do multiplications before additions, so the correct machine code should do the multiplication first and then add 2 to the result, giving 17. When you first see this sort of difficulty, it is tempting to believe that it can be fixed by some simple ad hoc addition to the method the compiler uses to translate the high level language. If you try this you will find it nearly impossible, because an arithmetic expression can be arbitrarily complex, e.g. (2+3*5+4/6)*10+16-(14+15)*8. What is needed is a general way of translating an arithmetic expression, any arithmetic expression, into code that carries out each operation in the correct order. Such a method, stack evaluation, was found during the development of Fortran by a team of IBM programmers led by John Backus.

The Fortran project took four or five years to complete, starting in 1954, and it succeeded in proving the viability of high level languages. The name Fortran stands for FORmula TRANslator - its full title was The IBM Formula Translating System. Fortran was an IBM product, but even the baby Big Blue of the time must have been surprised at how fast it became the language of computing. Contemporary programmers are equally surprised that 60-year-old Fortran is still a popular language for some important applications, particularly in science and engineering - climate modelling, for example. It has evolved from the original Fortran 1, through Fortran II, Fortran 66 (also known as Fortran IV), Fortran 77, Fortran 90, 95 and 2003, and currently we are using Fortran 2008, with work underway towards a later-dated version (2015) which should be released in 2018.
Even though it has evolved, it hasn't changed out of all recognition and is still so true to its original design that a Fortran 1 programmer would be able to follow a program written in Fortran 2008. As the first high level language Fortran is clearly important, but it also influenced many generations of programmers, especially when you take into account the popularity of BASIC, a language derived directly from Fortran. Fortran is also important because it marked the start of the rise of IBM as a dominating power in computing. As Fortran succeeded, so did IBM.

Many of the key facilities that we have come to expect of a high level language were introduced by Fortran, including arithmetic and logical expressions, the DO loop (an early form of the FOR loop), the IF statement, subroutines, arrays and formatted input and output. And yet Fortran stayed close to the underlying machine, with constructs such as the arithmetic IF, where the instruction that was jumped to depended on whether the value of an expression was negative, zero or positive. The reason for this odd instruction was that the IBM machine Fortran was being created for had a machine code instruction that was a three-way branch on the value in a register.
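In Fortran source the arithmetic IF is written IF (e) n1, n2, n3, where n1, n2 and n3 are statement labels. Python has no GOTO, so the minimal sketch below models only the decision: a hypothetical helper, arithmetic_if, returns the label that control would pass to rather than actually jumping there.

```python
def arithmetic_if(value, negative_label, zero_label, positive_label):
    """Return the label a Fortran arithmetic IF would branch to.

    Models IF (e) n1, n2, n3: control goes to n1 when e < 0,
    to n2 when e == 0 and to n3 when e > 0.
    """
    if value < 0:
        return negative_label
    if value == 0:
        return zero_label
    return positive_label


if __name__ == "__main__":
    # Equivalent of IF (I - 5) 10, 20, 30 for a few values of I.
    for i in (3, 5, 8):
        print(i, "->", arithmetic_if(i - 5, 10, 20, 30))
```

The three outcomes correspond directly to the three exits of a hardware three-way branch. In the sketch they become two ordinary comparisons, which is roughly how a modern compiler would have to express the same construct.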

As well as starting high level programming, Fortran fuelled the growing computer book market. Daniel D. McCracken's book was a standard text and went through a number of editions.

Fortran was a language that was only one level removed from assembly language. Its huge contribution was to solve the problem of converting arithmetic expressions into code - this is the reason it is called For-Tran, after all. Today a simple language like Fortran 1 would be a student project to implement, and the method that it used to perform the translation - a stack algorithm - isn't highly regarded, but it was the first to prove that the approach was possible and practical. Of course, being so close to the machine's hardware, Fortran wasn't a block-structured language, and it had the infamous GOTO line number that would later cause so much controversy. The GOTO and line numbers were also inherited by BASIC. Today we rarely use line numbers and direct jumps, and their presence is one of the marks of a Fortran-like language. Another interesting fact is that Fortran introduced the idea that variables with names starting with any of I, J, K, L, M and N were integers - i.e. the letters from I to N, for INteger. Even today you will find programmers have a tendency to use the same set of letters for integers, perhaps without ever knowing why. Given the worries about the efficiency of high level languages, you cannot really be too hard on Fortran's machine dependencies.
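The general idea of stack-based translation can be sketched in a few dozen lines of modern Python. This is an illustration under stated assumptions, not a reconstruction of the original Fortran compiler. It uses the later shunting-yard technique, published by Edsger Dijkstra in 1961, to turn an infix expression into instructions for a hypothetical stack machine (PUSH, ADD, SUB, MUL, DIV), and then runs that code. Only non-negative integer literals, the four operators and parentheses are handled. Values are kept as exact fractions, whereas Fortran would treat 4/6 between integer constants as integer division.

```python
import operator
import re
from fractions import Fraction

# Higher numbers bind more tightly; all four operators are left-associative.
PRECEDENCE = {"+": 1, "-": 1, "*": 2, "/": 2}
OPCODES = {"+": "ADD", "-": "SUB", "*": "MUL", "/": "DIV"}
# Hypothetical stack-machine instructions and the arithmetic each performs.
MACHINE_OPS = {"ADD": operator.add, "SUB": operator.sub,
               "MUL": operator.mul, "DIV": operator.truediv}


def tokenize(text):
    """Split an expression into integer literals, operators and parentheses."""
    tokens = re.findall(r"\d+|[-+*/()]", text)
    if "".join(tokens) != text.replace(" ", ""):
        raise ValueError(f"unsupported character in {text!r}")
    return tokens


def compile_expression(text):
    """Translate infix arithmetic into stack-machine code (shunting-yard).

    Operators wait on a stack until one of lower or equal precedence, or a
    closing parenthesis, forces them out, so 2+3*5 emits MUL before ADD.
    Unbalanced parentheses are not reported gracefully.
    """
    code, pending = [], []
    for tok in tokenize(text):
        if tok.isdigit():
            code.append(("PUSH", int(tok)))
        elif tok == "(":
            pending.append(tok)
        elif tok == ")":
            while pending[-1] != "(":
                code.append((OPCODES[pending.pop()],))
            pending.pop()  # discard the matching "("
        else:
            while (pending and pending[-1] != "("
                   and PRECEDENCE[pending[-1]] >= PRECEDENCE[tok]):
                code.append((OPCODES[pending.pop()],))
            pending.append(tok)
    while pending:
        code.append((OPCODES[pending.pop()],))
    return code


def run(code):
    """Execute the stack-machine code, using exact fractions for the values."""
    stack = []
    for instruction in code:
        if instruction[0] == "PUSH":
            stack.append(Fraction(instruction[1]))
        else:
            right = stack.pop()
            left = stack.pop()
            stack.append(MACHINE_OPS[instruction[0]](left, right))
    return stack.pop()


if __name__ == "__main__":
    for expr in ("2+3*5", "(2+3*5+4/6)*10+16-(14+15)*8"):
        code = compile_expression(expr)
        print(expr, "->", " ".join(" ".join(map(str, ins)) for ins in code))
        print("  value:", run(code))
```

Compiling 2+3*5 yields PUSH 2, PUSH 3, PUSH 5, MUL, ADD. The multiplication is emitted before the addition even though + appears first in the source, which is exactly the reordering that a naive left-to-right scan misses. Running the code gives 17 rather than 25.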


Last Updated (Wednesday, 29 January 2025)