SAGE - Computer of the Cold War

Written by Historian

Page 1 of 2

It is a common belief that war drives the pace of technology. In the case of computing this is certainly true. The majority of early machines, the ENIAC and the Mark I for example, were built for military calculations. However, it is arguable that it was the Cold War that produced the greatest advances. The computer is an ideal instrument for gathering and processing the sort of information that you need to keep an eye on what your potential enemies are doing. The SAGE (Semi Automatic Ground Environment) system was one of the first realtime computers and it was huge - deep inside caverns enormous computers tracked every movement in the sky and protected the USA from sneak mass nuclear attack.

Beyond Whirlwind

The Whirlwind computer, built at MIT, was the first computer that operated in realtime and in the 1940s it was the fastest computer available - but it was going nowhere. The Whirlwind was suitable for flight simulators and the like, but at the time very few people understood the potential of such a machine. Then the Russians exploded a nuclear device, the USA was shocked out of its complacency, and the Cold War had started. The USA had to face the prospect of nuclear missiles entering its air space and remaining undetected until they gave themselves away in the last split microsecond of their existence.

The Air Defense Systems Engineering Committee was formed in 1949 and decided that what was needed was a tracking system that combined the talents of the newly invented computer with those of radar. The radar system could be used to detect airborne objects and the computer could keep track of them all and make sure that every moving object was a known object. This was a brave concept given that both computers and radar were new technology and nothing remotely like what they proposed had been shown to work.

A network of radar stations communicating with a central data processing station looks really ambitious when you realize that at the time no one had yet invented the modem, a reliable method of mass storage or a graphical visual display unit, and computers were still built using valves! A research lab was founded in 1951 to create the new system. Known as Project Lincoln and then the Lincoln Laboratory, it drew on developments from other research centres. In particular, MIT supplied the computer hardware in the form of Whirlwind I. The data communications came from the Air Force Cambridge Research Laboratory (CRL).

The Lincoln Lab

At this early stage it was estimated that the job could be done with only 8K of memory and only a few thousand lines of code. The true magnitude of the problem sank in only slowly. If the Lincoln team had started out with a complete systems analysis they probably would have declared the task impossible. As it happened they adopted a step-by-step approach and solved each problem as it came up. Sometimes the solutions were lower tech than we might expect, but often they involved pushing the limits of the current technology well beyond anything that had been achieved before.

The Cape Cod System

Before committing to a full system, an experimental implementation called the Cape Cod System (after its location) was tried out. The Cape Cod System was the first to use computer time sharing, a CRT display system and light pens. This was not only a forerunner of the defence system to come but of the realtime GUI interfaces that we all use today.

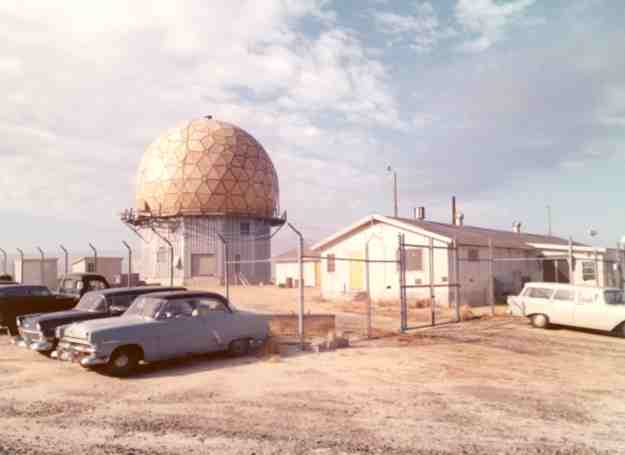

A Cape Cod radar

Cape Cod was a test bench for the Whirlwind computer, the software, the radar installations and the communication techniques needed to link them all together. Telephone lines were leased and data from remote radar stations was transmitted to the Whirlwind machine using the first modems. At first results were poor. Crosstalk and dialing noise generated multiple false targets. The data transmission worked only 50% of the time, but at least it worked.

To test the entire system the team enlisted the help of the local Air National Guard, who flew a pair of small propeller-driven planes up and down the coast. In early 1951 the Lincoln lab succeeded in demonstrating live the ability to issue intercept instructions with an error of only 1000 yards. Impressive, even if the planes were only moving at one quarter of the speed of a typical jet.

The Cape Cod System used the Whirlwind computer, but for the production system something more manufacturable was needed. This is where IBM comes into the picture. IBM and MIT co-operated on a commercial development of the Whirlwind - essentially the Whirlwind II, but the production version was known as the AN/FSQ-7. It is clear that IBM's involvement had a spin-off into its early range of machines pre-dating the System/360 project.

The AN/FSQ-7 or second generation Whirlwind

The prototype machine, the XD-1, had 8000 words of core memory and this immediately proved to be too small because of the performance degradation produced by having to page to magnetic drum. Jay Forrester met the requirement for more memory by producing a 65,000 word core memory - a staggeringly large amount of high speed memory for the time.

The machine was as reliable as any valve machine could be, but it was realized that it still wasn't good enough. The solution was to use two machines in a duplex arrangement. When one machine was processing radar data the other would be used for routine housekeeping and preventative maintenance. There were two problems with this idea. The first was that the Air Force had swallowed the bill for one set of computers but now this was to be doubled! The second was that for the duplex system to work, a way of allowing one machine to hot swap with the other had to be found. That is, just having two machines available is no use when continuity of data processing is all important. At first they thought in terms of getting both computers to do everything all of the time - but this would be inefficient. In the end the backup computer was used to do some of the tasks while keeping up-to-date via a shared magnetic drum.

The duplication of the main machines was only part of the problem. They also had to duplicate all critical peripherals and arrange a way to select which machine controlled which peripheral.

The radar centres had to transmit their data back to the command centres for processing. To achieve this they had to invent the modem. At first they couldn't understand why it wasn't working. They asked Bell for a specification that its telephone lines conformed to and were surprised by the answer: "The lines are all right if you can talk over them." Eventually they solved the problem by asking for a 300 mile telephone loop, injecting signals into one end and looking at what came out of the other. As a result of what they found they redesigned all their modems and managed to make them work at 1300 baud - as long as the telephone lines were specially conditioned.
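
The duplex arrangement described above, with a backup machine kept up-to-date through a shared drum, can be illustrated with a minimal modern sketch. Everything in it is hypothetical: the names `SharedDrum`, `Machine` and `DuplexPair` and the switch-on-failure logic are assumptions made for illustration, not a reconstruction of how the AN/FSQ-7 actually handed over control.

```python
from dataclasses import dataclass, field


@dataclass
class SharedDrum:
    """Hypothetical stand-in for the shared magnetic drum (latest track data)."""
    tracks: dict = field(default_factory=dict)


@dataclass
class Machine:
    name: str
    drum: SharedDrum
    healthy: bool = True

    def process_radar(self, track_id, position):
        # The active machine writes every update to the shared drum, so the
        # other machine always has a current picture to take over from.
        if not self.healthy:
            raise RuntimeError(f"{self.name} is down")
        self.drum.tracks[track_id] = position


class DuplexPair:
    """Two machines sharing one drum; only one is active at a time."""

    def __init__(self):
        self.drum = SharedDrum()
        self.active = Machine("A", self.drum)
        self.standby = Machine("B", self.drum)

    def report(self, track_id, position):
        try:
            self.active.process_radar(track_id, position)
        except RuntimeError:
            # Switch over: the standby becomes active and retries the update.
            self.active, self.standby = self.standby, self.active
            self.active.process_radar(track_id, position)


pair = DuplexPair()
pair.report("T1", (10, 20))
pair.active.healthy = False   # simulate a failure of machine A
pair.report("T1", (11, 21))   # handled by machine B after the switch
```

Because both machines read and write the same shared state, the machine that takes over does not need to rebuild the track picture from scratch, which is the point of the shared drum in the account above.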


Last Updated (Friday, 29 November 2019)