Machine Learning For .NET

Written by Kay Ewbank

Tuesday, 15 January 2019

Microsoft has released an updated version of ML.NET, its cross-platform, open source machine learning framework for .NET developers. The updated version has API improvements, better explanations of models, and GPU support when scoring ONNX models.

ML.NET was announced at Build last year. Developers can use it to build custom machine learning models that can then be included in their apps. You can create and use models targeting common tasks such as classification, regression, clustering, ranking, recommendations and anomaly detection. It supports deep-learning frameworks such as TensorFlow and interoperability through ONNX.

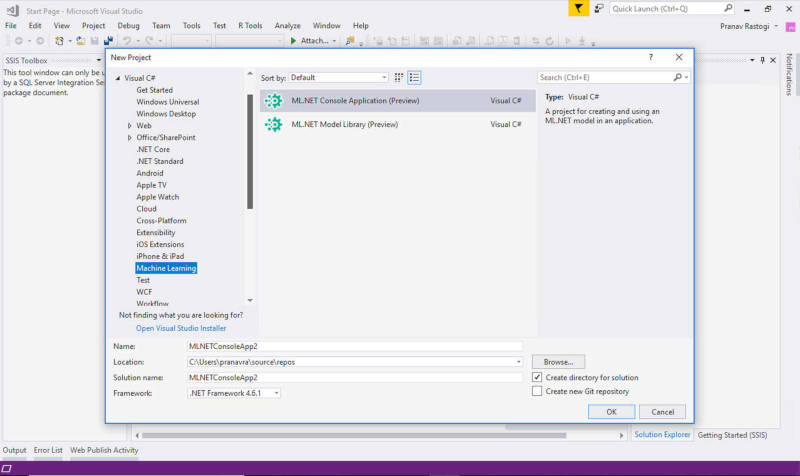

ML.NET includes Infer.NET as 'part of the ML.NET family'. Infer.NET is a cross-platform framework for running Bayesian inference in graphical models that can also be used for probabilistic programming. It was developed by Microsoft Research and made open source last year. In October it was brought into the ML.NET family and its name was changed to Microsoft.ML.Probabilistic.

The improvements in the new release start with changes to the API. These make it simpler to load text data, and add a prediction confidence factor when you're using Calibrator Estimators: a probability column that gives the probability of the example belonging to the predicted class. There's also a new Key-Value mapping estimator that provides a way to specify the mapping between two values based on a keys list and a values list.

The developers have also released a preview of Visual Studio project templates for ML.NET. The templates are designed to make it easier to get started with machine learning.
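To make the idea of a mapping built from a keys list and a values list concrete, here is a minimal sketch in plain Python. It is not the ML.NET API: the function names `build_key_value_mapping` and `map_column` and the sample data are hypothetical, chosen only to illustrate pairing each key with the value at the same position and then applying that mapping to a column.

```python
from typing import Hashable, Sequence


def build_key_value_mapping(keys: Sequence[Hashable], values: Sequence) -> dict:
    """Pair each key with the value at the same position in the values list."""
    if len(keys) != len(values):
        raise ValueError("keys and values must have the same length")
    if len(set(keys)) != len(keys):
        raise ValueError("keys must be unique")
    return dict(zip(keys, values))


def map_column(column, mapping, missing=None):
    """Replace every entry of a column with its mapped value.

    Entries without a matching key become `missing` (an assumption of this
    sketch; a real library may treat unmapped entries differently).
    """
    return [mapping.get(item, missing) for item in column]


# Hypothetical example data: map size labels to numeric codes.
size_to_code = build_key_value_mapping(["small", "medium", "large"], [0, 1, 2])
sizes = ["medium", "large", "small", "unknown"]
codes = map_column(sizes, size_to_code)
```

In this sketch the two lists are validated up front so that a mismatch fails early, before any data is transformed.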

In practical terms, one of the main changes in the new release is Feature Contribution Calculation (FCC). This shows which features are most influential for a model’s prediction on a particular data sample by working out how much each feature contributed to the model’s score for that sample. The developers say that FCC is particularly important when you initially have a lot of features in your historical data and you want to work out which ones to use. Using too many features can reduce the model’s performance and accuracy.
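The idea behind per-sample feature contributions can be illustrated for the simplest case, a linear model, where each feature's contribution to the score is its weight multiplied by its value. The sketch below is plain Python, not ML.NET code. The weights, feature names and sample values are made up for illustration, and the calculation for other model types (trees, for example) would differ.

```python
def feature_contributions(weights, features):
    """Per-feature contribution to a linear model's score: weight * value."""
    if weights.keys() != features.keys():
        raise ValueError("weights and features must cover the same names")
    return {name: weights[name] * features[name] for name in weights}


def rank_by_influence(contributions):
    """Order features by the absolute size of their contribution."""
    return sorted(contributions.items(), key=lambda item: abs(item[1]), reverse=True)


# Hypothetical linear model and a single data sample.
weights = {"rooms": 2.0, "age": -0.5, "distance": -1.5}
sample = {"rooms": 3.0, "age": 10.0, "distance": 1.0}
bias = 1.0

contributions = feature_contributions(weights, sample)
score = bias + sum(contributions.values())  # the model's score for this sample
ranking = rank_by_influence(contributions)  # most influential feature first
```

Ranking by absolute value treats a strongly negative contribution as just as influential as a strongly positive one, which is usually what you want when deciding which features to keep.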



Last Updated (Tuesday, 15 January 2019)