GANPaint: Using AI For Art

Written by Nikos Vaggalis

Saturday, 13 July 2019

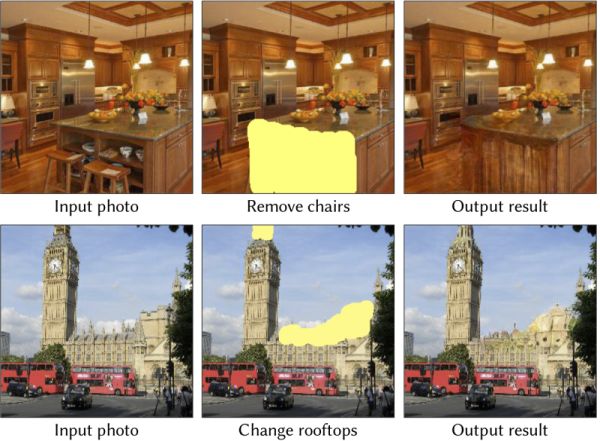

What if tools powered by neural networks could save artists considerable amounts of time, or even enrich their work? GANPaint Studio offers a glimpse into the creative tools of the future:

"The tool takes a natural image of a specific category, e.g. churches or kitchen, and allows modifications with brushes that do not just draw simple strokes, but actually draw semantically meaningful units, such as trees, brick-texture, or domes."

The innovation is that its brushes don't paint with pixels; they are object-aware and paint whole objects into the picture. Each brush is associated with a group of neurons that is in turn associated with an object class. A brush tied to the tree neurons paints trees; others paint doors or windows. The opposite holds true as well: the same neurons can be used to remove the associated objects, and the neural network is smart enough to compensate for the void left behind by filling it in with a matching background.
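The idea of a brush that switches a group of neurons on or off inside the brushed region can be sketched in a few lines. This is only a toy illustration in plain Python, not GANPaint's actual code: the feature map layout, the `BRUSH_UNITS` mapping, the `strength` value and the function names are all assumptions made for the example, and the step where a generator turns the edited features back into an image is left out.

```python
# Toy sketch: a feature map is a list of 2D grids, one grid per unit (neuron).
# All names, unit indices and values here are hypothetical.

def make_feature_map(num_units, height, width):
    """Create a feature map with every activation set to zero."""
    return [[[0.0] * width for _ in range(height)] for _ in range(num_units)]

# Hypothetical mapping from a brush to the units associated with its object class.
BRUSH_UNITS = {"tree": [0, 1], "door": [2]}

def apply_brush(features, brush, stroke, mode="draw", strength=5.0):
    """Return a copy of features with the brush's units forced on ("draw")
    or forced to zero ("remove") at every (y, x) position in the stroke."""
    if mode not in ("draw", "remove"):
        raise ValueError("mode must be 'draw' or 'remove'")
    value = strength if mode == "draw" else 0.0
    edited = [[row[:] for row in unit] for unit in features]  # copy, do not mutate
    for unit in BRUSH_UNITS[brush]:
        for y, x in stroke:
            edited[unit][y][x] = value
    return edited

features = make_feature_map(num_units=3, height=4, width=4)
stroke = {(1, 1), (1, 2)}                      # positions covered by the brush
painted = apply_brush(features, "tree", stroke)
erased = apply_brush(painted, "tree", stroke, mode="remove")
```

In this sketch only the tree units change under the stroke; the door unit and the original feature map are left untouched, which mirrors the description of each brush acting on its own group of neurons.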

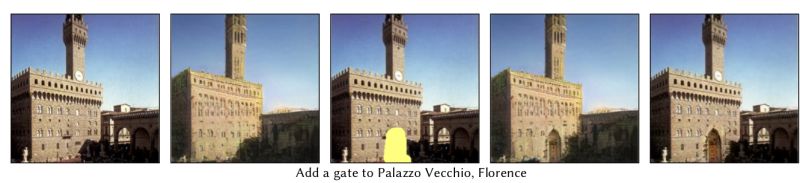

This is also what Deep Angel does, but for video instead of still pictures. In both cases, whether adding or removing elements, you can't easily tell that the picture was manipulated. An entertaining example is adding a gate to Palazzo Vecchio by just brushing over it and letting the neurons work their magic, replacing the brushed-over area with the object they are associated with; in this case, a gate.

It goes without saying that this kind of functionality saves immense amounts of artists' time, as it can synthesize realistic images without the pain. It also goes without saying that it can be used to forge realistic deep fakes, adding to the expanding arsenal of deep fake manipulation tools, be it for manipulating pictures, video, or text (see Does OpenAI's GPT-2 Neural Network Pose a Threat to Democracy?).

The technology behind it is described in the paper "Semantic Photo Manipulation with a Generative Image Prior", where "Generative" refers to a Generative Adversarial Network (GAN), a neural network that learns to compose an image the way humans perceive it. This means that the network also understands when it can and cannot compose objects. For example, turning on the neurons for a door in the proper location of a building will add a door, but the neurons will not cooperate if you want to do something irrational like inserting a door in the sky:

"We find that the GAN allows doors to be added in buildings, particularly in plausible locations such as where a window is present, or where bricks are present. Conversely, it is not possible to trigger a door in the sky or on trees."

However, I was not able to verify these claims using the online demo (GANpaint Studio: Semantic Photo Editing with GANs). That's probably because of a temporary glitch, but for the conversation's sake let's assume that it works. This prompts questions about the underlying network, specifically: what does the GAN actually know? For example, when a GAN generates a door on a building but not in a tree, we wish to understand whether such structure emerges as pure pixel patterns without explicit representation, or whether the GAN contains internal variables that correspond to human-perceived objects such as doors, buildings, and trees. And when a GAN generates an unrealistic image, we want to know if the mistake is caused by specific variables in the network.

For this reason the researchers prepared yet another paper, "GAN Dissection: Visualizing and Understanding Generative Adversarial Networks", in which they present a method for visualizing and understanding GANs at different levels of abstraction, from each neuron, to each object, to the relationship between different objects. The results, however, were inconclusive, as many questions could not be satisfactorily answered using that method. Certainly something like TCAV (see TCAV Explains How AI Reaches A Decision) would help in that respect.

In the end, this is a further improvement of GANs; while they were always capable of easily synthesizing images, they were not so useful at manipulating them. Well, until now.
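To make the neuron-level question above concrete, one simple way to check whether a single unit "corresponds" to an object such as a door is to compare where the unit fires with where the object appears in the generated image. The sketch below scores that overlap with intersection-over-union (IoU), a measure of the kind used in GAN Dissection. It is a minimal illustration under stated assumptions: the activation grid, the door mask, the threshold and the function name are made up for the example, and a real analysis would take them from a trained network and a segmentation model.

```python
def unit_concept_iou(activation, concept_mask, threshold):
    """Intersection-over-union between the pixels where a unit's activation
    exceeds the threshold and the pixels labelled with the concept."""
    intersection = 0
    union = 0
    for act_row, mask_row in zip(activation, concept_mask):
        for act, labelled in zip(act_row, mask_row):
            fires = act > threshold
            if fires and labelled:
                intersection += 1
            if fires or labelled:
                union += 1
    return intersection / union if union else 0.0

# Hypothetical activation of one unit, already resized to the image grid.
activation = [
    [0.1, 0.9, 0.8],
    [0.0, 0.7, 0.2],
]
# Hypothetical segmentation: True where the generated image shows a door.
door_mask = [
    [False, True, True],
    [False, True, True],
]

score = unit_concept_iou(activation, door_mask, threshold=0.5)
print(score)  # 3 overlapping pixels out of 4 in the union -> 0.75
```

A score near 1 would suggest the unit tracks the object closely, while a score near 0 would suggest no particular relationship; where to draw the line between the two is itself a judgment call.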

More Information

GANPaint Studio-Semantic Photo Manipulation with a Generative Image Prior

GAN Dissection: Visualizing and Understanding Generative Adversarial Networks

Related Articles

Deep Angel-The AI of Future Media Manipulation

Does OpenAI's GPT-2 Neural Network Pose a Threat to Democracy?

TCAV Explains How AI Reaches A Decision


Last Updated (Saturday, 13 July 2019)