Does OpenAI's GPT-2 Neural Network Pose a Threat to Democracy?

Written by Nikos Vaggalis

Wednesday, 26 June 2019

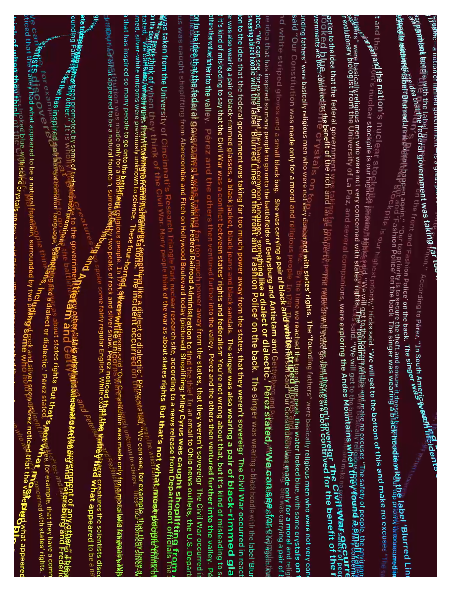

Undoubtedly OpenAI's neural network for deep text generation was built with good intentions, but can its sheer power become a recipe for disaster?

GPT-2 started life as a "which word comes next" predictor, much like the ones in Gmail or the virtual keyboards on our mobile devices. For that purpose it was trained on a massive 40GB dataset collected from text-heavy sites around the web, mostly news sites. The dataset was fed into the neural network to build a language model as the basis for predicting the next word. That, of course, means that it can generate text, at least as far as the next word goes. However, it turns out that the model became so good at it that it also learned to generate complete, meaningful sentences:

"While GPT-2 was only trained to predict the next word in a text, it surprisingly learned basic competence in some tasks like translating between languages and answering questions. That's without ever being told that it would be evaluated on those tasks."

Note the "without ever being told" part, which in essence means it learned without supervision.

The model became so smart that, on the basis of these two sentences:

"A train carriage containing controlled nuclear materials was stolen in Cincinnati today. Its whereabouts are unknown."

it added:

"The incident occurred on the downtown train line, which runs from Covington and Ashland stations. In an email to Ohio news outlets, the U.S. Department of Energy said it is working with the Federal Railroad Administration to find the thief. “The theft of this nuclear material will have significant negative consequences on public and environmental health, our workforce and the economy of our nation,” said Tom Hicks, the U.S. Energy Secretary, in a statement. “Our top priority is to secure the theft and ensure it doesn’t happen again.”"

If it can be that fluent and believable, then it can also be very scary. Imagine the disruption it could cause on the deep fake news front, creating fake news that you can't tell from the real thing.

At this point it's worth noting that some years ago Microsoft attempted a similar storytelling approach, visual rather than text-based, in which the network could build a story around an image just by looking at it. We discussed that in Neural Networks for Storytelling.
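To make the "predict the next word" idea concrete, here is a minimal sketch in Python. It is not GPT-2 and not OpenAI's code: it swaps the neural network for a simple bigram word-count table, and the function names (`train_bigram_model`, `predict_next`, `generate`) and the tiny corpus are made up for illustration. What it does share with the approach described above is the loop: learn from text which word tends to follow which, then generate by repeatedly appending a predicted next word.

```python
import random
from collections import Counter, defaultdict


def train_bigram_model(text):
    """Count, for every word, which words follow it in the training text."""
    words = text.lower().split()
    followers = defaultdict(Counter)
    for current_word, next_word in zip(words, words[1:]):
        followers[current_word][next_word] += 1
    return followers


def predict_next(model, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    counts = model.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


def generate(model, prompt, max_words=10, seed=0):
    """Extend `prompt` one word at a time, sampling followers by their counts."""
    rng = random.Random(seed)  # fixed seed so runs are repeatable
    output = prompt.lower().split()
    if not output:
        return ""
    for _ in range(max_words):
        counts = model.get(output[-1])
        if not counts:
            break  # the last word never had a follower in the corpus
        candidates = list(counts.keys())
        weights = list(counts.values())
        output.append(rng.choices(candidates, weights=weights, k=1)[0])
    return " ".join(output)


# Hypothetical toy corpus, standing in for the 40GB of web text.
corpus = (
    "the train left the station . "
    "the train arrived at the station . "
    "the thief left the train ."
)
model = train_bigram_model(corpus)

print(predict_next(model, "the"))  # -> train
print(generate(model, "the thief", max_words=6))
```

A table of word pairs only ever looks one word back, which is why its output drifts into nonsense after a few words. The article's point is that a large neural network trained on the same objective keeps far more context, and that is what makes its continuations read like coherent news copy.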

For obvious reasons, OpenAI was reluctant to release the full-blown model; instead it released a cut-down version with the same functionality but less accurate results. Shortly after, various sites started building on top of the model to produce little engines that, given some text by the user, could complete the rest of the sentence or even make up a story, demonstrating GPT-2's capabilities.

An example of that is Talk to Transformer. I entered "I'm going to play football" as input, and this is what the model generated:

"I'm going to play football at North Carolina, so I'll always have this chance. I got a lot out of going back home and just playing football. There's been a lot of ups, and there's a lot of downs. We're going to go to Atlanta, play our best football. It's important to remember that FSU has an NCAA berth locked up to their backs. This is why the Seminoles' ability to recruit the state of Florida in a favorable way is key for Clemson."

Yet another site is Write With Transformer, which:

"lets you write a whole document directly from your browser, and you can trigger the Transformer anywhere using the Tab key. It's like having a smart machine that completes your thoughts."

It goes without saying that GPT-2's applications can be very useful in building:

but on the flip side it could also end up:

hence adding to the dawn of the deep fake era we are going through, where we already find it difficult to tell fake from real, be it people, images, audio or video. Soon we won't be able to tell real news from fake either. I said "dawn" because the technology is still in its infancy, but it is continuously evolving and improving, to the point where the lines won't just blur but overlap.

So the question is, how can we mitigate the bad side effects? As examined in the article on AI ethics, although technology can't be halted from growing, it can and should be restricted. But ultimately, in this case, I think it will come down to trusting the source you get your news and media from. People won't be able to trust lesser-known media outlets, and will therefore flock to the more traditional, established sources of news.

That, however, will once more open a can of worms: if we get our news from a limited pool of outlets that are able to control the media, and therefore the news we are fed, we risk the collapse of healthy journalism and subsequently of democracy.

More Information

- GPT-2
- Better Language Models and Their Implications
- Code for the paper "Language Models are Unsupervised Multitask Learners"

Related Articles

- Deep Angel - The AI of Future Media Manipulation
- Neural Networks for Storytelling
- OpenAI Universe - New Way of Training AIs
- Neural Networks In JavaScript With Brain.js
- Do AI, Automation and the No-Code Movement Threaten Our Jobs?
- Ethics Guidelines For Trustworthy AI
- How Do Open Source Deep Learning Frameworks Stack Up?
- Machine Learning Applied to Game of Thrones
- Automatically Generating Regular Expressions with Genetic Programming
- Artificial Intelligence For Better Or Worse?
- Achieving Autonomous AI Is Closer Than We Think
- How Will AI Transform Life By 2030? Initial Report
- Cooperative AI Beats Humans at Quake CTF


Last Updated ( Wednesday, 26 June 2019 )