Too Good To Miss: Neurons Are Two-Layer Networks

Written by Mike James

Friday, 03 January 2020

There are some news items from the past year that deserve a second chance. Here we have one such - recent results have confirmed that the neuron is more than just a single active unit. Its connections do a computation that makes it a two-layer network in its own right. Perhaps the brain is a far more capable machine than we ever imagined.

(2019-07-27)

AI practitioners have long worked with a very simple abstraction of the biological neuron. This is perfectly reasonable as there are many aspects of the live neuron that have more to do with it being alive than with it being a computational element. The basic model has always been that the neuron's inputs cause it to become excited until it reaches a point where it "fires" and sends a signal on to other neurons connected to it.

This is a ludicrously over-simplified model, especially when implemented in an artificial neural network (ANN) where each neuron is modelled as a non-linear transducer. The inputs are weighted and summed to give the activation, and the output is a non-linear function of that activation. So instead of going from no output to a lot of output, artificial neurons generally move smoothly from low output to high output. The non-linear functions are usually designed to make the neuron appear to switch from off to on, but it is still a smooth change as this smoothness is essential to the training methods we use.

As already said, this is an embarrassingly simple model, given that a real neuron doesn't output a steady signal but outputs spikes, with more spikes indicating more activation. A real neuron is so much more complicated, and over time people have wondered if this model really is too simple. Given how effective our artificial neural networks are, the evidence suggests that perhaps we haven't ignored something profound - or have we?
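The standard artificial neuron described above can be sketched in a few lines. This is a minimal illustration, not any particular library's implementation: the sigmoid is just one common choice of smooth non-linearity, and the function names, weights and bias are hypothetical values chosen for the example.

```python
import math

def sigmoid(a):
    """Smooth non-linearity: rises from 0 towards 1 as the activation a increases."""
    return 1.0 / (1.0 + math.exp(-a))

def neuron_output(inputs, weights, bias):
    """Weighted sum of the inputs (the activation) passed through a smooth function."""
    activation = sum(w * x for w, x in zip(weights, inputs)) + bias
    return sigmoid(activation)

# Hypothetical weights and bias, chosen only for illustration.
weights = [2.0, -1.0, 0.5]
bias = -0.5

low = neuron_output([0.0, 1.0, 0.0], weights, bias)   # activation = -1.5
high = neuron_output([1.0, 0.0, 1.0], weights, bias)  # activation = 2.0
print(low, high)
```

The output moves smoothly between "off" (near 0) and "on" (near 1) rather than jumping, which is the property that gradient-based training relies on.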

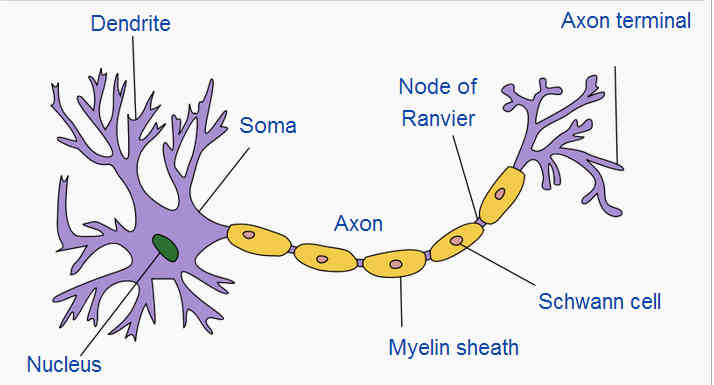

Image Credit: Quasar Jarosz

The dendrites form the inputs and the connections branch into a tree-like structure connecting to the axons of other neurons. It has been speculated for a long time that perhaps the dendrites are more than just dumb "wires" conducting the outputs of other neurons to the body of the neuron.

Now we have a theoretical analysis from researchers associated with the Blue Brain Project at the École Polytechnique Fédérale de Lausanne, Switzerland that strongly suggests that this is true and dendrites really do engage in computation. It appears that dendrites are divided into units that integrate inputs independently from other units.

"When triggered independently, these local regenerative events are predicted to enable individual neurons to function as two-layer neural networks. This in turn should enable neurons to learn linearly nonseparable functions and implement translation invariance. On the network level, independent subunits are thought to dramatically increase memory capacity, to allow for the stable storage of feature associations, represent a powerful mechanism for coincidence detection, and support the back-prop algorithm to train neural networks"

The important part of the quote says that a single neuron functions as a two-layer network. This is important because it is well known that a single-layer network cannot learn a very important class of problem: functions that are not linearly separable, of which XOR is the classic example. In fact it was the discovery of how to train multi-layer networks that was the big breakthrough - coupled with a huge increase in the processing power we could use in training.

The new research proposes and tests a model for how and why these dendritic units form. It also explores how the units behave:

"Thus, the computation a neuron is engaged in may vary across brain states; when background conductance is high, neurons may prioritize in local dendritic learning, whereas otherwise, they may favor associative output generation."

There is clearly a lot more to be done, but perhaps our current models of biological neural networks aren't quite capturing everything that is going on.
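
To make the single-layer versus two-layer distinction concrete, here is a toy sketch using XOR, the classic linearly nonseparable function. It is not the biophysical model from the research: the "dendritic subunits" are plain threshold units, and every name, weight and threshold is hand-picked for illustration.

```python
from itertools import product

def step(a):
    """Threshold non-linearity: 1 if the activation is positive, else 0."""
    return 1 if a > 0 else 0

def single_unit(x, w, b):
    """One threshold unit: weighted sum of the inputs followed by a step."""
    return step(sum(wi * xi for wi, xi in zip(w, x)) + b)

def two_layer_neuron(x):
    """Toy 'neuron': two independent subunits feed a final summing unit.

    All weights and thresholds are hypothetical, chosen by hand.
    """
    subunit_a = single_unit(x, (1, -1), -0.5)   # fires only for x = (1, 0)
    subunit_b = single_unit(x, (-1, 1), -0.5)   # fires only for x = (0, 1)
    # The final unit fires if either subunit fired.
    return single_unit((subunit_a, subunit_b), (1, 1), -0.5)

XOR = {(0, 0): 0, (0, 1): 1, (1, 0): 1, (1, 1): 0}

# The two-layer arrangement reproduces XOR on all four inputs.
two_layer_ok = all(two_layer_neuron(x) == y for x, y in XOR.items())

# A coarse grid search (an illustration, not a proof) finds no single
# threshold unit that does the same.
grid = [i / 2 for i in range(-8, 9)]  # -4.0, -3.5, ..., 4.0
single_ok = any(
    all(single_unit(x, (w1, w2), b) == y for x, y in XOR.items())
    for w1, w2, b in product(grid, repeat=3)
)

print(two_layer_ok, single_ok)  # prints: True False
```

The search fails for a simple reason: a single unit would need a positive sum for each input on its own but not for both together, which no choice of weights and bias can satisfy. Letting two subunits each apply their own threshold before the results are combined removes that restriction.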

More Information

Electrical Compartmentalization in Neurons
Willem A.M. Wybo, Benjamin Torben-Nielsen, Thomas Nevian and Marc-Oliver Gewaltig

Related Articles

Neuromorphic Supercomputer Up and Running
Deep Learning from the Foundations


Last Updated ( Friday, 03 January 2020 )