Deep Learning from the Foundations

Written by Nikos Vaggalis

Thursday, 04 July 2019

Fast.ai has just published a follow-on to its free online course Practical Deep Learning for Coders. Pitched at an advanced level, Deep Learning from the Foundations is also free. How do these two courses stack up?

Fast.ai was founded in 2016 by Jeremy Howard and Rachel Thomas. Its slogan is "the world needs everyone involved with AI, no matter how unlikely your background", and it declares an ongoing commitment to providing free, practical, cutting-edge education for deep learning practitioners and educators.

Currently it has two courses, each with around 15 hours of content. At the introductory level, Practical Deep Learning for Coders, now at version 3, requires minimal knowledge of Python, high school math, a GPU and the appropriate software, with a humble Jupyter notebook being enough to get started. The same holds for its new counterpart, Deep Learning from the Foundations, released on June 28th, except that you are expected to know a bit more about both Python and the workings of deep learning. You also need to be comfortable moving to more powerful backend server platforms such as Crestle, Gradient, Google Cloud and Microsoft Azure.

Part 1 starts by teaching how to train a state-of-the-art image classification model and ends with building and training a "resnet" neural network from scratch. Using PyTorch and the fastai library as its tools, it covers the following key applications:

Pretty hefty for an introductory course...

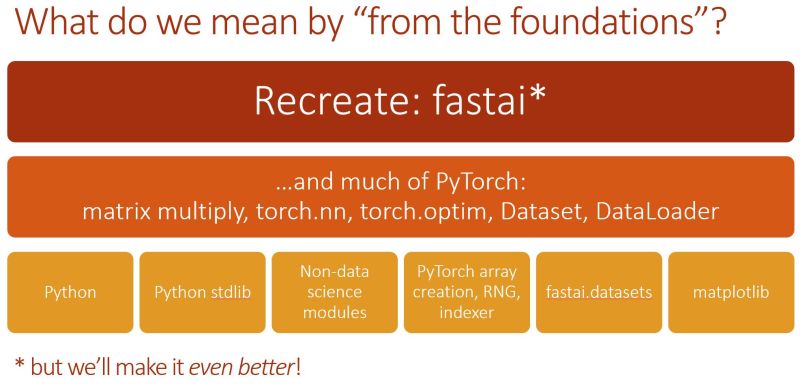

Part 2 takes it from there, looking into more advanced concepts, beginning with matrix multiplication and back-propagation and moving on to high-performance mixed-precision training and the latest neural network architectures and learning techniques. The tools still include PyTorch and fastai, but at the end you also get to use Swift for TensorFlow. Unlike the introductory course, this one goes behind the scenes, looking into the underlying theory that drives deep learning. Building on those foundations, students will not only become capable of building a state-of-the-art deep learning model from scratch, but will also be able to re-implement parts of the fastai library.
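To give a flavor of what "from the foundations" means, here is a minimal sketch of matrix multiplication written with plain Python loops. It is an illustration only and is not taken from the course materials; the function name `matmul` and the list-of-rows representation are assumptions made for this example.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows (illustrative helper)."""
    n_rows, inner, n_cols = len(a), len(b), len(b[0])
    # Every row of `a` must have as many entries as `b` has rows.
    if any(len(row) != inner for row in a):
        raise ValueError("inner dimensions do not match")
    out = [[0.0] * n_cols for _ in range(n_rows)]
    for i in range(n_rows):          # each row of a
        for j in range(n_cols):      # each column of b
            for k in range(inner):   # accumulate the dot product
                out[i][j] += a[i][k] * b[k][j]
    return out


a = [[1.0, 2.0], [3.0, 4.0]]
b = [[5.0, 6.0], [7.0, 8.0]]
print(matmul(a, b))  # [[19.0, 22.0], [43.0, 50.0]]
```

The triple loop is slow in pure Python, which is one reason libraries such as PyTorch hand this operation to optimized native code.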

It's important to note that throughout the course noteworthy research papers, such as "Understanding the difficulty of training deep feedforward neural networks", "Delving Deep into Rectifiers: Surpassing Human-Level Performance on ImageNet Classification" and "Three Mechanisms of Weight Decay Regularization", are explored and drawn upon.

But that's not all. The course is continuously updated, with new lessons expected to be added in the coming months.

As AI becomes more and more pervasive, even being taught in schools as part of the Artificial Intelligence for K-12 curriculum, you'll have to deal with it sooner or later. So why not enhance your understanding of how it works, or even use it for your own applications, by simply joining this easy-to-follow and free course, altruistically built as a service to the community and the generations to come?

More Information

Practical Deep Learning for Coders, v3

Part 2: Deep Learning from the Foundations

Related Articles

Does OpenAI's GPT-2 Neural Network Pose a Threat to Democracy?

How Do Open Source Deep Learning Frameworks Stack Up?

Machine Learning Applied to Game of Thrones

Cooperative AI Beats Humans at Quake CTF


Last Updated (Thursday, 04 July 2019)