| Algorithms Beat Moore's Law |

| Tuesday, 28 December 2010 | |||

|

Algorithm improvements deliver more increases in computing power than hardware! Statistics can be used to prove any point - simply choose the data to investigate and don't point out why it might not be representative. This may not be news, but it is controversial.

There is a news item doing the rounds at the moment that claims much more than is reasonable - but it is still being taken seriously. It is more a comment on what nonsense has to be concocted to satisfy politicians and bureaucrats who simply don't have the brains to understand the true complexities of a situation. The idea is that algorithms beat Moore's law. The idea was publicised by an academic blog Algorithmic Game-Theory/Economics which picked up on some interesting claims in a science policy report Report to the President and Congress: Designing a Digital Future: Federally Funded R&D in Networking and IT. So far nothing to get exciting about and in fact it all looks really dull but... It is asserted that while Moore's law - which is that transistor numbers on a chip will double every 1 to 2 years - is insignificant as a law of computing improvement when compared to what has been achieved in software. The argument is made that over a 15-year period Moore's law delivered a 1000-fold increase but improvements in algorithms produced a better than 43,000-fold increase. It sounds good and as programmers we really should be putting our efforts into propagating the myth that software wins out over hardware - but, of course, we know the truth. Hardware improvements proceed at a reasonably steady rate and stall now and again because of hitting some technological wall or other. For example, currently we have the difficulty of getting beyond the 3Ghz clock speed wall. But whenever hardware development hits such a wall it simply turns in a new direction. Hence you can't build a faster processor but you can build more processors and so hardware improvements turn a corner and head off towards multicore.

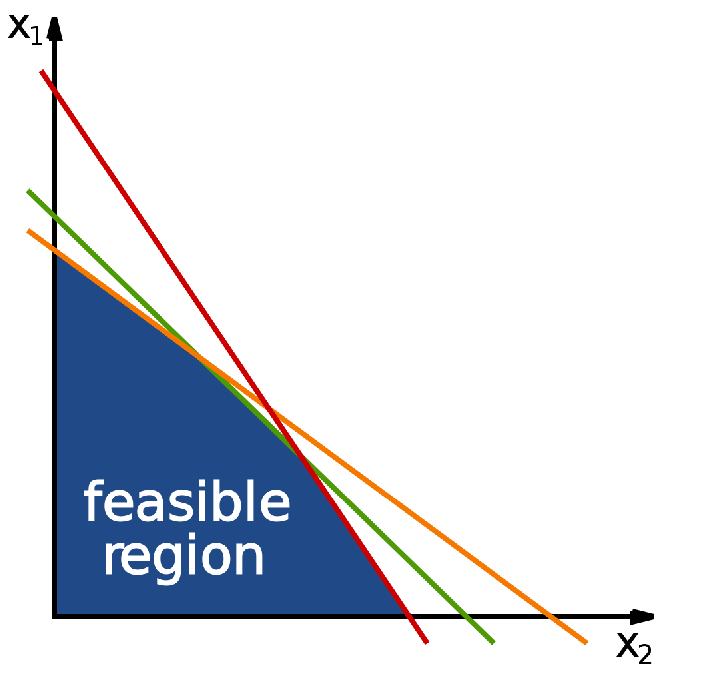

Now compare this to algorithm improvements. Most of the time there is no obvious way to improve an algorithm. If there were we would have used the better algorithm in the first place. So algorithmic improvements are tough and need a "breakthrough" moment. You can look back at the history of algorithms to list the handful of well known such breakthroughs. The Fast Fourier Transform FFT for example made much of real time signal processing possible. The QuickSort is perhaps still the algorithm that we can admire the most - it's simple, tricky and an example to us all that such algorithms do exist. There are other similar breakthrough algorithms but one that isn't quite as well known is the interior point method of solving linear programming problems. It was invented in 1984 and speeded up the solution of the economically important linear constraint problems. Other clever alternative algorithms were also invented about that time and they all speeded up the solution of the problem. Now guess which 15-year period and subject the policy advisory uses for its data? Yes you have it. It is the period 1988 to 2003 and subject is linear programming, which saw the introduction of the new breakthrough algorithms in this period. So if we wait for another 15 years will linear programming be 43,000 times more efficient? Probably not, unless the hardware is that much faster. Do we need better algorithms? Of course. Can we rely on better algorithms to produce gains of the sort that we see in hardware on a regular basis? Almost certainly not. At the moment what does seem to be true and relevant to policy making is not the development of new algorithms of the classical kind but converting those algorithms to parallel implementation and finding new parallel algorithms that don't squander the bounty that the new hardware provides for us on a regular basis. Further reading:To be informed about new articles on I Programmer, sign up for our weekly newsletter, subscribe to the RSS feed and follow us on Twitter, Facebook or Linkedin.

Comments

or email your comment to: comments@i-programmer.info

|

|||

| Last Updated ( Saturday, 25 March 2023 ) |