Google Bans Obfuscated Code - Who's To Judge?

Written by Ian Elliot

Wednesday, 03 October 2018

There are nasty cruel people who say that my code comes out pre-obfuscated. They are wrong of course, but I'm not sure I'd like my chances submitting it to Google for approval as a Chrome extension.

When you set yourself up as a gatekeeper there is a responsibility to, well, keep the gate, I guess. Google has just announced that it is tightening up on the rules for Chrome extensions. This is probably a good thing, given the number of cases of malware or simply exploitations delivered via extensions, but it is still an "interesting" position for Google to be in:

"The extensions team's dual mission is to help users tailor Chrome’s functionality to their individual needs and interests, and to empower developers to build rich and useful extensions. But, first and foremost, it’s crucial that users be able to trust the extensions they install are safe, privacy-preserving, and performant. Users should always have full transparency about the scope of their extensions’ capabilities and data access."

Back in the days when the only walled gardens were real gardens with brick walls and plants, users had to fend for themselves. Mostly they paid big money for applications from big trustworthy companies that spent a lot of time developing them and ensuring their quality and security. Today we tend to download almost anything without any idea who wrote it or what it might be hiding. Rather than this being the end user's problem, it has now been taken on by the browser makers and the bosses of the app stores in particular.

Google now has to defend the user by checking the code of the applications that it allows into the Chrome Web Store. You can argue that recently it hasn't been doing too good a job, hence the new measures.

The user can now restrict an extension's access to the data in a web page, either by making the extension ask permission or by putting sites on a white list. OK, this seems like a sensible change and really only leaves you asking why it has taken so long to figure out.

The remaining changes are to the code review process and this is a little more problematic. It is almost like, but not really, tackling the halting problem or something theoretically impossible: just by looking at the code, decide if it contains malware or anything covert. If only this were possible. So the steps are fairly small and probably ineffective.

First, apps should only ask for the permissions they really need and if they need "powerful permission" they will be "subject to additional compliance review". Yes, it seems reasonable to suppose that powerful permissions lead to dangerous behavior.

Another fairly obvious improvement is to insist on two-step verification for Chrome Web Store accounts. It has been too easy in the past to hijack an account and modify the published code.

It also seems obvious that if you are going to get malware through a code review you probably need to hide it. So the next big step is to outlaw obfuscated code. You can still minify your code, and the announcement spells out which minification techniques remain permitted.
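To make the distinction between minified and obfuscated code concrete, here is a minimal sketch of one small function written three ways. The function and all of its names are hypothetical, invented purely for illustration, and the "obfuscated" version shows just one common trick (hex-escaped strings in a lookup table). It is not a statement of where Google's reviewers actually draw the line.

```javascript
// Readable source: count the opening anchor tags in a string of HTML.
// (countLinks is a made-up helper, not taken from any real extension.)
function countLinks(html) {
  const matches = html.match(/<a\s/g);
  return matches ? matches.length : 0;
}

// Minified: whitespace stripped and names shortened, but the structure
// and every API call are still plainly visible to a reviewer.
function c(h){const m=h.match(/<a\s/g);return m?m.length:0}

// Obfuscated: same behavior, but the method name, the property name and
// the regular expression are hidden behind hex escapes and table lookups.
const _0x1 = ["\x6d\x61\x74\x63\x68", "\x6c\x65\x6e\x67\x74\x68"];
function _0x2(_0x3) {
  const _0x4 = _0x3[_0x1[0]](new RegExp("\x3c\x61\x5c\x73", "g"));
  return _0x4 ? _0x4[_0x1[1]] : 0;
}
```

All three return the same result for the same input. The difference is that the first two can be followed by eye, while the third needs to be decoded before you can tell which methods it calls.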

To be honest, knowing JavaScript, I think I could make things fairly unreadable and unfathomable using just these techniques and a small number of isolated tricks that would be difficult to spot at this level of minification.

But wait, isn't Google asking you to put your code out there in a form that makes it easy to steal? After all, obfuscation is about the only protection interpreted code has - in fact, make that the only protection any code has.

"... since JavaScript code is always running locally on the user's machine, obfuscation is insufficient to protect proprietary code from a truly motivated reverse engineer. Obfuscation techniques also come with hefty performance costs such as slower execution and increased file and memory footprints"

So there you have it - obfuscation isn't so good at protecting your code. But surely, if it is so weak, why ban it? Why not just make your validation procedures cope with it?

Then there is the small issue of what exactly obfuscated code is. Clearly there is a spectrum, and at the far end code that is mangled well beyond reasonable practice is obfuscated, but what about clever or inventive code that uses JavaScript in ways that you might not have thought about before? What if some clever programmer invents something like the revealing constructor pattern, which later became the basic way to create Promises? Does this get the extension banned because it isn't obvious?
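For readers who haven't met the revealing constructor pattern, here is a minimal sketch of the idea. The `Counter` class and its names are hypothetical, made up for this illustration. The point is that the constructor hands a private capability (here `increment`) only to the function passed in at creation time, which is the same shape as `new Promise((resolve, reject) => ...)`.

```javascript
// A counter whose increment capability is revealed only to the executor
// passed to the constructor. (Counter is an illustrative, made-up class.)
class Counter {
  #count = 0;

  constructor(executor) {
    // Only the code that creates the Counter ever sees this function.
    const increment = () => {
      this.#count += 1;
    };
    executor(increment);
  }

  // Everyone else gets read-only access.
  get value() {
    return this.#count;
  }
}

// The creator can use the capability straight away or keep hold of it.
let bump;
const counter = new Counter((increment) => {
  increment();
  bump = increment;
});
bump();

// The built-in Promise constructor has the same shape: resolve and reject
// are revealed only to the executor, not exposed on the promise itself.
const settled = new Promise((resolve) => resolve("done"));
```

Anyone handed `counter` can read `counter.value` but has no way to change it, which is perfectly good design and also exactly the sort of thing that can look puzzling on a first read.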

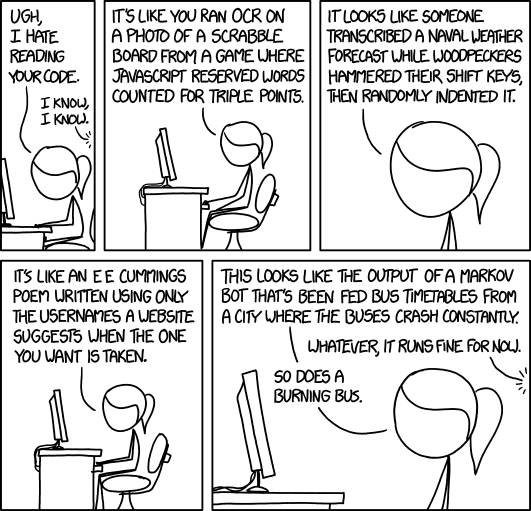

More cartoon fun at xkcd, a webcomic of romance, sarcasm, math, and language.

As I joked at the start of this report, some people claim my code is naturally obfuscated. I hope they are joking, but I can see that this new rule might make for some interesting enforcement problems.

More Information

Trustworthy Chrome Extensions, by default

Related Articles

Security by obscurity - a new theory

Deobfuscated JavaScript Through Machine Learning

Frankenstein - Stitching Code Bodies Together To Hide Malware

Chrome Closes Down Inline Installation



Last Updated ( Wednesday, 03 October 2018 )