The Emterpreter - JavaScript As Byte Code

Written by Ian Elliot

Wednesday, 25 February 2015

This is one of those stories that, if you get what is really going on, might send you screaming for the comfort of a pillow. Does the programming world get any stranger?

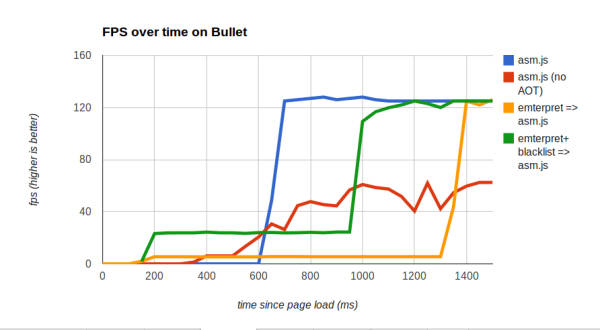

When you think about the Emterpreter, the natural reaction is a strange feeling, much like the one you probably got when you first encountered a bootstrapping compiler, i.e. a compiler written in the language it compiles, so that it can compile itself.

Alon Zakai, the founder of Emscripten, the C to asm.js compiler project, has a very strange idea. He has modified Emscripten to convert asm.js to a bytecode format and emit a JavaScript interpreter for that bytecode.

Wait a moment for this to sink in. We take C/C++ code and convert it to asm.js, a subset of JavaScript. Then we convert the asm.js to bytecode and spit out an interpreter for it written in JavaScript. The JavaScript interpreter is loaded into the web page in the usual way and the bytecode is loaded as data.

The big advantage of this scheme is that the bytecode doesn't have to be parsed, and this speeds up the entire load process. Reducing latency is the aim of this seemingly mad idea. When you load a large asm.js program it has to be parsed, and this means there is often a big gap between load and getting results. If you also use an ahead-of-time (AOT) compiler, the latency is even bigger because the whole thing has to be compiled before it starts running. A test program took around 300ms to start up, and with AOT this doubled to 600ms after page load. The same program as bytecode started up in 150ms with AOT and slightly less without.

Of course there is a cost. The Emterpreter code runs anything from six to twenty times slower. Clearly, if you are using asm.js, speed of execution is going to be important. A partial solution is to mark some routines as critical and leave them as asm.js. This minimises load time for the majority of the code but leaves the asm.js to run when it is needed.

This works, but the best solution is to use a two-phase startup. First load the emterpreted code and then load the asm.js in the background. With this change we get a strange hybrid behavior. The code first loads in about 200ms but runs at about a fifth of the speed, and then after 900ms we get back to full asm.js speed.
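To make the idea of "bytecode as data plus an interpreter in JavaScript" a little more concrete, here is a minimal sketch. It is not the Emterpreter's actual bytecode format or interpreter: the opcodes, the stack-machine design, and the names `OP`, `interpret`, `impl` and `installFastVersion` are all hypothetical, chosen only to illustrate the shape of the scheme, including the two-phase swap from interpreted code to a fast version.

```javascript
// Hypothetical opcodes for a toy stack machine. The real Emterpreter
// bytecode is different and is not reproduced here.
const OP = { PUSH: 0, ADD: 1, MUL: 2, RET: 3 };

// The "program" is plain binary data, so the browser does not have to
// parse it as JavaScript. This one computes (2 + 3) * 4.
const bytecode = new Uint8Array([
  OP.PUSH, 2,
  OP.PUSH, 3,
  OP.ADD,
  OP.PUSH, 4,
  OP.MUL,
  OP.RET,
]);

// A minimal interpreter loop: fetch an opcode, dispatch on it, repeat.
function interpret(code) {
  const stack = [];
  let pc = 0;
  for (;;) {
    const op = code[pc++];
    switch (op) {
      case OP.PUSH:
        stack.push(code[pc++]); // operand follows the opcode
        break;
      case OP.ADD: {
        const b = stack.pop();
        const a = stack.pop();
        stack.push(a + b);
        break;
      }
      case OP.MUL: {
        const b = stack.pop();
        const a = stack.pop();
        stack.push(a * b);
        break;
      }
      case OP.RET:
        return stack.pop();
      default:
        throw new Error("unknown opcode " + op + " at " + (pc - 1));
    }
  }
}

// Phase one: callers go through a small indirection that starts out
// pointing at the slow, interpreted implementation.
const impl = { compute: () => interpret(bytecode) };
const slowResult = impl.compute();

// Phase two: once the fast code is ready, swap it in. The arrow function
// here merely stands in for compiled asm.js arriving in the background.
function installFastVersion() {
  impl.compute = () => (2 + 3) * 4;
}
```

Calling `impl.compute()` before and after `installFastVersion()` gives the same answer; only the speed of the path behind it would differ, which is the point of the two-phase startup described above.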

The whole Emterpreter idea is experimental, but you can try it out and see if the mix of low latency and eventual full performance works for you. Personally, I'm still trying to cope with the idea of C/C++ to JavaScript, to bytecode, run by a JavaScript interpreter.

More Information

The Emterpreter: Run code before it can be parsed

Related Articles

Mozilla Enhances Browser-Based Gaming

WebKit JavaScript Goes Faster Thanks To LLVM

Firefox Runs JavaScript Games At Native Speed

Java, ASM.js Or Native - Which Is Faster?


Last Updated ( Wednesday, 25 February 2015 )