| A Robot Finally Learns To Walk |

| Written by Lucy Black | |||

| Sunday, 11 April 2021 | |||


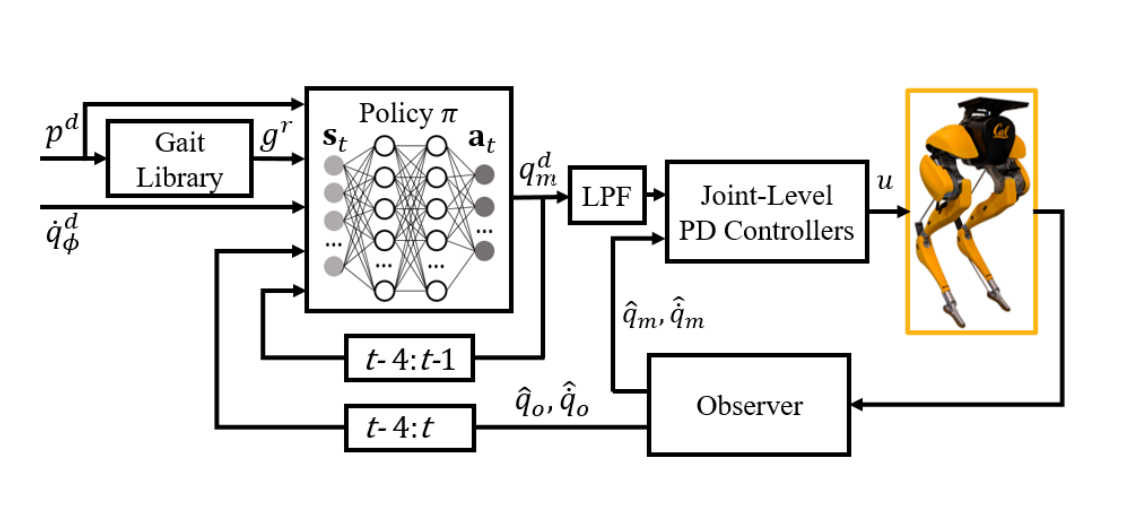

The emphasis here is on "learns". Robots have been strutting their stuff for a while, with decreasing amounts of humour as they slowly manage not to fall over. What is new is that Cassie has taught itself to walk from scratch via reinforcement learning - no teacher needed. If you have seen any of the simulations of robots trying to learn to walk by various methods, it might have occurred to you to ask why no one has tried it with real robots. Presumably the reason has something to do with the cost and the dangers involved in letting a real robot fail. A team at UC Berkeley decided the time was right to let a real robot learn the way we humans do - and it seems to have worked.

Even so, they didn't let the robot learn by falling over - instead they used the safer method of letting it learn in a simulation first and then trying the result out on the real thing. The important point is that the learned behaviors seem to be better than programmed alternatives:

"The learned policies enable Cassie to perform a set of diverse and dynamic behaviors, while also being more robust than traditional controllers and prior learning-based methods that use residual control. We demonstrate this on versatile walking behaviors such as tracking a target walking velocity, walking height, and turning yaw."

The team plans to extend the method to more dynamic and agile behaviors and thinks that the simulation-to-real-robot approach can be extended to other situations.
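To make the idea of "tracking a target walking velocity, walking height, and turning yaw" a little more concrete, here is a minimal sketch of the kind of reward a reinforcement learner could be given for such a task. It is not taken from the paper: the `Command` class, the `tracking_reward` function, the weights and the `scale` value are all assumptions made up for illustration.

```python
import math
from dataclasses import dataclass


@dataclass
class Command:
    """Hypothetical target the walking policy is asked to track."""
    velocity: float  # target walking velocity
    height: float    # target walking height
    yaw: float       # target turning yaw


def tracking_reward(cmd, velocity, height, yaw,
                    weights=(0.5, 0.3, 0.2), scale=5.0):
    """Illustrative reward in (0, 1]; 1.0 means the command is matched exactly.

    Each term decays smoothly as the measured value drifts from its target.
    The weights and scale are arbitrary choices, not values from the paper.
    """
    errors = (cmd.velocity - velocity, cmd.height - height, cmd.yaw - yaw)
    return sum(w * math.exp(-scale * e * e) for w, e in zip(weights, errors))


cmd = Command(velocity=1.0, height=0.9, yaw=0.0)
on_target = tracking_reward(cmd, velocity=1.0, height=0.9, yaw=0.0)
too_slow = tracking_reward(cmd, velocity=0.4, height=0.9, yaw=0.0)
print(on_target > too_slow)
```

In a setup like this the learner is never told how to move its legs. It only sees a higher number when the robot's measured motion is closer to what was asked for, in simulation first and then on the real machine.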

More Information

Reinforcement Learning for Robust Parameterized Locomotion Control of Bipedal Robots

Zhongyu Li, Xuxin Cheng, Xue Bin Peng, Pieter Abbeel, Sergey Levine, Glen Berseth and Koushil Sreenath

Related Articles

The Virtual Evolution Of Walking

The Amazing Dr Guero And His Walking Robots


| Last Updated ( Sunday, 11 April 2021 ) |