Access LLMs From Java code With Semantic Kernel

Written by Nikos Vaggalis

Tuesday, 29 August 2023

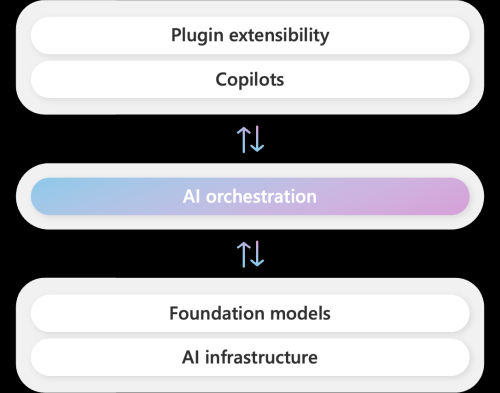

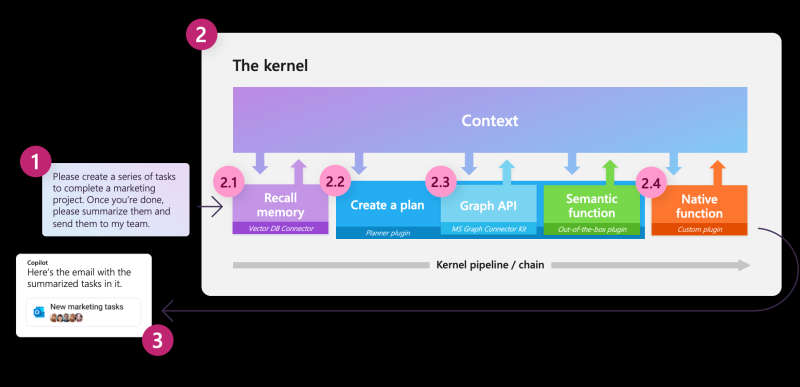

You can now do this thanks to Microsoft's Semantic Kernel SDK, which integrates Large Language Models (LLMs) with conventional programming languages. With Semantic Kernel for Java you can now integrate the OpenAI and Azure OpenAI services with your Java code to unleash the power of AI in your apps. The project grew out of GitHub Copilot. At the center of Copilot's stack lies an AI orchestration layer that allows us to combine AI models and plugins to create brand new experiences. Semantic Kernel sprang out of this as an SDK when Microsoft wanted to give developers the capability to build their own Copilot experiences. With the Kernel you can use the patterns driving Copilot, or even Bing Chat, in your own code and programming languages. Previously only C# and Python were available, but now you can use Java too.

Its main components are:

To use it, first make sure that you have an OpenAI API key or an Azure OpenAI service key. Then you have to build the Semantic Kernel, which requires OpenJDK 17 or newer.
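Both prerequisites can be checked before starting the build. What follows is only a minimal sketch: the `Preflight` class and the environment variable names `OPENAI_API_KEY` and `AZURE_OPENAI_API_KEY` are assumptions made for illustration, not names that Semantic Kernel requires.

```java
import java.util.Map;

/** Pre-flight check for the two prerequisites; a hypothetical helper, not part of the SDK. */
final class Preflight {
    // Assumed variable names, chosen for this sketch only.
    static final String OPENAI_KEY_VAR = "OPENAI_API_KEY";
    static final String AZURE_KEY_VAR = "AZURE_OPENAI_API_KEY";

    /** True when the given Java feature version meets the JDK 17 minimum. */
    static boolean javaIsSupported(int featureVersion) {
        return featureVersion >= 17;
    }

    /** True when at least one of the two keys is present and not blank. */
    static boolean hasApiKey(Map<String, String> env) {
        return isSet(env.get(OPENAI_KEY_VAR)) || isSet(env.get(AZURE_KEY_VAR));
    }

    private static boolean isSet(String value) {
        return value != null && !value.isBlank();
    }

    public static void main(String[] args) {
        // Runtime.version().feature() returns the major version of the running JDK.
        int feature = Runtime.version().feature();
        System.out.println("Java " + feature + " supported: " + javaIsSupported(feature));
        System.out.println("API key found: " + hasApiKey(System.getenv()));
    }
}
```

Passing the environment in as a `Map` keeps the check easy to try out without touching real credentials.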

Clone the repo:

git clone -b experimental-java https://github.com/microsoft/semantic-kernel/

and finally build the Semantic Kernel:

cd semantic-kernel/java

The SDK is also available on Maven Central under groupId com.microsoft.semantic-kernel.

Code examples can be found on the official GitHub repo, but the procedure is pretty much:

Step 1: Create a Kernel

Step 2: Add a Function

Step 3: Instruct the LLM to use this Function, plus additional settings

Step 4: Collect Functions into Plugins

Step 5: Represent Plugins as Java code

Step 6: Give memory to the LLM

One final note is that because Java support is still experimental, the documentation is scarce compared to that for C# and Python. So if you want to understand the concepts, have a look at the official examples for those languages too.
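To get a feel for how the steps fit together before reading the official examples, here is a toy model in plain Java. It is a sketch for illustration only: `ToyKernel`, its methods, the `WriterPlugin` name and the `{{$input}}` placeholder handling are all hypothetical and are not the Semantic Kernel API, and the "model" is a stub so that the code runs offline. It loosely covers steps 1 to 4 and step 6; step 5 is not modelled.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.function.UnaryOperator;

/** Toy model of the workflow; none of these types belong to Semantic Kernel. */
final class ToyKernel {
    /** Stand-in for a completion service: prompt in, text out. */
    private final UnaryOperator<String> llm;
    /** Step 4: plugin name -> (function name -> prompt template). */
    private final Map<String, Map<String, String>> plugins = new LinkedHashMap<>();
    /** Step 6: earlier exchanges, prepended to each new prompt. */
    private final List<String> memory = new ArrayList<>();

    /** Step 1: create a kernel around some model. */
    ToyKernel(UnaryOperator<String> llm) {
        this.llm = llm;
    }

    /** Step 2: add a function, here a prompt template with an {{$input}} slot. */
    void addFunction(String plugin, String name, String template) {
        plugins.computeIfAbsent(plugin, k -> new LinkedHashMap<>()).put(name, template);
    }

    /** Step 3: render the template and hand the resulting prompt to the model. */
    String invoke(String plugin, String name, String input) {
        Map<String, String> functions = plugins.get(plugin);
        if (functions == null || !functions.containsKey(name)) {
            throw new IllegalArgumentException("Unknown function " + plugin + "." + name);
        }
        String prompt = String.join("\n", memory)
                + (memory.isEmpty() ? "" : "\n")
                + functions.get(name).replace("{{$input}}", input);
        String answer = llm.apply(prompt);
        memory.add(input + " -> " + answer); // remember the exchange for later calls
        return answer;
    }

    public static void main(String[] args) {
        // A fake model that only reports the prompt length, so no API key is needed.
        ToyKernel kernel = new ToyKernel(prompt -> "[" + prompt.length() + " chars seen]");
        kernel.addFunction("WriterPlugin", "summarize", "Summarize in one line: {{$input}}");
        System.out.println(kernel.invoke("WriterPlugin", "summarize", "Semantic Kernel now supports Java."));
        System.out.println(kernel.invoke("WriterPlugin", "summarize", "It needs OpenJDK 17 or newer."));
    }
}
```

The second call sees a longer prompt than the first because the earlier exchange is prepended, which is the point of giving the model memory. In the real SDK the stub would be replaced by an OpenAI or Azure OpenAI completion service.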



Last Updated (Tuesday, 29 August 2023)