.NET Core The Details - Is It Enough?

Written by Mike James

Friday, 05 December 2014

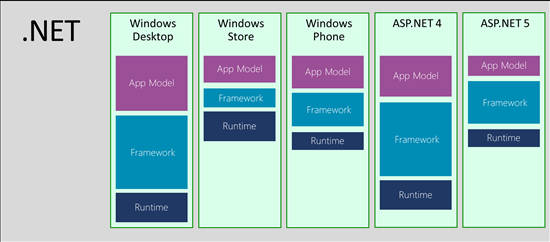

Microsoft has just provided some more details about .NET Core - the open source project it recently created. There is some good news and some bad, but possibly more bad in the short term.

In a long and detailed blog post the nature of .NET Core is revealed. However, although Immo Landwerth provides a lot of detail, it isn't an easy job making sense of it.

First of all, the ancient history of the forking of .NET is explained. In case you didn't live through the experience, .NET currently comes in a number of flavours that have a range of incompatibilities. Perhaps the most irritating was the desktop/Silverlight split, but as Silverlight is no more this is just a memory. The splits that remain important are shown in a diagram:

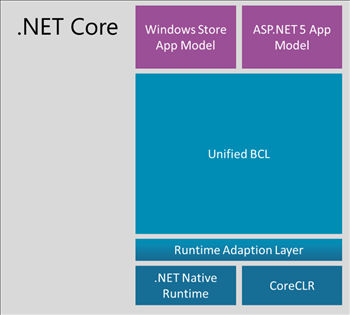

Not everything in this diagram makes perfect sense. Windows Store apps, for example, aren't .NET apps as they run under WinRT. But let us take this diagram more as a statement of the problem Microsoft is trying to solve.

The first attempt at solving the problem was the introduction of portable class libraries. These were essentially wrappers around the different versions of the code - not a good idea, but it did allow Microsoft to invent Universal Apps.

Standing back from the situation for a moment, you can't help but ask how things got into this state. When .NET was introduced it was bundled with the OS and could be considered a part of the OS. Applications made use of the core framework without having to bring it with them. There were, and are, versioning issues, but in the main it was an improvement over DLL hell.

It seems that the breakthrough moment for Microsoft was when it realized that NuGet was being used to distribute updates to the framework as part of your application. The idea of a central .NET system started to look less attractive than a local bundling of what was needed with each app.

Now enter .NET Core:

".NET Core is a modular implementation that can be used in a wide variety of verticals, scaling from the data center to touch based devices, is available as open source, and is supported by Microsoft on Windows, Linux and Mac OSX."

This is wishful thinking in that .NET Core doesn't actually exist. All Microsoft has done is to start an open source project with the aim of creating something that fits the bill.

The key to building this new world is .NET Native. This takes us back to technology that is reminiscent of the link editor. It merges the framework with the application, removes the modules that aren't used, and then generates the native code.

".NET Core is essentially a fork of the NET Framework whose implementation is also optimized around factoring concerns. Even though the scenarios of .NET Native (touch based devices) and ASP.NET 5 (server side web development) are quite different, we were able to provide a unified Base Class Library (BCL)."

This isn't quite as amazing as it sounds, as the BCL isn't really composed of classes that should be platform dependent in the first place. The next diagram shows the structure of .NET Core:

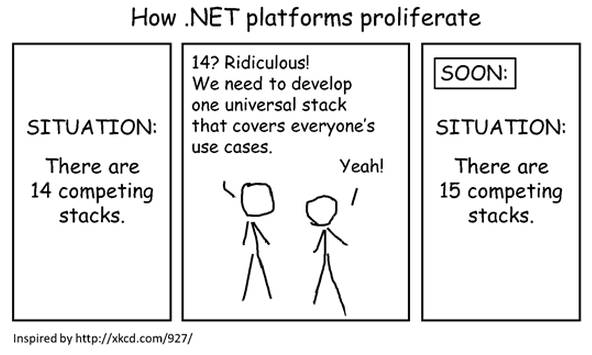

This diagram also raises many questions. What is CoreCLR doing next to Native Runtime? Presumably this represents a choice of runtime environments, which is transformed to mate with the Unified BCL by the Runtime Adaption Layer - which does what? Why are only Windows Store apps, i.e. WinRT apps, and ASP.NET 5 apps shown? What happens to desktop and ASP.NET 4? Where is Windows Phone? It seems to be a common problem with diagrams that concern .NET Core - the technologies go missing! Much later, both Windows Store and Windows Phone apps are mentioned as being subsets of .NET Core, and hence the new BCL classes will work for both of them.

Apart from the fact that the BCL will be one set of classes for the different environments, something it probably should have been from the start, it all seems vague. One thing that does seem certain is the shift from central provision of the framework to using NuGet to deliver custom packages. The key piece of information seems to be:

"For the BCL layer, we’ll have a 1-to-1 relationship between assemblies and NuGet packages."

This suggests that for other layers the relationship will be something other than 1-to-1. So in this case is NuGet going to have to supply the correct package depending on the deployment? Semantic versioning is going to be used - which again raises the question of why it wasn't already in use.

The key idea is:

"Of course, certain components, such as the file system, require different implementations. The NuGet deployment model allows us to abstract those differences away. We can have a single NuGet package that provides multiple implementations, one for each environment. However, the important part is that this is an implementation detail of this component. All the consumers see a unified API that happens to work across all the platforms."

Well, this is nice but it's not rocket science. When .NET was first introduced the idea was that it would provide an OS-independent API that you could write to.
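The arrangement described in that quote, one public API with an implementation selected per environment, can be sketched in a few lines. What follows is only an illustration written in Python, not .NET or NuGet code, and it makes no claim about how NuGet actually selects an implementation. Every name in it (`FileSystem`, `resolve_file_system`, `config_path`, the platform keys) is hypothetical.

```python
import sys
from abc import ABC, abstractmethod
from typing import Optional


class FileSystem(ABC):
    """The unified API: the only thing consumer code is allowed to see."""

    @abstractmethod
    def join(self, *parts: str) -> str:
        """Join path segments using the platform's separator."""


class WindowsFileSystem(FileSystem):
    """Implementation shipped for Windows targets (hypothetical)."""

    def join(self, *parts: str) -> str:
        return "\\".join(parts)


class UnixFileSystem(FileSystem):
    """Implementation shipped for Linux and Mac targets (hypothetical)."""

    def join(self, *parts: str) -> str:
        return "/".join(parts)


# One "package", several implementations, keyed by target environment.
_IMPLEMENTATIONS = {
    "windows": WindowsFileSystem,
    "unix": UnixFileSystem,
}


def resolve_file_system(platform: Optional[str] = None) -> FileSystem:
    """Choose the implementation; this is the hidden implementation detail."""
    if platform is None:
        # Assumption for the sketch: detect the host platform at run time.
        platform = "windows" if sys.platform.startswith("win") else "unix"
    return _IMPLEMENTATIONS[platform]()


def config_path(fs: FileSystem) -> str:
    """Consumer code: written once against the unified API."""
    return fs.join("app", "settings.json")
```

Calling `config_path(resolve_file_system("unix"))` and `config_path(resolve_file_system("windows"))` runs the same consumer code against two implementations. The point of the sketch is that nothing here requires the API itself to fragment, only the implementations behind it.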
The fact that .NET's original OS-independent API fell apart into incompatible APIs is entirely Microsoft's fault and was mostly driven by internal politics. There was never any technical need to fragment the APIs, even though there might have been reasons to fragment the implementations.

The blog post ends with the news that work is to continue on old-fashioned .NET and we can look forward to Framework 4.6, but not as open source. As for Mono:

"Mono is alive and well with a large ecosystem on top. That’s why, independent of .NET Core, we also released parts of the .NET Framework Reference Source under an open source friendly license on GitHub. This was done to allow the Mono community to close the gaps between the .NET Framework and Mono by using the same code. However, due to the complexity of the .NET Framework we’re not setup to run it as an open source project on GitHub. In particular, we’re unable to accept pull requests for it."

So now there are three .NET forks - the .NET Framework, Mono and .NET Core. It would be much more impressive if Microsoft had open sourced .NET proper as an ongoing project - not to mention Silverlight, Windows Forms, WPF and so on...

The blog post contains a spoof of one of xkcd's best known works, but I think it might just come back to haunt .NET Core.

It is difficult to say how long we will have to wait for .NET Core and how much impact it will have. The project seems to be necessary mainly to clear up the mess that Microsoft has made of the .NET Framework. The only really new idea seems to be "app-local" deployment, which means moving away from shared libraries to code that is bound into every app. Well, in most cases storage and memory aren't an issue any more - are they?

Looking back on the history, you can't help but think that if what we want is a unified API to code to, then perhaps a unified Windows might have been a good idea - which is what we had with Silverlight on the desktop, browser and Windows Phone. The .NET Framework started out as the unified API. For those who remember what .NET was supposed to be a solution to, it is very sad that we are going round the loop another time. We need a unified Windows on all devices; then the frameworks would be unified, on Windows at least, by default.

It is good that Microsoft is trying to do something about past mistakes and that it is revitalizing .NET enough for those who cling on to the technology to be enthusiastic. Is it enough of a vision to bring anyone who left the fold back to it? I seriously doubt it at the moment.


Last Updated ( Friday, 05 December 2014 )