| Automatic Off-By-One Detection |

| Written by Mike James | |||

| Wednesday, 31 March 2021 | |||

|

We all know about off-by-one errors and also know that, however aware of the problem you might be, they manage to bite us eventually. What about applying the power of deep learning? Perhaps this magic could save us from this most pernicious problem.

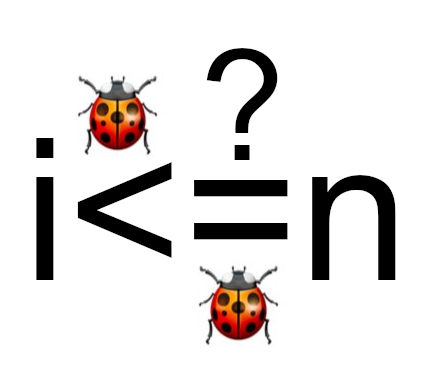

Off-by-one errors are common especially, or so I think, in languages that use the C-style For loop coupled with zero-based arrays. You may declare an array as being of size 10, but the for loop goes to <10 simply because an array with 10 elements has an index of 9 for its last element. Is it any wonder we get confused and write arraysize<10 when it should be arraysize<=10. This, of course, is just the beginning. Whenever you implement any sort of algorithm that involves boundaries off-by-one errors abound. So how can we get rid of such errors? Far be it from me to suggest that we get rid of the C-style For loop and ditch zero-based arrays. Researchers at Delft University have tried to detect off-by-one errors using deep learning. There are other examples of advanced machine learning helping out with programming, so much so that you might even suspect that programming was in danger of being automated. If off-by-one errors are anything to go by you can sleep easy - they seem to be hard to spot. If you introspect a little, never a good way of proving anything, you will probably conclude that off-by-one errors are hard because they are about deep semantics rather than shallow syntax. If you look at a potential off-by-one error you need a lot of context to figure out if it is an error. You can't usually say if it is the length of the array or the last element that is being referenced. You can't say if the operation includes or excludes the boundary. You can't say if the maximum value should be n or n+1 or n+m or... Such considerations are non-local. The paper first posts some good news: "We train different models on approximately 1.6M examples with faults in different boundary conditions. We achieve a precision of 85% and a recall of 84% on a balanced dataset, but lower numbers in an imbalanced dataset." Of course, the real world is imbalanced because there are far fewer real errors then non-errors. The precision dropped to less than 30% in this more real situation. Then things get a little less promising: "We also perform tests on 41 real-world boundary condition bugs found from GitHub, where the model shows only a modest performance. Finally, we test the model on a large-scale Java code base from Adyen, our industrial partner. The model reported 36 buggy methods, but none of them were confirmed by developers." Told you that such errors were hard. The deep learning methods shouldn't be written off just yet, however, as alternative tools don't do any better: The industry has been relying on static analysis tools, such as SpotBugs2 or PVS-Studio3 . SpotBugs promises to identify possible infinite loops, as well as array indices, offsets, lengths, and indexes that are out of bounds. PVSStudio also tries to identify mistakes in conditional statements and indexes that are out of bounds in array manipulation. And while they can indeed find some of them, many of them go undetected. As we later show in this paper, none of the realworld off-by-one errors could be detected by the state-of-thepractice static analysis tools. We also shouldn't be too hard on the static analysis tools. As I have already said, off-by-one errors are semantic and static analsys doesn't do semantics well. The bottom line of all of this is, something you might already assume, off-by-one errors are difficult. As the old saying goes, there are three hard problems in programming - naming things and off-by-one errors.

More InformationLearning Off-By-One Mistakes: An Empirical Study Hendrig Sellik, Onno van Paridon, Georgios Gousios and Maurício Aniche Related ArticlesCode Digger Finds The Values That Break Your Code Instabug Analyzes 100,000,000 Bugs Finding Bugs In The First C++ Compiler To be informed about new articles on I Programmer, sign up for our weekly newsletter, subscribe to the RSS feed and follow us on Facebook or Linkedin.

Comments

or email your comment to: comments@i-programmer.info

|

|||

| Last Updated ( Wednesday, 31 March 2021 ) |