Perl Threading

Written by Nikos Vaggalis

Wednesday, 06 April 2011

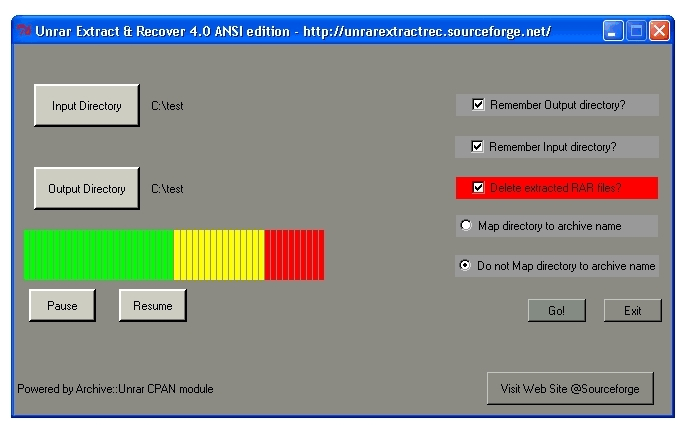

UPDATE: There is a revised version of this article, Password Cracking RAR archives with Perl.

In this article we explore how to turn a single-threaded Perl/Tk GUI application into a multi-threaded one, examining the steps, the obstacles and the benefits. Along the way we cover concepts such as callbacks, events, Windows messages, thread affinity and thread safety.

For code demonstration purposes we use Perl, the Tk toolkit and an open source application of mine, Unrar Extract and Recover, which is the real-world example this article is based on. The application started out single-threaded and evolved into a threaded one. Using Perl also gives us the opportunity to look at the workings from a low-level view, since frameworks like .NET hide a lot of the underlying complexity.

The UE&R application

First a few words on the Unrar Extract and Recover application. I always found the usual procedure cumbersome, to say the least: choosing a compressed file or files by left-clicking on them, right-clicking to extract the contents, choosing an extraction directory and then, if the archive was password protected, providing a password. There are other ways of doing the same thing, but none of them was intuitive, and I thought there could be an easier way, where the UI doesn't get in your way and doesn't waste so much of your time.

Nowadays, with time being a precious commodity and compression an integral part of everybody's computing life, the need to automate repetitive tasks is more pressing than ever. UE&R was born to automate the repetitive operation of extracting .rar files and so save valuable time. Since I am a fan of the 'keep it simple' principle, I gave the application 'fire and forget' functionality: place your files together, choose an input and an output directory and just click 'Go'. This basic functionality is complemented by convenient options such as 'Delete extracted files' and 'Map directory to archive name'.
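To make the 'fire and forget' idea concrete, here is a minimal sketch of the first step such a batch tool needs: scanning the input directory for .rar files and deciding where each one goes. It is not UE&R's code; the helper `plan_extraction` and its arguments are hypothetical, and the optional per-archive subdirectory only mimics the spirit of the 'Map directory to archive name' option.

```perl
use strict;
use warnings;
use File::Spec;

# Hypothetical helper (not part of UE&R): list every .rar file in $in_dir and
# pair it with the directory it would be extracted into. When $map_dir is true
# each archive gets its own subdirectory named after it.
sub plan_extraction {
    my ($in_dir, $out_dir, $map_dir) = @_;
    opendir(my $dh, $in_dir) or die "Cannot open $in_dir: $!";
    my @entries = grep {
        /\.rar\z/i && -f File::Spec->catfile($in_dir, $_)
    } readdir $dh;
    closedir $dh;
    my @archives = sort @entries;    # deterministic processing order

    my @plan;
    for my $file (@archives) {
        (my $name = $file) =~ s/\.rar\z//i;    # archive name without extension
        my $target = $map_dir ? File::Spec->catdir($out_dir, $name) : $out_dir;
        push @plan, [ File::Spec->catfile($in_dir, $file), $target ];
    }
    return @plan;
}
```

Each `[archive, target]` pair would then be handed to the extraction routine; in UE&R that role is played by Archive::Unrar's `process_file()`, whose exact calling details are not reproduced here.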
So what does Unrar Extract and Recover do?

1. Do you compress your files to save storage space, and then get frustrated at having to go through them one by one when you want to extract them? Use the fire-and-forget functionality: just point the program at the directory holding the archives and it will automatically extract each one (optionally into its own subdirectory) with no user intervention. This spares you the dull task of going through the files manually and lets you use your time productively.
2. Do you protect your valuable data with a password when you compress it? UE&R frees you from having to remember individual archive passwords by keeping a single password repository, so you can use as many passwords as you need. Keep all your passwords in one file (password_file.txt), essentially a password wordlist, and feed it to the program when extracting; it checks each password in turn against all the files until it finds the correct one, then continues with the extraction as usual.
3. Another use of the software is password recovery. Have you forgotten the password of a protected archive? UE&R can retrieve it using the dictionary approach: give it a wordlist of your common passwords and it will try every word against the archive. Once it finds the password it goes on to extract the archive. UE&R cannot recover a password that is not in the wordlist, since it has no brute-force functionality. Personally, I think the time a brute-force attack takes to break a password makes the effort not worthwhile, so I prefer a dictionary, despite its limitations.
4. A pleasant side effect of its design is that it can be used as an automated file integrity validation tool. When batch processing your files it checks for broken multipart archives, corrupted headers, CRC errors and so on, and logs all errors to a text file, so you can check that your files are valid without having to pay attention to the process.
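The dictionary approach from items 2 and 3 boils down to a simple loop over the wordlist. The sketch below is an assumption-laden illustration, not UE&R's implementation: `find_password` is a hypothetical name, and the actual "does this password open the archive?" test, which UE&R performs through unrar.dll, is abstracted into a caller-supplied code reference.

```perl
use strict;
use warnings;

# Minimal dictionary-approach sketch. $try is a caller-supplied code reference
# (hypothetical) that returns true when a password opens the archive.
sub find_password {
    my ($wordlist, $try) = @_;
    open(my $fh, '<', $wordlist) or die "Cannot open $wordlist: $!";
    while (my $candidate = <$fh>) {
        $candidate =~ s/\r?\n\z//;    # strip Unix or Windows line endings
        next if $candidate eq '';     # skip blank lines
        if ($try->($candidate)) {
            close $fh;
            return $candidate;        # first password that works
        }
    }
    close $fh;
    return;                           # undef: no password in the list worked
}
```

Returning on the first match mirrors the behaviour described above: once the right password is found, the search stops and extraction can proceed.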

The interface exposes most of these facilities.

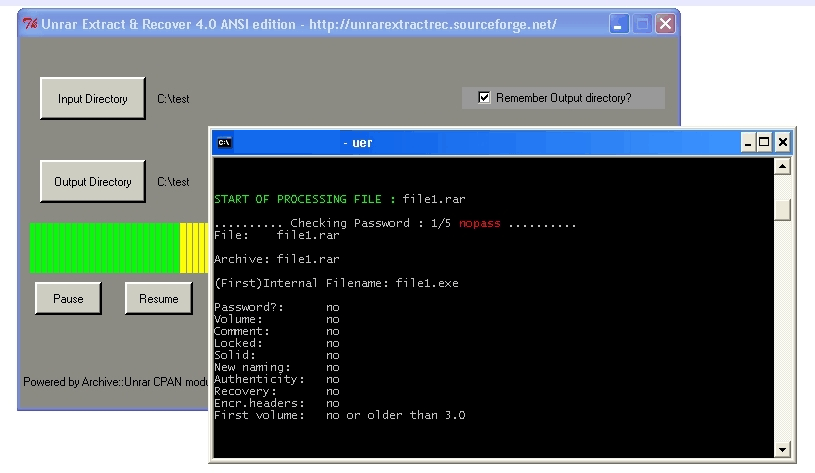

Extracting a RAR

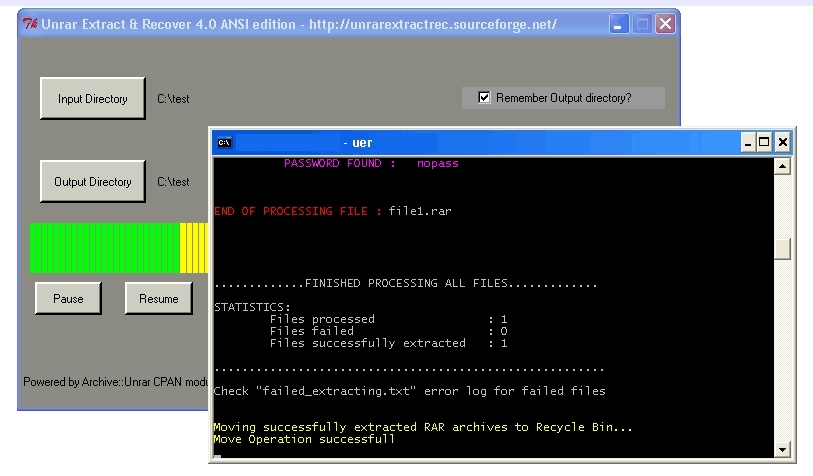

Job finished

You can download Unrar Extract and Recover here.

Components of UE&R

UE&R is written in Perl and consists of the following parts:

The Archive::Unrar module

This is the backbone, providing the core functionality. It interacts with RARLAB's unrar.dll (http://www.rarlab.com/), written in C++, which encapsulates all the functionality for manipulating .rar archives (extracting only, not compressing). The module also reads the .rar header format in order to make important decisions. There is much more going on inside the module, but I will briefly introduce the most crucial parts:

- `list_files_in_archive()` lists the details embedded in the archive (the files bundled into it and the archive's comments).
- `extract_headers()` reads the headers.
- `process_file()` is the public procedure that is called by the client.

`process_file()` receives all the information it needs from the client (file, output directory, password, an optional callback, and the parameter ERAR_MAP_DIR_YES, which controls whether to create a directory named after the archive) in the form of a hash of named parameters (listings: Unrar_Extract_and_Recover.pl, Unrar.pm).

It then calls the private `extract_headers()` function, which runs various tests on the file by examining its headers. It checks whether the file is valid, whether it is multipart and whether it is encrypted, extracts the embedded comments into an internal buffer handled by Perl for displaying to the user later on, and runs some more complex tests using bitwise operations such as this one from Unrar.pm:

`if (!($flagsEX & 256) && !($flagsEX & 128))`

After `extract_headers()` returns, execution resumes inside `process_file()`, which uses the information collected by `extract_headers()` to actually extract the file.

Multipart .rar archives are chained together: their headers contain the file names of the other parts, so when you extract just one part of the chain, the rest of the parts are extracted as well. We use that to our advantage for caching purposes.
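The bitwise test above is easier to read once the bits have names. The sketch below assumes that `$flagsEX` holds the `Flags` field of unrar.dll's `RAROpenArchiveDataEx` structure; the `ROADF_*` values are taken from the unrar.dll documentation as I understand it, so verify them against the version you use. Under that assumption, 128 means "block headers are encrypted" and 256 means "first volume", so the article's condition is true for an archive that is neither a first volume nor header-encrypted. `describe_flags` is a hypothetical helper, not part of Archive::Unrar.

```perl
use strict;
use warnings;

# Flag bits of RAROpenArchiveDataEx.Flags as documented for unrar.dll
# (assumed to be what $flagsEX holds; verify against your unrar.dll version).
use constant {
    ROADF_VOLUME      => 0x0001,    # archive is a volume of a multipart set
    ROADF_COMMENT     => 0x0002,    # archive comment present
    ROADF_SOLID       => 0x0008,    # solid archive
    ROADF_ENCHEADERS  => 0x0080,    # block headers are encrypted (decimal 128)
    ROADF_FIRSTVOLUME => 0x0100,    # first volume of a set (decimal 256)
};

# Hypothetical helper: turn the raw flag word into named booleans.
sub describe_flags {
    my ($flagsEX) = @_;
    my %info = (
        multipart         => ($flagsEX & ROADF_VOLUME)      ? 1 : 0,
        has_comment       => ($flagsEX & ROADF_COMMENT)     ? 1 : 0,
        solid             => ($flagsEX & ROADF_SOLID)       ? 1 : 0,
        encrypted_headers => ($flagsEX & ROADF_ENCHEADERS)  ? 1 : 0,
        first_volume      => ($flagsEX & ROADF_FIRSTVOLUME) ? 1 : 0,
        # The article's test: neither bit 256 nor bit 128 is set.
        not_first_and_plain_headers =>
            (!($flagsEX & ROADF_FIRSTVOLUME) && !($flagsEX & ROADF_ENCHEADERS)) ? 1 : 0,
    );
    return \%info;
}
```

Named constants do not change the logic of the original check; they only make it clear which header properties the module is reacting to.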
After a file has been successfully extracted by `process_file()`, its file name with its absolute path, together with its password (if it was password protected), is forwarded to `list_files_in_archive()`. This re-opens the file, which is straightforward even for a password-protected archive since the password has already been retrieved and does not have to be looked up again, extracts the file names of the rest of the parts and stores them in a hash (listing: Unrar.pm). Later on, when a file that has not yet been processed is encountered while traversing the directory, it is looked up in the hash; if there is a match, there is no need to process it and an `ERAR_CHAIN_FOUND` code is reported instead.

This matters because UE&R is a batch application designed primarily for speed: all the files are thrown into a bucket (the common directory), and individual files cannot be selected because the process has to be fully automated. Caching therefore plays a very important role performance-wise, and is particularly beneficial for multipart archives. For example, suppose we have three files that are parts of the same multipart archive: File1.part1.rar, File1.part2.rar and File1.part3.rar. In a normal application the user would select just the files they wanted to extract by clicking on them. A batch application cannot do that; it goes through all the files regardless. The only information it has at its disposal comes from the files' headers, and encrypted files reveal very little: their headers are encrypted, and the only thing exposed is whether they are encrypted or not (which is why the module handles them differently). So after we successfully process the first part, we also get the rest of the file names in the chain and cache them in the hash.
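The caching scheme can be sketched in a few lines. This is an illustration under stated assumptions, not the module's code: the hash name `%processed`, the helper `run_batch` and the `$extract` callback (which stands in for `process_file()` plus the `list_files_in_archive()` lookup, returning the other chain members on success and undef on failure) are all hypothetical.

```perl
use strict;
use warnings;

# Global cache of archive parts extracted successfully (hypothetical name;
# the module keeps its own global hash for this purpose).
our %processed;

# Hypothetical batch loop. $extract tries to extract one file and returns an
# array reference with the names of the other parts of its chain on success
# (an empty array for a single-part archive), or undef on failure.
sub run_batch {
    my ($files, $extract) = @_;
    my @log;
    for my $file (@$files) {
        if (exists $processed{$file}) {
            # Already extracted as part of an earlier chain: skip it
            # (the module reports this case as ERAR_CHAIN_FOUND).
            push @log, "$file: skipped, found in chain";
            next;
        }
        my $chain = $extract->($file);
        if (defined $chain) {
            # Cache this file and every other part of its chain.
            $processed{$_} = 1 for $file, @$chain;
            push @log, "$file: extracted";
        }
        else {
            push @log, "$file: failed";    # failures never enter the cache
        }
    }
    return @log;
}
```

With File1.part1.rar, File1.part2.rar and File1.part3.rar in the directory, only the first part triggers an extraction; the other two are recognised from the cache.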
Therefore the process doesn't need to be repeated when the rest of the files are encountered later on during the directory traversal. Furthermore, only successfully extracted files are entered into this hash, so we instantly know which files failed and which did not. An added advantage of this approach is that, since the hash is global, the caller/client has access to it too. For example, UE&R uses it to delete the successfully extracted files (listing: Unrar_Extract_and_Recover.pl).
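Because only successful extractions are recorded, the 'Delete extracted files' option can safely be driven by the cache. The sketch below assumes a hash keyed by full path with a true value per extracted file; that layout and the helper name `delete_extracted` are hypothetical, since the original listing is not reproduced here.

```perl
use strict;
use warnings;

# Hypothetical helper: delete only the archives recorded as successfully
# extracted. $done is a reference to a hash keyed by full path; files that
# failed never made it into the hash and are left alone.
sub delete_extracted {
    my ($done) = @_;
    my @deleted;
    for my $path (sort keys %$done) {
        next unless $done->{$path} && -f $path;
        if (unlink $path) {
            push @deleted, $path;
        }
        else {
            warn "Could not delete $path: $!\n";
        }
    }
    return @deleted;
}
```

Failed archives stay on disk, so the user can inspect them alongside the error log.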


Last Updated (Monday, 13 March 2017)