Introduction To The Genetic Algorithm

Written by Mike James

Thursday, 18 February 2021


Some Examples Of GA In Action

Breeding A Face

Pareidoloop is a program which uses a GA approach to create a face that satisfies a face recognition algorithm - and all using JavaScript. If you go through enough generations the solutions should keep getting better as the population evolves towards a better fitness.
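As a rough illustration of that loop, here is a minimal Python sketch in which each individual is a list of random polygons. It is not Pareidoloop's code: the function names, the population size, the use of elitism and one-point crossover, and above all `stand_in_fitness` are assumptions made for the example. In the real program the fitness would be the score returned by a face detector; the placeholder here simply rewards polygons that sit near the centre of the image so that there is something to optimise.

```python
import random

def random_polygon(rng, n_vertices=3):
    # Hypothetical encoding: vertices in the unit square plus a grey level.
    return {
        "points": [(rng.random(), rng.random()) for _ in range(n_vertices)],
        "shade": rng.random(),
    }

def random_individual(rng, n_polygons=10):
    return [random_polygon(rng) for _ in range(n_polygons)]

def clamp(v):
    return min(1.0, max(0.0, v))

def mutate(individual, rng, rate=0.1, step=0.05):
    """Return a copy with some vertices and shades nudged, kept in [0, 1]."""
    child = []
    for poly in individual:
        points = []
        for x, y in poly["points"]:
            if rng.random() < rate:
                x = clamp(x + rng.uniform(-step, step))
                y = clamp(y + rng.uniform(-step, step))
            points.append((x, y))
        shade = poly["shade"]
        if rng.random() < rate:
            shade = clamp(shade + rng.uniform(-step, step))
        child.append({"points": points, "shade": shade})
    return child

def crossover(a, b, rng):
    """One-point crossover on the list of polygons."""
    cut = rng.randrange(1, len(a))
    return a[:cut] + b[cut:]

def stand_in_fitness(individual):
    """ASSUMPTION: placeholder for a face detector's confidence score.

    Rewards polygons whose centroid is close to the image centre.
    """
    total = 0.0
    for poly in individual:
        n = len(poly["points"])
        cx = sum(x for x, _ in poly["points"]) / n
        cy = sum(y for _, y in poly["points"]) / n
        total -= (cx - 0.5) ** 2 + (cy - 0.5) ** 2
    return total

def evolve(fitness, generations=50, pop_size=30, elite=5, seed=0):
    """Keep the best `elite` individuals, breed the rest from them."""
    rng = random.Random(seed)
    population = [random_individual(rng) for _ in range(pop_size)]
    history = []  # best fitness seen in each generation
    for _ in range(generations):
        population.sort(key=fitness, reverse=True)
        history.append(fitness(population[0]))
        parents = population[:elite]
        children = [
            mutate(crossover(rng.choice(parents), rng.choice(parents), rng), rng)
            for _ in range(pop_size - elite)
        ]
        population = parents + children
    population.sort(key=fitness, reverse=True)
    history.append(fitness(population[0]))
    return population[0], history
```

Because the elite individuals are carried over unchanged, the best fitness recorded in `history` can never go down from one generation to the next, which is the "keeps getting better" behavior in its simplest form. Calling `evolve(stand_in_fitness)` returns the best individual and that history.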

Image: Philip McCarthy

This is the idea behind Pareidoloop. It uses a face detection program written in JavaScript, taken from Liu Liu's Core Computer Vision library, which provides a range of image processing algorithms as well as face detection and is well worth knowing about.

Next, code up a way to generate random polygons such that the representation can take part in the GA. This part of the program is based on an earlier experiment with trying to breed the Mona Lisa - see Roger Alsing’s Evolution of Mona Lisa.

All you have to do next is run the GA and use the output of the face detection program as the measure of fitness of each individual in the population. Keep the generations turning over and eventually you will reach a reasonable arrangement of polygons which activates the face detection algorithm - i.e. it looks like a face.

Why Pareidoloop? The name comes from pareidolia, the term for the phenomenon of seeing things like faces in random textures. I'm not sure that this is quite pareidolia, in that the input isn't random as it is being designed for the purpose.

Is there any purpose to this? Unlikely, but it is a good demonstration of the GA in action and the images it creates are kind of spooky. There might be a call for artistic renderings of the eigenface of face detection, but mostly it's just fun.

You can find out more at: Pareidoloop

Evolving Soft Robots

The genetic algorithm can be used to evolve solutions to all types of problem. In this case it evolves "soft robots" with body parts built from a range of materials.

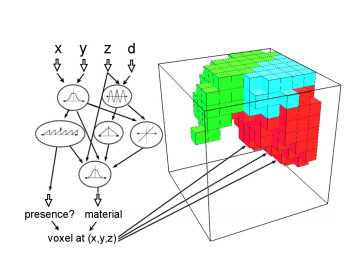

Building on the work of Karl Sims and his evolved virtual creatures, the team of researchers at Cornell allowed the robots to have bodies made of a mixture of soft and rigid materials. The idea is that evolving bodies using the genetic algorithm had hit a ceiling on the level of sophistication achievable because they were all restricted to mainly rigid components. More natural organisms might result from evolution applied to more natural materials.

The second novel feature of this experiment is the coding used. This is a common difficulty with implementing the genetic algorithm. You need to find a genetic coding that allows the full range of possible types to be represented in a natural way. The shape and behavior of the robots were determined using a Compositional Pattern Producing Network (CPPN). This is like a neural network, but the nodes can output using any function of the inputs. The network can be driven with the location in the body of the voxel (volume pixel) and it can output properties for that voxel. The network itself can be evolved using the genetic algorithm.
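To make the idea of a network "driven with the location of the voxel" concrete, here is a small Python sketch. It is only an illustration under stated assumptions, not the researchers' implementation: the topology is fixed, the four material labels in `MATERIALS` are hypothetical, and the weights in `example_genome` are arbitrary values picked by hand. What it does show is the two features described above: each hidden node applies its own function (sine, Gaussian, tanh), and the network is queried once per voxel with that voxel's coordinates.

```python
import math

# Hypothetical material labels, chosen for this example only.
MATERIALS = ["empty", "soft", "stiff", "active"]

# Unlike a conventional neural network, each node may use a different function.
ACTIVATIONS = {
    "sin": math.sin,
    "gauss": lambda v: math.exp(-v * v),
    "tanh": math.tanh,
}

def cppn(x, y, z, genome):
    """Map one voxel position to a material label.

    genome["hidden"]: list of (activation name, [wx, wy, wz, bias])
    genome["output"]: one row of weights over the hidden nodes per material
    """
    hidden = [
        ACTIVATIONS[name](w[0] * x + w[1] * y + w[2] * z + w[3])
        for name, w in genome["hidden"]
    ]
    scores = [
        sum(wi * hi for wi, hi in zip(row, hidden))
        for row in genome["output"]
    ]
    # The material with the highest score wins.
    return MATERIALS[scores.index(max(scores))]

def build_body(genome, size=4):
    """Query the network for every voxel in a size x size x size grid."""
    def scale(i):
        # Map grid index 0..size-1 onto the range [-1, 1].
        return 2.0 * i / (size - 1) - 1.0
    return {
        (i, j, k): cppn(scale(i), scale(j), scale(k), genome)
        for i in range(size)
        for j in range(size)
        for k in range(size)
    }

# Arbitrary hand-picked weights, purely for illustration.
example_genome = {
    "hidden": [
        ("sin", [3.0, 0.0, 0.0, 0.0]),
        ("gauss", [0.0, 2.0, 0.0, 0.0]),
        ("tanh", [0.0, 0.0, 1.5, 0.2]),
    ],
    "output": [
        [1.0, 0.0, -1.0],   # empty
        [0.0, 1.0, 0.0],    # soft
        [-1.0, 0.0, 1.0],   # stiff
        [0.5, 0.5, 0.5],    # active
    ],
}

body = build_body(example_genome)
```

The genome here is just the list of weights and function names, so a GA could mutate those values directly. The whole body is regenerated from the network each time, which means a small change to one weight can reshape a large region of the robot in a coordinated way.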

The CPPN generates the makeup of each robot according to the position of each voxel.

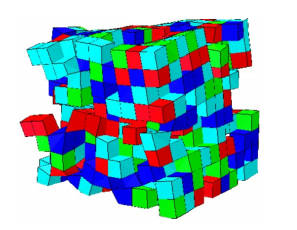

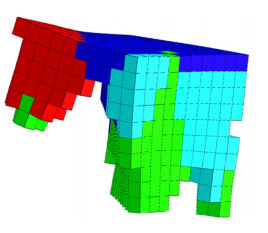

Each voxel in the robot was either an active unit which expanded and contracted at a specified frequency or a passive unit which possessed only material properties - soft or hard. In the videos green voxels are periodic with an amplitude of 20%, light blue are soft and passive, red are periodic but counter phase to green and dark blue are passive and stiff.

The experiment created a generation of robots and tested how quickly they could move. The fastest robots were used to breed the next generation. Variations on the measure of fitness were used in the experiments to see the effect of different selective pressures.

You can see the results and how one line of effective walkers evolved over time in this video. As the poster of the video, Jeff Clune, comments:

"Evolution produces a diverse array of fun, wacky, interesting, but ultimately functional soft robots. Enjoy!"
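The four voxel colors can be written down as a small table plus one formula. The Python sketch below does this under several assumptions that are not stated in the article: the expansion is taken to be sinusoidal, red voxels are given the same 20% amplitude as green, "counter phase" is read as a phase shift of π, and the 1 Hz default frequency is arbitrary. The name `relative_size` is made up for the example.

```python
import math

# Read from the color description; passive voxels never change size.
VOXEL_TYPES = {
    "green":      {"amplitude": 0.20, "phase": 0.0,     "stiffness": None},
    # ASSUMPTION: red uses the same amplitude as green, shifted by pi.
    "red":        {"amplitude": 0.20, "phase": math.pi, "stiffness": None},
    "light_blue": {"amplitude": 0.0,  "phase": 0.0,     "stiffness": "soft"},
    "dark_blue":  {"amplitude": 0.0,  "phase": 0.0,     "stiffness": "stiff"},
}

def relative_size(color, t, frequency_hz=1.0):
    """Size of a voxel at time t, relative to its resting size of 1.0.

    ASSUMPTION: sinusoidal actuation; the frequency value is arbitrary.
    """
    v = VOXEL_TYPES[color]
    angle = 2.0 * math.pi * frequency_hz * t + v["phase"]
    return 1.0 + v["amplitude"] * math.sin(angle)
```

With these assumptions a green voxel is at its largest exactly when a red one is at its smallest, so a body that mixes the two gets opposing pushes and pulls from a single shared clock, while the blue voxels only pass those forces along.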

The key finding is that the more complex encoding resulted in much better performance than the simpler direct encodings used in the past. Changing the measure of fitness mainly altered the body composition of the best robots. Overall it is suggested that the method created life-like complex robots with plausible modes of locomotion.

Some of the walking methods that evolved: L-Walker, Incher, Push-Pull, Jitter, Jumper and Wings.

The question arises: where next? The researchers conclude the paper with:

"Our results suggest that investigating soft robotics and modern generative encodings may offer a path towards eventually producing the next generation of impressive, computationally evolved creatures to fill artificial worlds and showcase the power of evolutionary algorithms."

I'm looking forward to the next paper and especially the next video. And I, for one, welcome our new squishy robotic overlords...


Last Updated (Thursday, 18 February 2021)

In just 1000 generations the genetic algorithm creates some amazing solutions to the task of getting from A to B as fast as possible.
