Detecting When A Neural Network Is Being Fooled

Written by Mike James

Wednesday, 08 March 2017

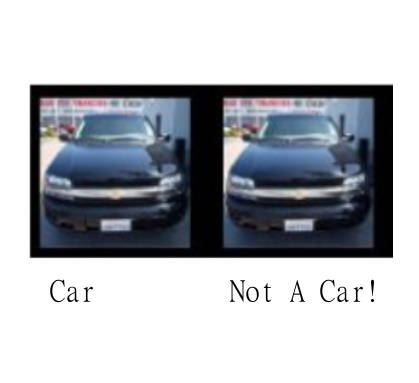

Adversarial images are the biggest unsolved problem in AI at the moment, and progress is being made, but for all the wrong reasons. Now we have some progress on detecting when an image has been specially constructed to fool a neural network.

The existence of adversarial images came as a big shock when they were discovered, but perhaps not a big enough shock. The fact that our great hope for the future of AI, the convolutional neural network, could be fooled by specially constructed images was surprising. After all, we were used to headlines that proclaimed how great neural networks are. When a neural network got an image classification wrong you could sort of see why: yes, that great hairy dog does look a bit like a brush. It was, and is, plausible that neural networks somehow capture a little bit of the way we perceive the world.

And yet... there are adversarial images. An adversarial image is constructed by taking a photograph that the neural network correctly classifies and adding a tiny value to each pixel. These tiny values have to be carefully computed, but when added they cause the neural network to misclassify the image, even though to a human it looks exactly as it did before. This is not how humans work and it puts a distance between us and our favourite neural network.

Some researchers have been exploring the issue, but somehow the deep question of why adversarial images exist at all has been sidelined by the lesser concern that an attacker might pervert the operation of a neural network by offering it adversarial images. In this world view adversarial images are a sort of malware for AI, and what you have to do with malware is stop it.
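To make the construction concrete, here is a minimal sketch of the "tiny value added to each pixel" idea. It is not taken from either paper: it assumes a toy linear classifier with random weights standing in for a real network, and it uses a gradient-sign step, which is one well-known way of computing the perturbation. The names `score`, `perturb`, `epsilon` and the 784-pixel image are all made up for illustration, and clipping pixels back into a valid range is left out.

```python
import random


def score(weights, bias, pixels):
    """Linear classifier score: positive means class 1, otherwise class 0."""
    return sum(w * p for w, p in zip(weights, pixels)) + bias


def sign(value):
    """Return -1, 0 or 1 according to the sign of value."""
    return (value > 0) - (value < 0)


def perturb(weights, pixels, epsilon, current_label):
    """Move every pixel by at most epsilon so as to push the score
    away from current_label.

    For a linear score the gradient with respect to the pixels is simply
    the weight vector, so the step direction is sign(weights).
    """
    direction = -1 if current_label == 1 else 1
    return [p + direction * epsilon * sign(w) for w, p in zip(weights, pixels)]


random.seed(0)
n_pixels = 784  # hypothetical 28x28 greyscale image
weights = [random.uniform(-1, 1) for _ in range(n_pixels)]
image = [random.random() for _ in range(n_pixels)]

# Choose the bias so the clean image is classified as class 1 with margin 1.
bias = 1.0 - sum(w * p for w, p in zip(weights, image))

epsilon = 0.01  # largest change allowed to any single pixel
adversarial = perturb(weights, image, epsilon, current_label=1)

print("clean score:      ", score(weights, bias, image))
print("adversarial score:", score(weights, bias, adversarial))
print("largest pixel change:",
      max(abs(a - b) for a, b in zip(adversarial, image)))
```

No pixel moves by more than `epsilon`, yet the score shifts by `epsilon` times the sum of the absolute weights, and with hundreds of pixels that is enough to cross the decision boundary. A real attack on a deep network has to obtain the gradient by backpropagation rather than reading it off the weights.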

Two new papers, at least, have appeared that suggest that you can detect an adversarial image. The first is from researchers at Symantec:

Detecting Adversarial Samples from Artifacts

Deep neural networks (DNNs) are powerful nonlinear architectures that are known to be robust to random perturbations of the input. However, these models are vulnerable to adversarial perturbations--small input changes crafted explicitly to fool the model. In this paper, we ask whether a DNN can distinguish adversarial samples from their normal and noisy counterparts. We investigate model confidence on adversarial samples by looking at Bayesian uncertainty estimates, available in dropout neural networks, and by performing density estimation in the subspace of deep features learned by the model. The result is a method for implicit adversarial detection that is oblivious to the attack algorithm. We evaluate this method on a variety of standard datasets including MNIST and CIFAR-10 and show that it generalizes well across different architectures and attacks. Our findings report that 85-92% ROC-AUC can be achieved on a number of standard classification tasks with a negative class that consists of both normal and noisy samples.

The key to this technique is the assumption that adversarial images aren't part of the natural distribution of images: they don't occur in nature. Basically, you can estimate how likely you are to see a given image, and adversarial images turn out to be less likely than non-adversarial ones.

The second paper is from researchers at the Bosch Center for AI:

On Detecting Adversarial Perturbations

Machine learning and deep learning in particular has advanced tremendously on perceptual tasks in recent years. However, it remains vulnerable against adversarial perturbations of the input that have been crafted specifically to fool the system while being quasi-imperceptible to a human. In this work, we propose to augment deep neural networks with a small "detector" subnetwork which is trained on the binary classification task of distinguishing genuine data from data containing adversarial perturbations. Our method is orthogonal to prior work on addressing adversarial perturbations, which has mostly focused on making the classification network itself more robust. We show empirically that adversarial perturbations can be detected surprisingly well even though they are quasi-imperceptible to humans. Moreover, while the detectors have been trained to detect only a specific adversary, they generalize to similar and weaker adversaries. In addition, we propose an adversarial attack that fools both the classifier and the detector and a novel training procedure for the detector that counteracts this attack.

OK, this does help with the security problem, but it also demonstrates that adversarial images have something about them that is just not natural.
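The "how likely are you to see this image" idea behind the first paper can be sketched in a few lines. What follows is a toy, not the authors' code: it assumes synthetic 2-D points standing in for the deep features of natural images, a Gaussian kernel density estimate chosen here for illustration, and a threshold set at the 5th percentile of held-out natural samples. The names `log_kernel_density`, `is_suspicious` and `bandwidth` are hypothetical, and the dropout-based uncertainty estimate that the abstract also mentions is not shown.

```python
import math
import random


def log_kernel_density(point, training_points, bandwidth):
    """Log of a Gaussian kernel density estimate evaluated at point."""
    dim = len(point)
    log_norm = -0.5 * dim * math.log(2 * math.pi * bandwidth ** 2)
    exponents = [
        -sum((a - b) ** 2 for a, b in zip(point, t)) / (2 * bandwidth ** 2)
        for t in training_points
    ]
    # Log-sum-exp keeps the sum stable when every kernel value is tiny.
    peak = max(exponents)
    log_sum = peak + math.log(sum(math.exp(e - peak) for e in exponents))
    return log_norm + log_sum - math.log(len(training_points))


random.seed(1)


def natural_feature():
    """Synthetic stand-in for the deep features of one natural image."""
    return (random.gauss(0, 1), random.gauss(0, 1))


train = [natural_feature() for _ in range(200)]
held_out = [natural_feature() for _ in range(100)]
bandwidth = 0.5  # assumed value; in practice this would need tuning

# Threshold: the 5th percentile of log density over held-out natural samples.
held_out_scores = sorted(
    log_kernel_density(p, train, bandwidth) for p in held_out
)
threshold = held_out_scores[int(0.05 * len(held_out_scores))]


def is_suspicious(feature):
    """Flag a feature vector whose estimated density is unusually low."""
    return log_kernel_density(feature, train, bandwidth) < threshold


print(is_suspicious((0.1, -0.2)))  # a point in the middle of the natural data
print(is_suspicious((6.0, 6.0)))   # a point far from anything seen in training
```

In a real detector the features would come from a hidden layer of the network, and an adversarial image need not land as far from the natural data as the second test point does here, which is presumably why the paper combines the density estimate with an uncertainty measure.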

More Information

Detecting Adversarial Samples from Artifacts
On Detecting Adversarial Perturbations

Related Articles

Neural Networks Have A Universal Flaw
The Flaw In Every Neural Network Just Got A Little Worse
The Deep Flaw In All Neural Networks
The Flaw Lurking In Every Deep Neural Net
Neural Networks Describe What They See
Neural Turing Machines Learn Their Algorithms
AI Security At Online Conference: An Interview With Ian Goodfellow

